While audiences around the world know the UK’s BBC best for hit TV shows like Top Gear and Doctor Who, it has been a key force in technological advances since it first started broadcasting almost a century ago.

To this day, its labs are cooking up experiments that may completely change the way we consume audio and video, and I went to take a look at them.

The BBC’s R&D division claims noise-canceling microphones, transatlantic broadcasts and HD TV among the innovations it has driven forward. Today, though, it’s exploring how audio and video can become more flexible, which could lead to personalized content for audiences, easier lives for producers and money saved for a publicly funded broadcaster under pressure to cut costs.

At the BBC’s R&D facility in Salford in the North of England, a team of software developers, designers, human interaction specialists and scientists from fields like psychology and anthropology work alongside academics from universities around the UK on a set of projects all based around the same idea – breaking audio and video up into tiny pieces and doing clever things with those pieces.

Breaking up is hard to do

The idea behind ‘object-based’ media is that you take all the assets for a given TV or radio show (the video clips, the accompanying audio, any music soundtrack, and extras like subtitles and sign language translations), wrap them up in useful metadata and then use software to ‘remix’ them as needed.

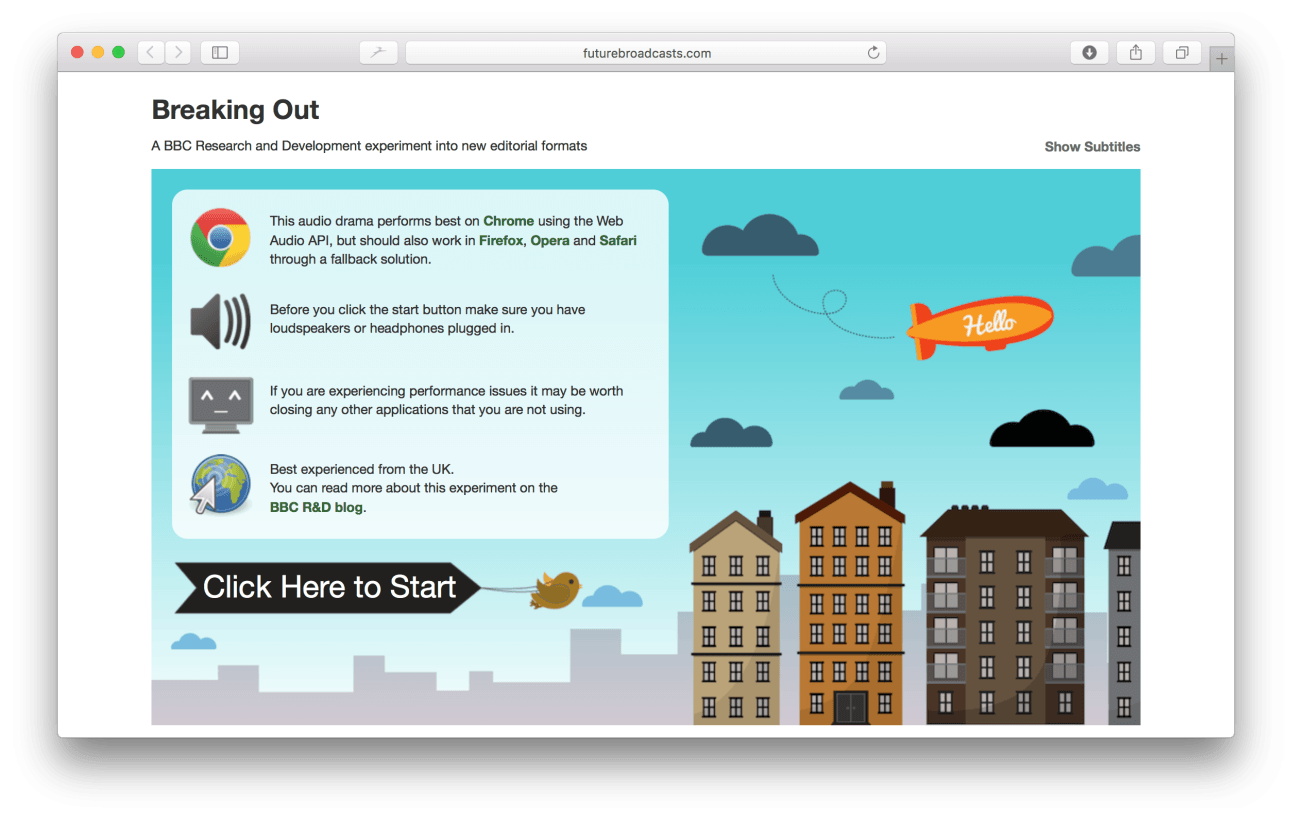

Perhaps the easiest example to grasp is one we’ve been following at TNW since it was first revealed in early 2012: Perceptive Media.

The brainchild of BBC R&D’s Ian Forrester, Perceptive Media uses information about individual viewers and the environment they’re in to tweak a program in clever ways. The initial 2012 experiment was a radio play about a woman stuck in an elevator. Aspects of the drama changed depending on the listener’s location, the local weather, the time of day and what social network cookies were in his or her browser.

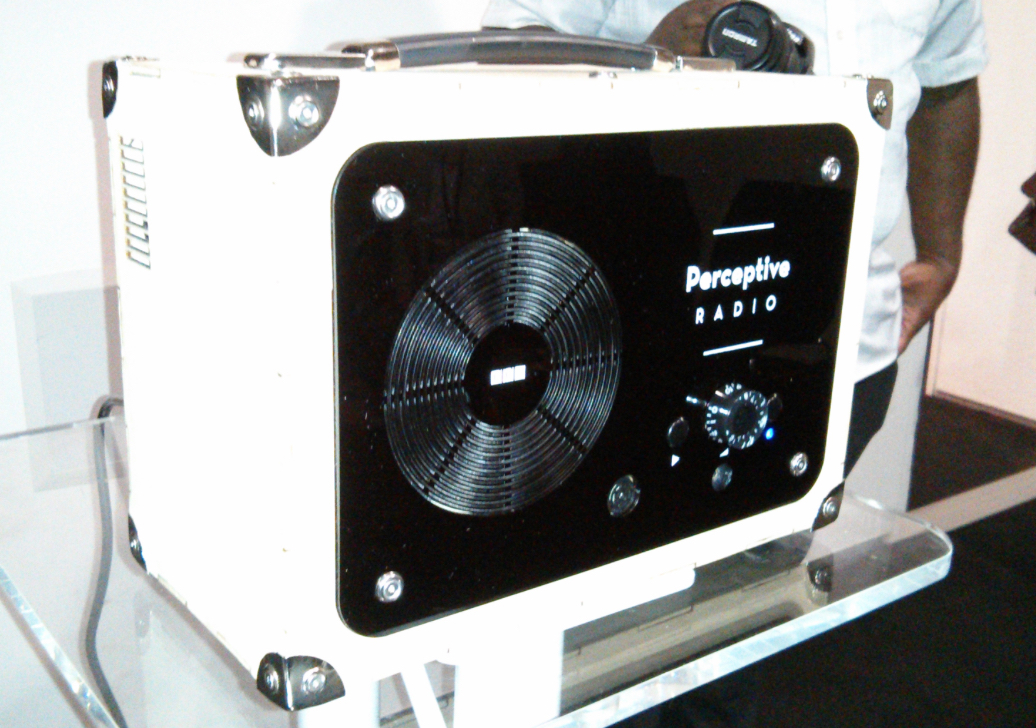

While that was quite a simple demo, it got the idea across, and by the next year it had evolved into a piece of hardware called the Perceptive Radio.

This concept device sported light and proximity sensors, along with a microphone, all of which could be used to influence how content is played back. The playback volume might rise with ambient noise levels, for example, or the radio might play ‘happy’ music in a bright room and more sinister-sounding tunes in the dark.
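The BBC hasn’t said how the radio maps its sensor data to playback decisions, so the Python sketch below is purely illustrative. The thresholds (a 40 dB quiet-room baseline, 100 lux for a ‘light’ room), the `SensorReadings` and `PlaybackSettings` types and the `adapt_playback` function are all hypothetical; the point is only to show how readings like these could drive volume and mood.

```python
from dataclasses import dataclass


@dataclass
class SensorReadings:
    ambient_noise_db: float  # microphone level in dB (hypothetical scale)
    light_lux: float         # light sensor reading
    listener_nearby: bool    # proximity sensor


@dataclass
class PlaybackSettings:
    volume: float  # 0.0 to 1.0
    mood: str      # which soundtrack variant to use: "happy" or "sinister"


def adapt_playback(readings: SensorReadings, base_volume: float = 0.5) -> PlaybackSettings:
    """Hypothetical rules mapping sensor readings to playback settings."""
    # Add 0.1 volume for every 10 dB of noise above an assumed 40 dB baseline.
    extra = max(0.0, readings.ambient_noise_db - 40.0) / 10.0 * 0.1
    volume = min(1.0, base_volume + extra)
    if not readings.listener_nearby:
        # Nobody close to the radio: play slightly louder (assumed rule).
        volume = min(1.0, volume + 0.1)
    # Bright room gets the 'happy' variant, a dark one the 'sinister' variant.
    mood = "happy" if readings.light_lux >= 100.0 else "sinister"
    return PlaybackSettings(volume=round(volume, 2), mood=mood)
```

For instance, `adapt_playback(SensorReadings(60.0, 300.0, True))` returns a volume of 0.7 with the ‘happy’ variant, while a quiet, dark room keeps the base volume and switches to ‘sinister’.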

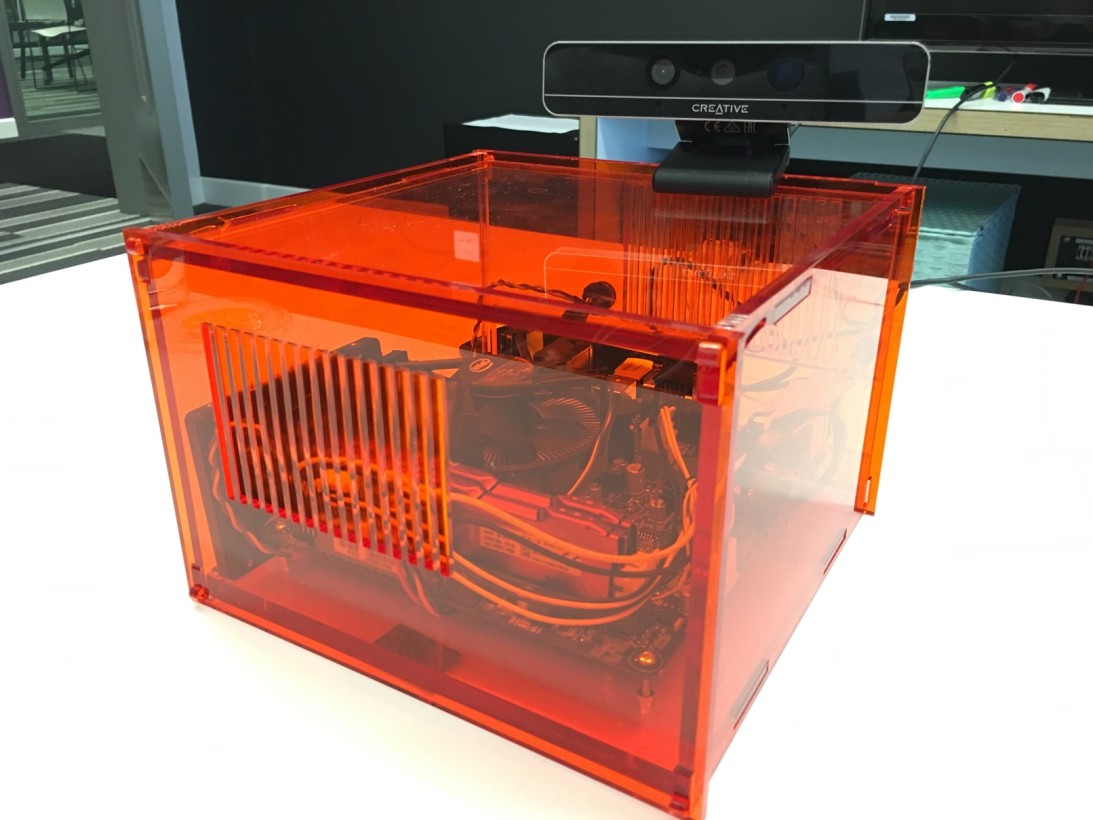

A second Perceptive Radio has now been built in partnership with Lancaster University. While it lacks the retro visual stylings of the original, it includes a face detection camera and geolocation support. Again, these can be used to influence how content is played to different listeners.

These radios aren’t designed to ever go on sale. As BBC R&D’s Phil Stenton explains, the perfect ‘perceptive radio’ is your smartphone, as it already knows lots about you and the world around you. So, it’s easy to imagine that a perceptive radio app might emerge from the BBC in the future.

In recent times, Ian Forrester has turned his attention to ‘Visual Perceptive Media.’ As we first reported late last year, this applies the same principles to video-based content.

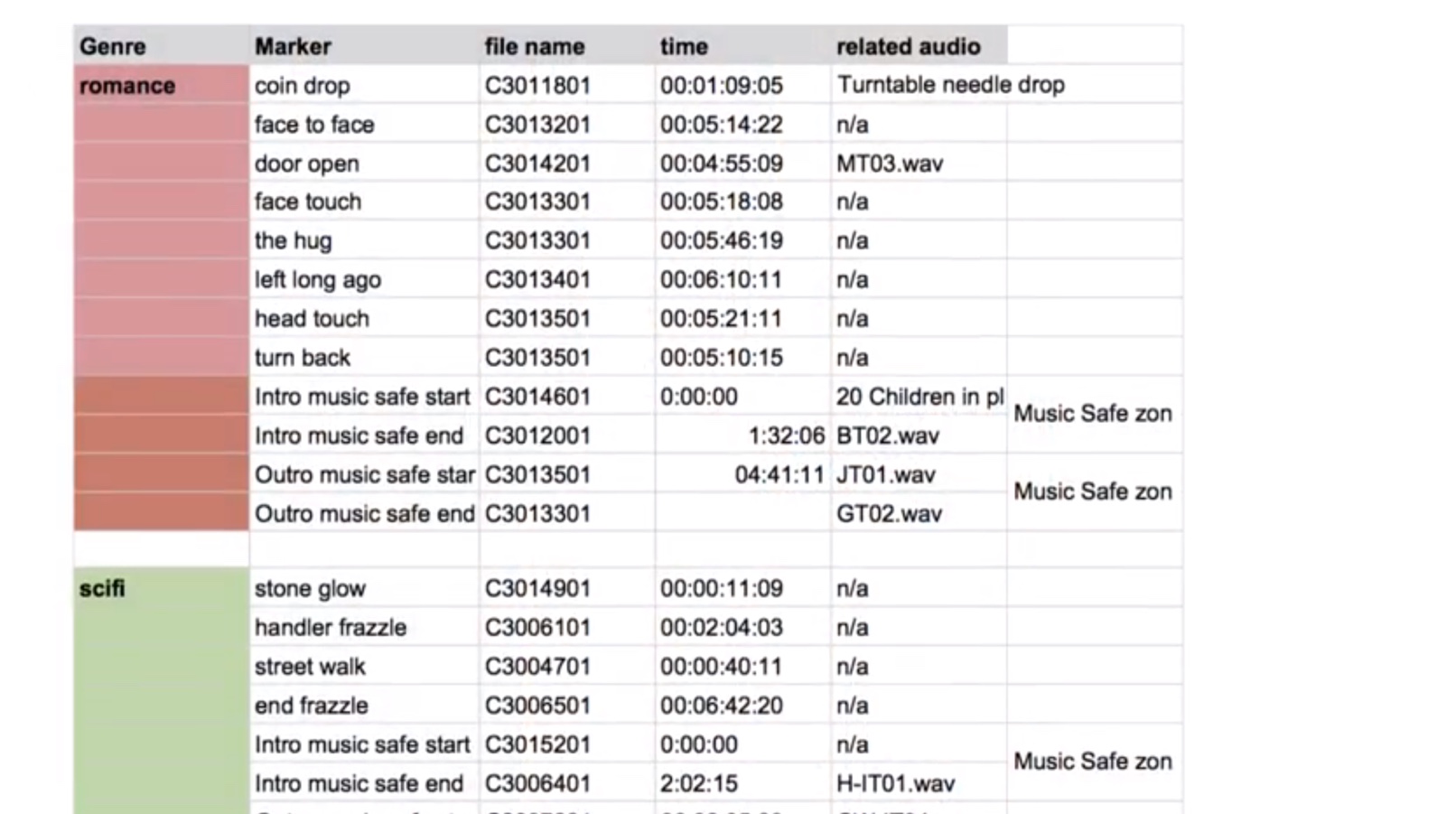

For the first experiment in Visual Perceptive Media, the BBC worked with a screenwriter who created a short drama with multiple starts and endings. In addition to the variable plot, a number of different soundtracks were prepared, and the video was treated with a range of color gradings to give it different moods, from cold and blue to warm and bright.

The video is presented in a Web app that harnesses data about a user’s interests, personality and music taste to find a ‘path’ through the chunks of content, creating a different version of the drama for each viewer.
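The app’s actual selection logic isn’t described in detail, so here is a minimal, hypothetical sketch of the ‘path’ idea. It assumes the story is a sequence of slots, each holding alternative versions (openings, color grades, soundtracks, endings) tagged with traits, and that the version whose tags best overlap the viewer’s profile wins. The `Chunk` type, the tag vocabulary and the overlap scoring are all assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Chunk:
    clip_id: str
    tags: set[str] = field(default_factory=set)  # hypothetical trait/interest tags


def score(chunk: Chunk, profile: set[str]) -> int:
    """Number of the viewer's traits that this chunk is tagged with."""
    return len(chunk.tags & profile)


def choose_path(slots: list[list[Chunk]], profile: set[str]) -> list[str]:
    """Pick the best-matching version for each slot in the story.

    max() returns the first maximal element, so ties go to the
    first-listed (default) version of each slot.
    """
    return [max(versions, key=lambda c: score(c, profile)).clip_id for versions in slots]


# Illustrative story: opening, color grade, ending (all names made up).
story = [
    [Chunk("open_calm", {"calm"}), Chunk("open_tense", {"thriller"})],
    [Chunk("grade_warm", {"extrovert"}), Chunk("grade_cold", {"introvert"})],
    [Chunk("end_happy", {"pop"}), Chunk("end_dark", {"thriller", "metal"})],
]
```

With a profile of `{"thriller", "introvert", "metal"}`, `choose_path(story, ...)` yields `["open_tense", "grade_cold", "end_dark"]`, while an empty profile falls back to the first version in every slot.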

While this initial experiment is pretty basic, it’s easy to see how the principle could be used to adapt content for viewers who have missed previous episodes of a show, or to automatically include accessibility features for those who need them.

The BBC is also interested in using this approach to automatically swap a show’s soundtrack for another to meet geographic licensing restrictions, which is much easier than going back into the edit suite to rebuild the show completely.

Thinking smart, saving cash

It’s not easy to be a publicly funded broadcaster when the government of the day has an agenda to cut back public spending. So, the BBC is exploring ways to use technology to ‘do more with less.’

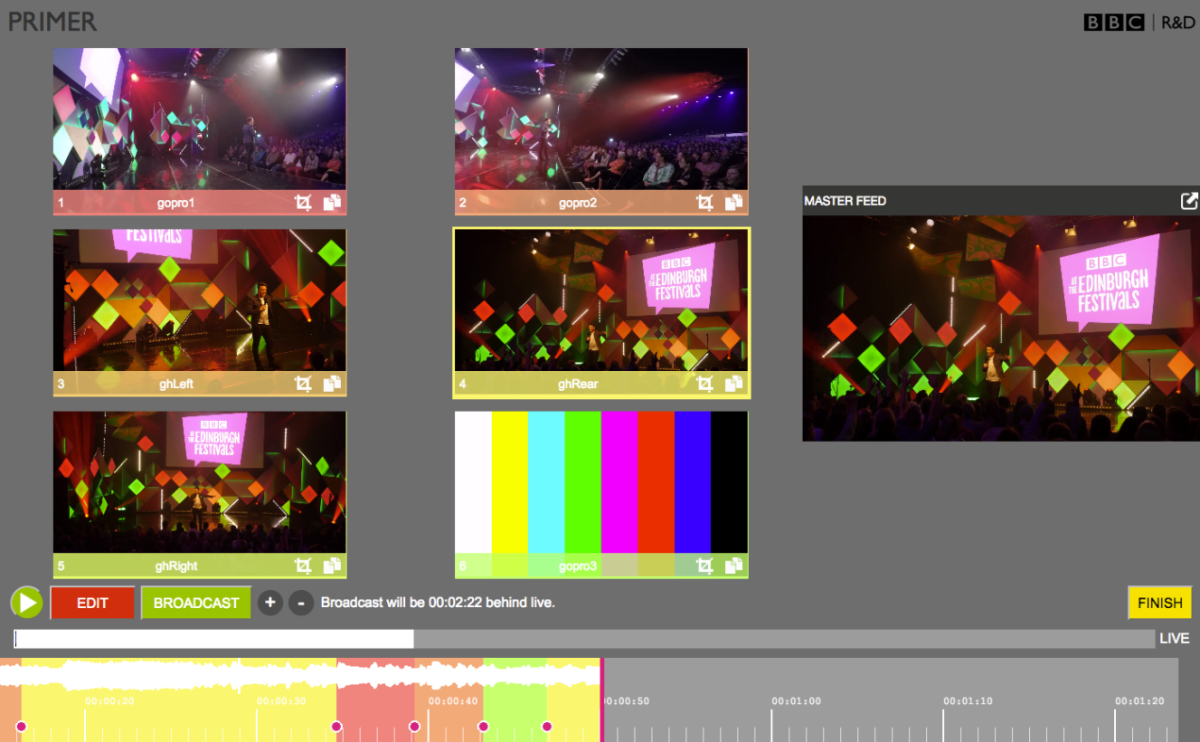

Primer is a project that uses the same ‘object-based’ approach as Perceptive Media, but for an entirely different purpose. It was first tested at the Edinburgh Festival Fringe in Scotland last summer, but it could be used anywhere: to cover a festival stage over a few days, say, or by a news reporter capturing a press conference or other event from multiple camera angles with very little hassle and expense.

Primer consists of a few small video cameras, a mixture of GoPros and 4K units. These can be quickly set up at an event and hooked up over the internet to a browser-based editing tool. The clever bit is that even with just a few real cameras, editors can create as many ‘virtual cameras’ as they like by cropping small windows from the 4K units’ huge field of view.
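To make the virtual camera idea concrete, here’s a sketch that computes a crop window inside a single 4K frame. It assumes a UHD source of 3840x2160 pixels and a 1920x1080 window by default; the `Rect` type, the `virtual_camera` function and its clamping behavior are illustrative, not Primer’s actual code.

```python
from dataclasses import dataclass

# Assumed UHD "4K" source frame size in pixels.
SOURCE_W, SOURCE_H = 3840, 2160


@dataclass(frozen=True)
class Rect:
    x: int       # left edge of the crop, in source pixels
    y: int       # top edge of the crop
    width: int
    height: int


def virtual_camera(center_x: int, center_y: int, width: int = 1920, height: int = 1080) -> Rect:
    """Crop window of the given size centered on a point, kept inside the source frame."""
    if width > SOURCE_W or height > SOURCE_H:
        raise ValueError("window is larger than the source frame")
    # Center the window on the point, then clamp it so it never leaves the frame.
    x = min(max(center_x - width // 2, 0), SOURCE_W - width)
    y = min(max(center_y - height // 2, 0), SOURCE_H - height)
    return Rect(x, y, width, height)
```

The arithmetic explains why this works neatly: a 1920x1080 window is exactly half the width and half the height of a UHD frame, so four non-overlapping 1080p ‘cameras’ fit inside one 4K shot with no upscaling. Smaller windows would have to be scaled up to reach 1080p.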

Once video is streaming into the Web app, it can be edited in real time, either live or just behind live. This is ideal when a broadcaster doesn’t want to show, say, an act at the Glastonbury Festival live, but wants to trim out any swearing and quickly transmit a neat highlights package afterwards.

Once the edit is complete in the browser, an edit decision list is sent out to external software that exports a 1080p transmittable video from the original footage.
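An edit decision list is a list of cuts that points back at the original footage rather than containing any pixels itself, which is why the browser only needs to send this small file to the export software. The sketch below is loosely modeled on the columns of classic CMX 3600-style EDLs (event number, source, source in/out, record in/out), but it is a simplified, hypothetical format, not what Primer actually exports. It also assumes the UK’s 25 fps frame rate.

```python
from dataclasses import dataclass

FPS = 25  # assumed UK broadcast frame rate


@dataclass
class EdlEvent:
    source: str   # real or virtual camera name (hypothetical)
    src_in: int   # first source frame used, inclusive
    src_out: int  # frame after the last one used, exclusive


def timecode(frames: int, fps: int = FPS) -> str:
    """Convert a frame count to non-drop-frame HH:MM:SS:FF timecode."""
    seconds, ff = divmod(frames, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def render_edl(events: list[EdlEvent]) -> list[str]:
    """One line per cut: number, source, source in/out, record in/out."""
    lines = []
    rec = 0  # current position on the output (record) timeline, in frames
    for n, ev in enumerate(events, start=1):
        length = ev.src_out - ev.src_in
        lines.append(
            f"{n:03d} {ev.source} {timecode(ev.src_in)} {timecode(ev.src_out)} "
            f"{timecode(rec)} {timecode(rec + length)}"
        )
        rec += length
    return lines
```

A ten-second shot from `cam1` followed by five seconds from `vcam2` starting 40 seconds into its recording produces two lines whose record columns run 00:00:00:00 to 00:00:10:00 and then 00:00:10:00 to 00:00:15:00.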

Although Primer is simply a demo, it could be evolved into a product to be used by TV production teams. Another idea that has been mooted is to create a Primer-based kit that schools can use to easily and cheaply create video productions in-house.

Elastic media

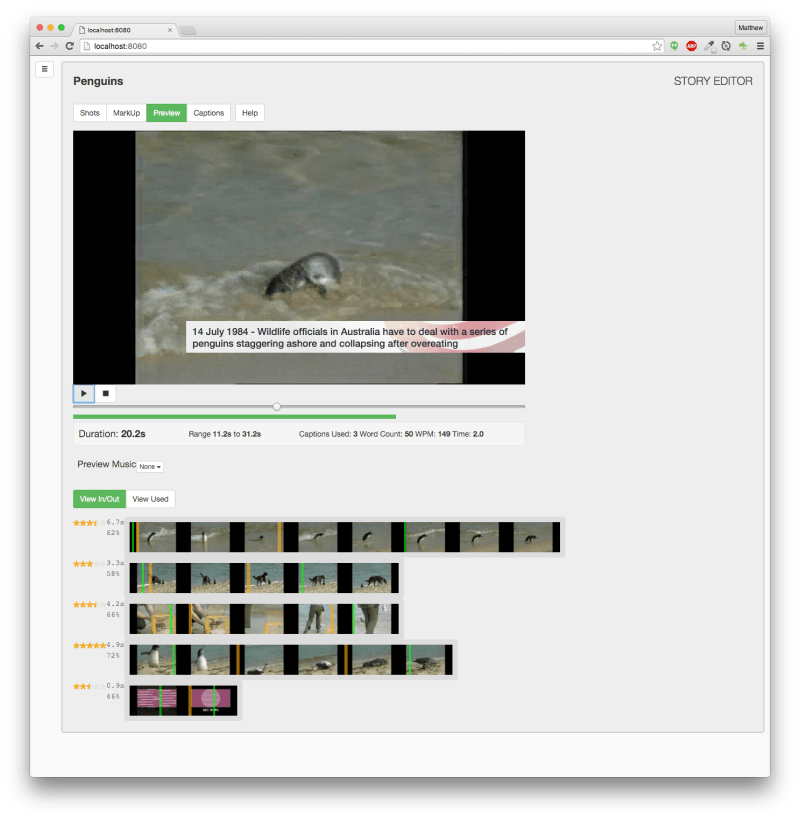

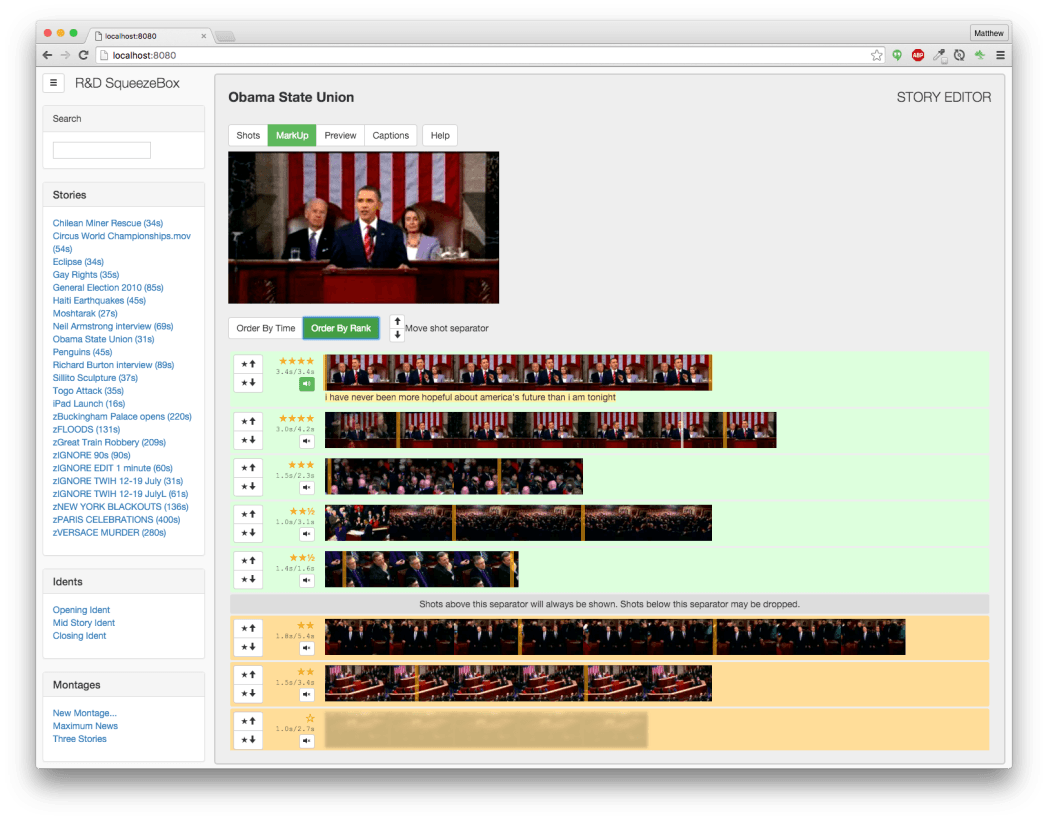

If BBC R&D can create flexible content, surely it can be used to adjust media to specific lengths too? That’s the idea behind SqueezeBox, a tool that makes flexible-length montages from news reports.

The project developed out of ‘Responsive Radio,’ an experiment to create an audio documentary that listeners could adjust in length according to how much time they had available. The Web app would dynamically cut non-essential parts of the story out, or add extra content, depending on the user’s time preference.
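Responsive Radio’s actual editing rules aren’t public, so this is a hedged sketch of one simple way to do it. Essential segments are always kept, optional ones are added in priority order while they still fit the listener’s time budget, and the result plays back in the original story order. The `Segment` type and its priority scale are hypothetical, and the greedy approach is a simplification that won’t always find the combination that fills the time most exactly.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    seconds: int
    essential: bool = False  # must always be kept
    priority: int = 0        # higher = more worth keeping (hypothetical scale)


def fit_to_time(segments: list[Segment], budget: int) -> list[str]:
    """Greedily choose segments for a time budget, keeping story order."""
    chosen = {i for i, s in enumerate(segments) if s.essential}
    used = sum(segments[i].seconds for i in chosen)
    # Optional segments, highest priority first; sorted() is stable,
    # so equal priorities keep their story order.
    optional = sorted(
        (i for i in range(len(segments)) if i not in chosen),
        key=lambda i: -segments[i].priority,
    )
    for i in optional:
        if used + segments[i].seconds <= budget:
            chosen.add(i)
            used += segments[i].seconds
    # Play back in the original order of the documentary.
    return [segments[i].name for i in sorted(chosen)]


doc = [
    Segment("intro", 60, essential=True),
    Segment("history", 300, priority=1),
    Segment("interview", 240, priority=3),
    Segment("analysis", 180, priority=2),
    Segment("outro", 30, essential=True),
]
```

With a ten-minute budget (600 seconds), `fit_to_time(doc, 600)` keeps the intro, interview, analysis and outro and drops the history section. Note that essential segments are kept even if they alone exceed the budget.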

SqueezeBox takes that principle and aims to make it useful for TV newsrooms. Rolling news stations often create montages of recent stories to fill time in the schedule. For example, the commercially operated BBC World station has ad breaks but doesn’t always sell the space, so it fills those unsold slots with combinations of news footage, text and music.

The problem for BBC World is that creating those montages takes time that might be better spent working on the original reports coming in from correspondents around the globe. Using this problem as inspiration, the R&D team created SqueezeBox as a tool that allows an editor to import a bunch of clips, rank them by priority, highlight the most important parts and then add text captions.
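SqueezeBox’s montage logic isn’t documented in detail either, but its inputs (a ranked list of clips with highlighted sections) suggest a two-pass approach, sketched here under that assumption. The first pass fits the highlighted parts of as many top-ranked clips as possible; the second spends any leftover time extending the most important clips toward their full length. `Clip` and `build_montage` are illustrative names, and durations are whole seconds for simplicity.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    name: str
    highlight: int  # seconds of editor-marked "must show" material
    full: int       # total usable seconds (>= highlight)


def build_montage(ranked: list[Clip], target: int) -> list[tuple[str, int]]:
    """Allocate screen time to clips given most important first."""
    selected: list[Clip] = []
    lengths: list[int] = []
    remaining = target
    # Pass 1: include each clip's highlight, in priority order, while it fits.
    for clip in ranked:
        if clip.highlight <= remaining:
            selected.append(clip)
            lengths.append(clip.highlight)
            remaining -= clip.highlight
    # Pass 2: extend the selected clips toward full length, most important first.
    for i, clip in enumerate(selected):
        extra = min(clip.full - lengths[i], remaining)
        lengths[i] += extra
        remaining -= extra
    return list(zip((c.name for c in selected), lengths))


stories = [
    Clip("election", 10, 25),
    Clip("storm", 8, 20),
    Clip("sport", 6, 12),
    Clip("markets", 12, 30),
]
```

For a 30-second slot, `build_montage(stories, 30)` gives the election story 16 seconds and keeps only the highlights of the storm and sport clips; the markets clip doesn’t fit. When every clip has been used in full, the montage can come out shorter than the target.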

From that, the app generates a montage that could be used either on television or online. Staff working on the local BBC news TV show in the North West of England have shown interest in using it to generate the short news roundups that they post to Twitter and Facebook ahead of the live bulletin.

Like the other object-based experiments that BBC R&D is working on, SqueezeBox is an experiment that isn’t intended to be directly productized, but the work could lead to tools that newsrooms can build into their workflows in the future.

In the meantime, R&D continues to hone the concept. It’s recently added integration with an internal BBC tool that converts speech to text. Using this, editors can highlight particular words in the audio that they want the montage to include. At these points, the music soundtrack will fade and the original footage’s audio will play. It’s not perfect yet, but it’s another feature that would make life easier for newsrooms around the world.
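The music dip described here is a standard audio ‘ducking’ effect. Below is a minimal sketch, assuming the speech-to-text tool supplies start and end times in seconds for each highlighted word. The music bed stays at full gain, ramps linearly down to 20% over half a second before each word, holds there while it plays, and ramps back up afterwards. The gain level, fade length and `music_gain` function are assumptions, not the BBC’s settings.

```python
def music_gain(t: float, highlights: list[tuple[float, float]],
               duck: float = 0.2, fade: float = 0.5) -> float:
    """Gain (0..1) to apply to the music bed at time t, in seconds.

    highlights: (start, end) times of words whose original audio should
    be heard (assumed to come from the speech-to-text timestamps).
    """
    gain = 1.0
    for start, end in highlights:
        if start <= t <= end:
            g = duck  # word is playing: hold the music down
        elif start - fade < t < start:
            # Linear fade down ahead of the highlighted word.
            g = 1.0 - (1.0 - duck) * (t - (start - fade)) / fade
        elif end < t < end + fade:
            # Linear fade back up after it.
            g = duck + (1.0 - duck) * (t - end) / fade
        else:
            g = 1.0
        gain = min(gain, g)  # overlapping fades: keep the quietest
    return gain
```

In a browser-based tool like the ones described in the next section, the same envelope could be scheduled on a Web Audio `GainNode` using `AudioParam.linearRampToValueAtTime()` rather than computed sample by sample.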

Using the Web as a canvas

One thing that struck me when talking to the people working on all of these projects was that they were using the Web browser as their canvas and working with free-to-use, open technologies like OpenGL, Web Audio, Twitter Bootstrap and Facebook React.

The developers I spoke to at BBC R&D were keen to work like this, as it allows them to build cross-platform tools that can easily be tested by external parties. It also fits into a wider ‘IP Studio’ vision within the BBC, whereby every element of television production is gradually shifted to internet-based technologies, from sending footage back to HQ from an outside broadcast truck to eventually transmitting it to viewers. Since 4K video can’t currently be sent to your TV via terrestrial aerials, it will have to be delivered over the internet.

Open innovation

BBC R&D’s approach to openness doesn’t end there. Team members regularly blog about their progress. There’s also a community developing on GitHub around various codebases that the BBC has open-sourced, attracting interest from the likes of Samsung and Google.

Some may argue that innovation like this shouldn’t be done with funds from the public purse and that the private sector is better at moving media technology forward. Adrian Woolard, the head of BBC R&D’s Salford lab, says that the point of the BBC doing this is that everyone can benefit from the research, rather than it being commercialized in proprietary technology.

Woolard also notes that while the private sector may do well at addressing the needs of particular high-value audiences, the BBC is better placed to build things like accessibility features that can provide benefit to the whole of the UK, as well as standards that can be used by all.

BBC R&D’s work also ripples out to benefit the wider research community. The Perceptive Radio experiment with Lancaster University is one of four academic collaborations that are underway right now.

York University is researching the mechanics of flexible stories that maintain coherence, story arc, ambience and feel. A partnership with Nottingham University is exploring the idea of engaging with media through objects. Phil Stenton gives the example of “guitars that relive their best performances – object-media fusions that can be gifted and enjoyed in the moment.”

Meanwhile, a collaboration with Newcastle University is examining the creation of media content that responds to the actions of the audience. This is being tested with a cookery show project that explores how to provide recipe guides that keep pace with what’s happening in the viewer’s kitchen.

The BBC’s experiments with object-based media could soon bear more fruit that you can try for yourself. In the past year, the BBC Taster site has featured two interactive video projects created in conjunction with BBC R&D, Our World War and Casualty: First Day, that offer insights into how interactive media is developing.

As TV viewing increasingly moves online, the opportunities for subtle (and not so subtle) personalization of the shows we watch are enormous. Some of the most interesting ideas for how that might happen are coming out of BBC R&D.
