If you’ve been using the new Google Photos app announced yesterday at Google I/O, you may be impressed with how smooth the interface is, or how easily the service indexes your photos.

There’s a lot going on behind the scenes to make the magic happen: things you’re never supposed to see, but which will nonetheless make you a happy Photos user.

After the keynote address, Google went into more detail with a small group of media folk about what makes Photos special. While our review will give you some insight into how Photos performs for users, we now know what makes the app stand out.

Free to use, Photos also comes with unlimited photo storage. That free storage doesn’t come without a price, though; your larger photos are reduced to 16 megapixels, part of a “re-encoding” process. According to Google, its focus is on image quality, not file size or type.
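Google didn’t spell out how the resize works, but the arithmetic behind a 16-megapixel cap is easy to picture. The sketch below is an illustration only: it assumes the aspect ratio is preserved and that photos already under the cap are left alone, and the function name and rounding choice are ours, not Google’s.

```python
import math

# Assumed cap: 16 megapixels, as described in the article.
MAX_PIXELS = 16_000_000


def fit_to_pixel_budget(width, height, max_pixels=MAX_PIXELS):
    """Return (width, height) scaled down to fit within max_pixels.

    Hypothetical helper: keeps the aspect ratio and rounds down so the
    result never exceeds the budget. Smaller images are returned unchanged.
    """
    if width * height <= max_pixels:
        return width, height
    # Scaling both sides by sqrt(budget / area) shrinks the area to the budget.
    scale = math.sqrt(max_pixels / (width * height))
    return max(1, int(width * scale)), max(1, int(height * scale))


if __name__ == "__main__":
    print(fit_to_pixel_budget(6000, 4000))  # a 24-megapixel photo
    print(fit_to_pixel_budget(4000, 3000))  # a 12-megapixel photo
```

A 24-megapixel shot loses roughly 18 percent of its width and height under this scheme, while a 12-megapixel photo passes through untouched.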

To that end, Google points out that images stored in Photos have “identical visual quality” to the originals. Even zoomed in, it’s hard to see any loss in quality.

Google won’t back up your original image files automatically, but you can choose to do so via Google Drive or a hard drive. It’s only when you’re backing your images up in Drive that Google Photos will suggest you remove pictures stored on your device to free up memory.

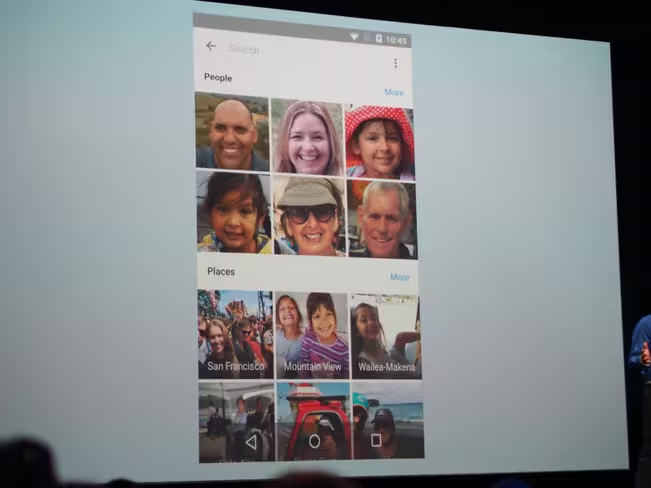

Another impressive Google Photos feature shown to the world was the app’s ability to scan pics and recognize people or places. If you take a lot of photos of one particular person, Google Photos will give them their own tag. Snap a lot of photos in a favorite vacation spot, and that location also gets its own tag.

To know whom to assign a special tag, Google relies on facial recognition. As it scans your pics and recognizes the same face across multiple photos, Google Photos knows you have a special interest in a particular person. It can even detect the same person at different ages, and index your pics according to when you took them for a linear timeline.

That same effort carries over to other things, too. Animals, even specific breeds, can be identified. The app may even work out that you took a picture in a particular location, whether or not you had location data turned on.

Google achieves this impressive recognition via machine learning. Just as you’ll find in Google Search, Google Photos can recognize specific types of animals, and knows over 250,000 landmarks around the world.

The reason Google decided to scan photos has a lot to do with older images. If you snapped a photo with a point-and-shoot at the Eiffel Tower five years ago, you may not have location data for that file. Similarly, old family photos of the family dog can be scanned and identified, then automatically assigned a tag.

In-app search is another reason photo scanning is a special feature. If you were to search for ‘Golden Retriever’ in the Google Photos app, you’d be shown those old family photos — because Google scanned them and knew the dog was a Golden Retriever (handy if you can’t recall the dog’s name).

This also applies to more generic, recognizable things like the beach or a bicycle. The scanning feature also allows you to search Google Photos while offline and get the same results you would while connected to the cloud.
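One way to picture why scanned labels make search fast, and why it could keep working offline, is a simple lookup table from label to photos. The sketch below is our own illustration, not Google’s implementation: the file names and labels are made up, and it assumes the recognition step has already produced a list of labels for each photo.

```python
from collections import defaultdict


def build_index(labels_by_photo):
    """Map each lowercase label to the set of photos that carry it."""
    index = defaultdict(set)
    for photo, labels in labels_by_photo.items():
        for label in labels:
            index[label.lower()].add(photo)
    return index


def search(index, query):
    """Return the photos matching a label, ignoring case and stray spaces."""
    return sorted(index.get(query.strip().lower(), set()))


if __name__ == "__main__":
    # Hypothetical labels, standing in for whatever the scanner assigns.
    scanned = {
        "family_1998.jpg": ["Golden Retriever", "dog", "beach"],
        "paris_2010.jpg": ["Eiffel Tower", "landmark"],
        "ride.jpg": ["bicycle", "beach"],
    }
    index = build_index(scanned)
    print(search(index, "golden retriever"))
    print(search(index, "Beach"))
```

Because the table is just a small data structure, a copy kept on the phone would answer the same queries without a connection.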

Now separate from Google Plus, Photos didn’t leave all the fun editing tools behind. You’ll still have the ability to edit your images, but Google Photos does a better job of understanding what you may be trying to do when editing. In scanning your images for the purpose of tagging, Photos also takes into account what the point of interest may be.

Say you snapped a photo of a friend, but they were centered toward the bottom of the image. Adding a vignette in Google Photos would make their face the central point for the vignette effect; as you apply the filter, Google Photos darkens around the person, not the center of the picture. It seems Google knows we’re terrible at framing a photo.
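To make the idea concrete, here is a rough sketch of a vignette whose falloff is measured from a point of interest rather than from the middle of the frame. It is an assumption-laden illustration: the quadratic falloff, the `strength` parameter, and the function name are ours, and it presumes a face detector has already supplied the focus point.

```python
import math


def vignette_gain(x, y, focus, size, strength=0.6):
    """Brightness multiplier for pixel (x, y), darkening away from `focus`.

    `focus` is the (x, y) point of interest, e.g. a detected face, and
    `size` is (width, height). Returns 1.0 at the focus and
    1.0 - strength at the corner farthest from it.
    """
    width, height = size
    fx, fy = focus
    # Normalize by the distance to the farthest corner from the focus.
    max_d = max(
        math.hypot(fx - cx, fy - cy)
        for cx in (0, width - 1)
        for cy in (0, height - 1)
    )
    d = math.hypot(x - fx, y - fy)
    t = d / max_d if max_d else 0.0
    return 1.0 - strength * t * t


if __name__ == "__main__":
    size = (1000, 800)
    face = (300, 700)  # hypothetical face position, low in the frame
    print(vignette_gain(300, 700, face, size))  # at the face
    print(vignette_gain(500, 400, face, size))  # at the image center
```

With the focus set to the face, the image center ends up slightly darkened instead of being the brightest spot, which matches the behavior described above.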

Similarly, editing your photos to lighten or darken them takes humans into account, and treats them differently from background imagery. Skin tones or faces may lighten while backgrounds get a touch darker in an effort to make things of interest stand out.

It’s smart editing based on the context of the picture. While power users may want more fine-tuned editing, the average photo-happy Google Photos user will likely love these tools.

Google also notes that, by knowing where you were and scanning your images, Photos may create panorama views for you. Say you were at the beach, and grabbed some images of a sunset, but didn’t use your phone’s panorama setting.

Google Photos may know you took those photos at the same place based on location data, and will scan them to stitch together a panorama for you. You’ll retain the originals, too, and are free to delete the panorama if you like.
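Google didn’t say how it decides which shots belong together, but a first pass could be as simple as grouping photos taken close together in both space and time. The sketch below assumes exactly that; the `Photo` class, the 50-meter and two-minute thresholds, and the minimum group size are all hypothetical, and the actual stitching step is left out.

```python
import math
from dataclasses import dataclass


@dataclass
class Photo:
    name: str
    lat: float
    lon: float
    taken_at: float  # seconds since the epoch


def distance_m(a, b):
    """Great-circle distance between two photos in meters (haversine)."""
    r = 6_371_000  # mean Earth radius in meters
    p1, p2 = math.radians(a.lat), math.radians(b.lat)
    dphi = p2 - p1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(h))


def panorama_candidates(photos, max_m=50.0, max_gap_s=120.0, min_size=3):
    """Group consecutive photos that are near each other in place and time."""
    groups, current = [], []
    for p in sorted(photos, key=lambda p: p.taken_at):
        if current:
            prev = current[-1]
            too_far = distance_m(prev, p) > max_m
            too_late = p.taken_at - prev.taken_at > max_gap_s
            if too_far or too_late:
                groups.append(current)
                current = []
        current.append(p)
    if current:
        groups.append(current)
    # Only groups with enough frames are worth trying to stitch.
    return [g for g in groups if len(g) >= min_size]


if __name__ == "__main__":
    shots = [
        Photo("sunset_1.jpg", 36.6002, -121.8947, 1000.0),
        Photo("sunset_2.jpg", 36.6002, -121.8946, 1010.0),
        Photo("sunset_3.jpg", 36.6003, -121.8946, 1020.0),
        Photo("dinner.jpg", 36.6500, -121.8000, 9000.0),
    ]
    for group in panorama_candidates(shots):
        print([p.name for p in group])
```

A real system would still need to check that the frames overlap visually before stitching, which is where the image scanning comes in.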

If enough different images and/or video were snapped at the same place, Google Photos will make a small video montage for you — another holdover from the Google Plus days. You can even change the music or filter of your montage, which will also alter the automatic editing.

Rather than full clips, Google Photos also picks parts of a video that suit the soundtrack and filter it automatically assigns.

If you went to an event that looked dreary due to weather — but you had a ton of fun — you could edit away the downtempo feel Google Photos assigned it. Instead, a lighter filter and soundtrack could also lead to different portions of a video being used. Photos may even use different pics where you’re smiling more.

The really impressive thing about Google Photos is how much you’re not seeing. While reading through this article hopefully gave you a better idea of what’s really going on under the hood, Google doesn’t want you worrying about it. Google just wants you to take pictures and let the Photos app take care of the rest.

With what Google Photos has shown us so far, we’re happy to oblige.
