Google is in hot water after child and consumer advocacy groups complained to the Federal Trade Commission that its YouTube Kids app contains content inappropriate for children, reports The Wall Street Journal.

The complaint, filed on Tuesday by the Campaign for a Commercial-Free Childhood and the Center for Digital Democracy, states that the groups found videos that would be “extremely disturbing and/or potentially harmful for young children to view.”

The coalition claims it found videos containing explicit sexual language, jokes about pedophilia and drug use, adult discussions about violence, pornography and suicide, as well as a dance tutorial featuring Michael Jackson’s infamous crotch grab. Here’s a clip with some of these examples from the app.

An FTC spokesperson said the agency has received the complaints and is reviewing them, but couldn’t confirm whether an investigation is underway as those details are private.

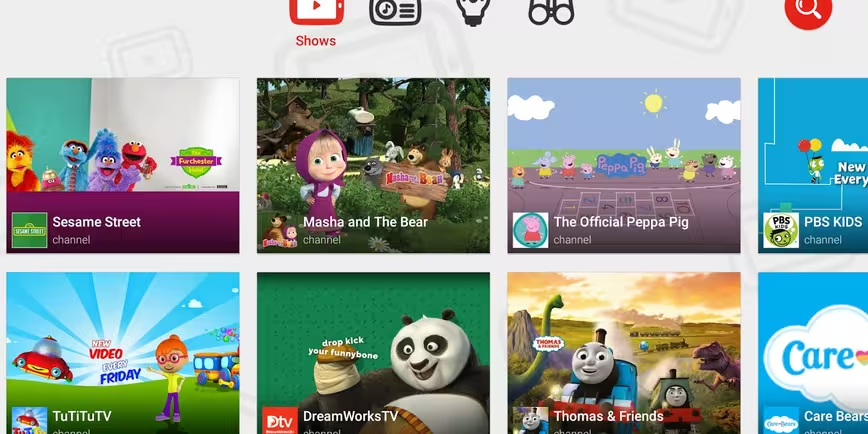

YouTube Kids was launched in February for Android and iOS in the US, and claims to offer curated video content suitable for children. The FTC received complaints about the app last month, when concerned groups and parents found ads targeted at kids blended into its mix of content.

A Google spokeswoman told the WSJ that users can flag videos they consider inappropriate, which YouTube then reviews manually and removes if necessary. She added that parents can turn off the search function so that children do not stumble upon potentially inappropriate content.

We’ve contacted Google to find out more and will update this post when we hear back.

➤ Google’s YouTube Kids App Criticized for ‘Inappropriate Content’ [The Wall Street Journal]
