Facebook has shared more behind-the-scenes information about how the social network is built, this time centered on the enormous amount of data that it collects. Over half a petabyte of new data arrives in the company’s warehouse every 24 hours, and systems run around the clock to turn that raw data into meaningful features and aggregations.

Facebook’s data warehouse has grown considerably (2,500 times) over the past four years. The company says the challenges it faces in handling data for over 1 billion users include scalability and processing: its largest cluster holds more than 100 PB of data and crunches more than 60,000 queries a day in Hive, a data warehouse system that handles data summarization, ad-hoc queries, and analysis of large datasets. And the data stream is only getting bigger.

Frustrations with MapReduce

Facebook says it previously used the MapReduce implementation from Apache Hadoop to help manage its data, but about a year ago it realized that this setup would not handle its growing needs. For reference, MapReduce is a programming model for processing large data sets, typically by distributing the computation across a cluster of computers.
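To make the programming model concrete, here is a minimal single-process sketch of the map, shuffle, and reduce steps using a word count. It is not Hadoop's API and not Facebook's code; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample input are invented for illustration, and a real framework would spread these steps across many machines.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in one input record."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Group emitted values by key, the step a framework runs between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values seen for one key into a single result."""
    return key, sum(values)


def word_count(documents):
    """Run map, shuffle, and reduce in sequence on a list of text records."""
    pairs = [pair for doc in documents for pair in map_phase(doc)]
    return dict(reduce_phase(k, v) for k, v in shuffle(pairs).items())


# Hypothetical input records, chosen only for the example.
counts = word_count(["hive query hive", "query log"])
print(counts)
```

Because each map call and each reduce call depends only on its own input, the work can be split across machines, which is what makes the model suitable for clusters.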

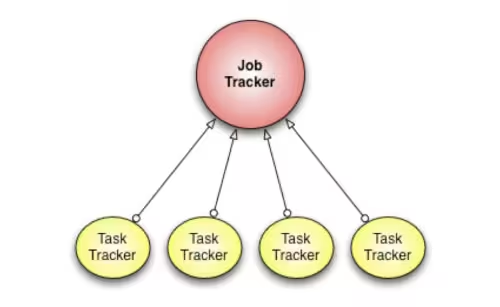

Facebook’s setup paired a single job tracker program with many task trackers to process the data. The job tracker’s role was to manage the cluster’s resources and schedule all the user jobs, handing the work off to the individual task trackers. But as the number of jobs increased, the job tracker couldn’t keep up with its responsibilities well enough for Facebook’s needs. The company said that its cluster utilization would drop “precipitously” because of the overload.

Other frustrations with MapReduce included the fixed “slot-based resource management model”, which divides the cluster into a fixed number of map and reduce slots defined by a specific configuration. Facebook felt this was inefficient because slots are wasted any time the cluster workload doesn’t match the configuration. Also, whenever software upgrades were needed, all running jobs had to be “killed”, resulting in significant downtime.
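The slot mismatch can be illustrated with a small sketch. The numbers and function names below are hypothetical, and the shared pool is only a contrast for the sake of the example; the article does not say how Corona itself allocates resources.

```python
def run_fixed_slots(map_slots, reduce_slots, pending_map, pending_reduce):
    """Return (running_tasks, idle_slots) when slots are split by type up front."""
    running_map = min(map_slots, pending_map)
    running_reduce = min(reduce_slots, pending_reduce)
    idle = (map_slots - running_map) + (reduce_slots - running_reduce)
    return running_map + running_reduce, idle


def run_shared_pool(total_slots, pending_map, pending_reduce):
    """Return (running_tasks, idle_slots) when any slot can take any task.

    Hypothetical comparison only, not a description of Corona.
    """
    running = min(total_slots, pending_map + pending_reduce)
    return running, total_slots - running


# A map-heavy workload on a cluster configured as 6 map slots + 4 reduce slots.
fixed = run_fixed_slots(6, 4, pending_map=10, pending_reduce=1)
shared = run_shared_pool(10, pending_map=10, pending_reduce=1)
print(fixed)   # (7, 3): three reduce slots sit idle while four map tasks wait
print(shared)  # (10, 0)
```

In the fixed split, the idle reduce slots cannot pick up the waiting map tasks, which is the kind of waste Facebook describes.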

Building a better mousetrap

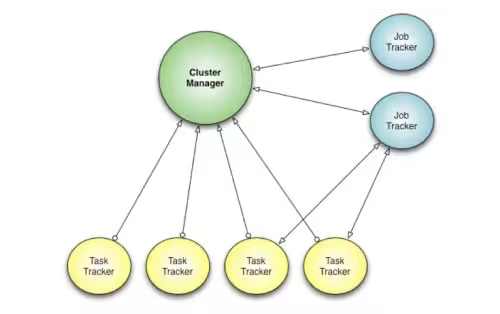

Fed up with these scalability issues and inefficiencies, Facebook’s engineering team says it set out to build a new framework from scratch. It’s called Corona and it “separates cluster resource management from job coordination”.

What’s different from MapReduce is the introduction of a cluster manager, which the company says tracks all the nodes and the amount of free resources. A dedicated job tracker is created for each job. Another key difference from Hadoop, Facebook says, is the scheduling, which is push-based rather than pull-based:

After the cluster manager receives resource requests from the job tracker, it pushes the resource grants back to the job tracker. Also, once the job tracker gets resource grants, it creates tasks and then pushes these tasks to the task trackers for running. There is no periodic heartbeat involved in this scheduling, so the scheduling latency is minimized.

This design frees the cluster manager from monitoring each job’s progress, so it can focus on making fast scheduling decisions. Each component has its own role in the chain, and spreading the responsibilities this way is meant to let the system grow while still processing all of Facebook’s data.
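The push-based flow described above can be sketched roughly as follows. This is a toy, single-process model written for this article, not Corona's actual code or API; every class and method name (`ClusterManager`, `JobTracker`, `TaskTracker`, `request`, `on_grants`, `start`) is an assumption made for illustration.

```python
from collections import deque


class TaskTracker:
    """Stands in for a worker node; it just records the tasks pushed to it."""

    def __init__(self, name):
        self.name = name
        self.received = []

    def run(self, task):
        self.received.append(task)


class ClusterManager:
    """Tracks free nodes only; it never looks at a job's progress."""

    def __init__(self, trackers):
        self.free = deque(trackers)

    def request(self, job_tracker, count):
        granted = [self.free.popleft() for _ in range(min(count, len(self.free)))]
        # Push the grants straight back instead of waiting for a heartbeat.
        job_tracker.on_grants(granted)


class JobTracker:
    """One instance per job; turns resource grants into tasks."""

    def __init__(self, job_id, tasks):
        self.job_id = job_id
        self.pending = deque(tasks)

    def start(self, cluster_manager):
        # Ask for exactly as many grants as there are pending tasks.
        cluster_manager.request(self, len(self.pending))

    def on_grants(self, granted):
        # Push one pending task to each granted task tracker.
        for tracker in granted:
            tracker.run((self.job_id, self.pending.popleft()))


trackers = [TaskTracker(f"node-{i}") for i in range(3)]
manager = ClusterManager(trackers)
job = JobTracker("job-1", ["map-0", "map-1"])
job.start(manager)
print([t.received for t in trackers])
```

In a pull-based design, by contrast, a task tracker would only learn about new work the next time it polled, so the polling interval adds to scheduling latency.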

Operation: Deployment

The next challenge facing Facebook was getting Corona deployed to the entire system. It says it staged this in three phases. The first was similar to any beta release: Corona went out to 500 machines in the cluster so the team could get feedback from early adopters. Next, it moved on to handling what it says were “non-critical workloads”, which exposed the first scale problem: the cluster manager couldn’t handle 1,000 nodes, so it slowed things down. After tweaking it, Facebook moved to the third and final phase: taking over all MapReduce jobs.

Facebook says that the process took three months to complete and that Corona was installed across all its systems by the middle of this year. So far, it says it has realized several key benefits from the implementation: a lower average time to refill a slot (down from 66 seconds with MapReduce to 55 seconds), better cluster utilization, more efficient scheduling, and lower job latency.
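As a quick check on what the slot refill figures amount to, the relative improvement can be worked out from the two numbers quoted above. The variable names are illustrative only.

```python
before_s = 66  # average seconds to refill a slot with MapReduce
after_s = 55   # average seconds to refill a slot with Corona

saved_s = before_s - after_s
relative_improvement = saved_s / before_s

print(f"{saved_s} s saved per refill, a {relative_improvement:.1%} reduction")
# prints "11 s saved per refill, a 16.7% reduction"
```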

Corona is still a work in progress within the company, but Facebook says that it has already become an integral part of its data infrastructure. It has also open-sourced the version currently running in production, which is hosted in its GitHub repository for anyone to use and improve upon.

Photo credit: ROBYN BECK/AFP/Getty Images
