Social media, a tool created to guard freedom of speech and democracy, has increasingly been used in more sinister ways. Given its role in lowering trust in media, inciting online violence, and amplifying political disinformation in elections, Facebook isn’t just a space to share “what’s on your mind,” and you’d be naive to believe so.

With just over a year left until the 2020 U.S. presidential election, Facebook announced in a post published yesterday that it has updated its policies on the spread of misinformation and released a set of new tools to better “protect the democratic process.” Facebook will now clearly label false posts and state-controlled media, and will invest $2 million in media literacy projects to help people understand the information they’re seeing online.

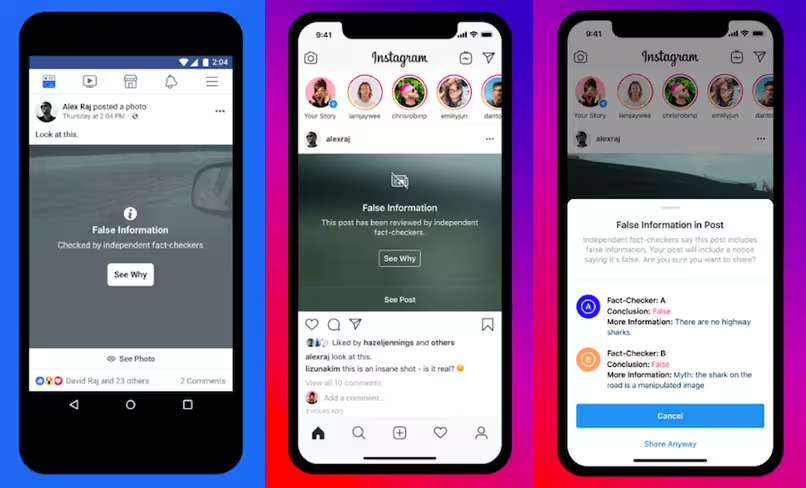

Over the next month, content published on Facebook and Instagram that has been rated false or partly false by a third-party fact-checker will be more prominently labeled so people can better decide for themselves what to read, trust, and share. A pop-up will also appear when users attempt to share posts on Instagram that include content that’s been debunked by its fact-checkers.

According to the social networking company, they’ve “made significant investments since 2016 to better identify new threats, close vulnerabilities, and reduce the spread of viral misinformation and fake accounts.”

This comes after a study by the Oxford Internet Institute found that organized social media manipulation has more than doubled since 2017, with at least 70 countries known to be using online propaganda to manipulate mass public opinion, in some cases on a global scale. Despite there being more social media platforms than ever, Facebook remains the most popular choice for online manipulation, with propaganda campaigns found on the platform in 56 countries.

To fight this, Facebook revealed it has removed four networks of fake, state-backed accounts spreading misinformation. The networks were based in Iran and Russia, countries that have recently been found to spread misinformation not just within their own borders but on a global scale too.

Alongside these updates to protect voters in the U.S., the tech giant introduced a security tool for elected officials and candidates that monitors their accounts to detect signs of hacking, such as login attempts from unusual locations or unverified devices.

Although Facebook is increasing its transparency on the content it hosts, questions still linger as to why the platform allows such content to circulate online in the first place. Earlier this year, during the Australian election, Facebook said it’s not “our role to remove content that one side of a political debate considers to be false,” adding that it only removes content that violates its community standards.

As the face of election campaigns changes with advancing technology, social platforms like Facebook must take responsibility for preventing the spread of misinformation. But there’s no denying that Facebook’s latest steps to curb this issue are promising.
