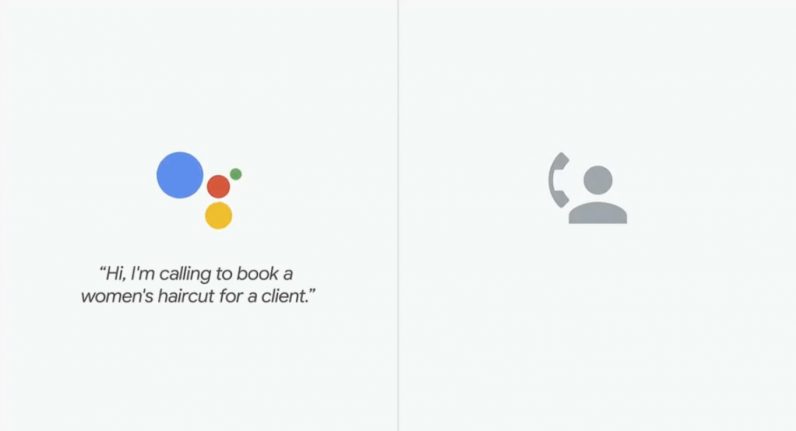

Woman A: “Hello. How can I help you?”

Woman B: “Hi. I’m calling to book a woman’s haircut for a client. I’m looking for something on May 3.”

Woman A: “Sure. Give me one second — sure. What time are you looking for around?”

Woman B: “At 12 p.m.”

Most of you will recognize this phone conversation. Not because it was particularly exciting, but because, as was revealed later, one of the two voices wasn’t human. The caller was an AI-generated bot impersonating a human, created by Google.

Although this first onstage demo of Google Duplex, as the new technology is called, was pre-recorded, the audience was left in awe. With its many “ums” and “ahs,” the conversation sounds completely natural. And everyone listening to the recording will come to the same conclusion: that could have fooled me, too.

“At first, I thought it was phenomenal. But my second thought was: How long before someone starts exploiting this?” says Mark Rolston when we discuss the demo. He’s the founder and Chief Creative of argodesign and an expert on human-computer interaction.

Dark interactions

“Technology should be beautiful, useful, and invisible,” reads the tagline on argodesign’s website. It’s a mantra that Rolston the designer still lives by, but one that Rolston the human is increasingly worried about, at least when it comes to the invisible part. Now that new tracking technologies and smart sensors keep popping up in our offices and streets, we often interact with machines without realizing it.

These dark interactions, as he calls them, are human-computer exchanges that happen unconsciously in the background. One of the simplest examples is the motion sensor: it senses your movements and turns on the lights or opens a door. A human-sounding digital assistant booking appointments, like Google Duplex, is obviously of a different caliber.

To be clear, the term “dark” should not be interpreted as “bad” or “gloomy”; it merely means the interactions happen without us being conscious of them. That can be wonderful in many ways, says Rolston. “The bright side is that these technologies make life a little more elegant; sometimes even magical. But we do need a trust infrastructure so those same technologies are unable to know us in ways we prefer not to be known.”

China’s very own 1984

One of the most troubling scenarios is currently playing out in China, where an evolving algorithmic surveillance system is used to keep tabs on its citizens. Recently, a Chinese fugitive was picked out of a crowd of 60,000 people at a pop concert by an AI-powered facial recognition system.

And it’s not just criminals who can be tracked in public: everybody is under surveillance, and the Chinese government has unlimited power to process the gathered data. In the city of Shenzhen, local police are already using facial recognition technology to reduce jaywalking. Large billboards show jaywalkers’ faces and family names to publicly shame them.

Will we see similar technologies in Western countries? Oh yes, says Rolston, though they will probably be implemented in a less pervasive, more contractual way.

“Think of it like this: When we go through security at the airport, we don’t necessarily enjoy the body scans and the facial recognition technology. But we suffer it for that moment. You can imagine other specific occasions in which we would temporarily tolerate a lower level of privacy.”

Garbage bins spying on you

To be fair, most smart sensors in the public sphere serve purposes other than surveillance; some don’t even gather human data. Systems that control street lights or detect when garbage bins are full won’t invade anyone’s privacy. However, when that same garbage bin sensor tracks Wi-Fi signals from people’s phones to show targeted advertisements, as happened in London in 2013, it’s a completely different story.

The same goes for the workplace, where smart sensors and other tracking devices can be very useful. Air quality and decibel levels can be measured to maintain a comfortable working environment. Smart lighting systems know when to turn the lights off to avoid wasting energy. Most office workers wouldn’t mind those systems running quietly in the background and collecting data.

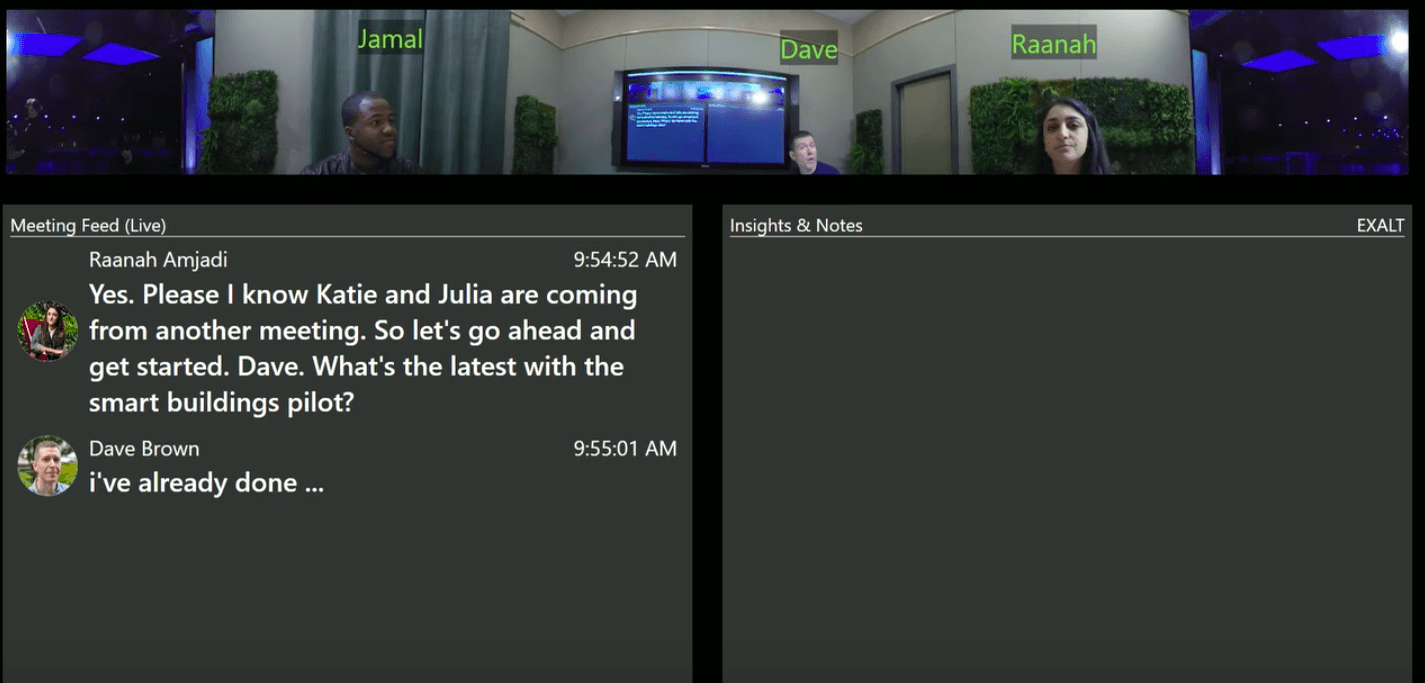

But what about technologies that can transcribe what’s said in meetings in real time? At its Build developer conference, Microsoft recently demoed a system that combines audio and video to create a live text feed of what’s said.

“Again, the technology itself is very convenient,” says Rolston. (He’s right: I’d love for technology to automatically transcribe our hour-long conversation, something I had to do manually for this article.) But, he adds, it’s also hugely exploitable: “What if some employees don’t realize it’s transcribing and start shit-talking their boss?”

Drunk on technology

The number of dark interactions we encounter on a daily basis will only increase in the coming years, making it impossible to always know which data we are sharing and for what purposes. The solution, according to Rolston, should come in the form of an off button: a piece of technology that allows us to become anonymous whenever we want.

“Our smartphones all have a ‘mute’ switch, right? Now imagine another switch that just says ‘invisible’. You switch it on and all the microphones, sensors and cameras immediately ignore you — you no longer exist in that room.”

Rolston believes more government regulation is needed (he’s keen to see how GDPR will affect American tech companies), but he says consumers need to change their ways, too.

“We are still so drunk on the free and the new — mainly because the digital market is still so young. Because of that, we set aside judgments we normally would assign to products we use.”

Rolston thinks the tech industry has some maturing to do. But what if consumers do understand the implications of using free technology, know their data is being used for the benefit of third parties, and just don’t care? Facebook was confronted with one of the biggest scandals in its history and still managed to post a 63 percent rise in profit as well as an increase in users.

Many, many stupid things

“It’s just not sustainable,” says Rolston. “I envision this era as a large bow: the arrow is currently being pulled back but will be released at some point.”

Rolston is not exactly sure what will happen once the string bounces back. “In the case of China, it’s easy to claim their surveillance system will only have negative consequences, that its society will become like Orwell’s 1984. The truth is, we just don’t know. So I guess that depends on your view of humanity.”

Despite all his talk of doom and gloom, Rolston still labels himself an optimist. “Churchill once said about the Americans: ‘You can always count on Americans to do the right thing, after they’ve tried everything else.’ I think that applies to all humans. We try many, many stupid things, but at the same time, the state of humanity is still better now than at any other time in history. So we will rise above, eventually. After having tried everything else.”


This post is brought to you by EDGE Technologies, a company specializing in developing smart, high-tech office buildings that promote workplace health.