For pedophiles, a single hashtag opened the door to one of Instagram’s seediest corners, one where images of sexually exploited children were openly traded as if they were collectibles. For anyone willing to engage, finding images of child sexual abuse was about as simple as searching for a laptop on Craigslist.

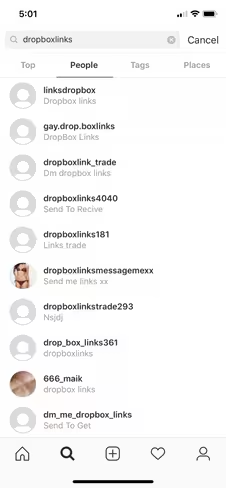

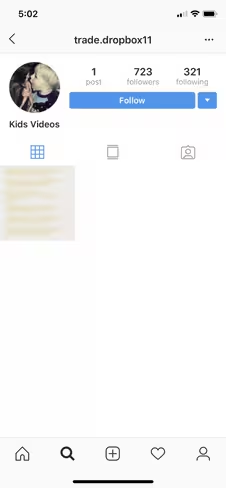

The transactions started in the open with one of several hashtags. The hashtag #dropboxlinks was one of the most popular, although variants for those seeking boys, gay, or a specific age group were prevalent. All it took was following (or searching for) the correct hashtags. Users then moved the conversation to private messages, where they allegedly swapped links to Dropbox folders containing the illicit imagery.

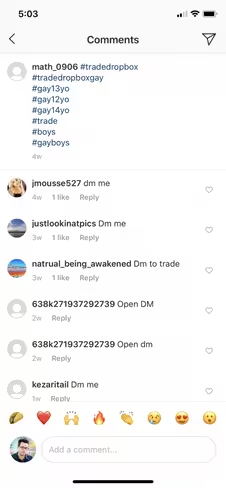

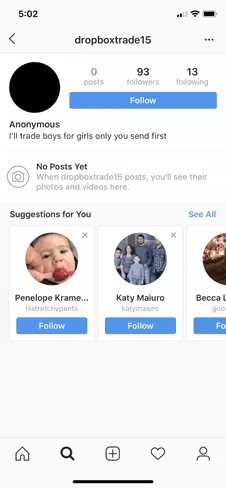

“Young boys only,” one message read. “DM me slaves,” said another. “I’ll trade, have all nude girl videos,” wrote one user. For the traders, there was only one rule: you send first. Honor among pedophiles is elusive in the Instagram age.

For its part, Instagram confirmed the problem and restricted several of the offending hashtags — though more remain. “Keeping children and young people safe on Instagram is hugely important to us,” a spokesperson told The Atlantic’s Taylor Lorenz. Instagram, though, fell short of expectations in cleaning up its mess. According to screenshots obtained by Lorenz, Instagram replied to some users, saying that the offending accounts had not violated the platform’s terms of service.

Unhappy with Instagram’s response, the teens who first uncovered the ring took matters into their own hands. Through what can only be described as weaponized meme content, the teens flooded the hashtag with thousands of new images, making it increasingly difficult for traders to find others to swap links with.

https://www.instagram.com/p/BsXjnFSg9Ba/?utm_source=ig_web_button_share_sheet

Days after the problem surfaced, the hashtags appear to be dead. The accounts trading child sexual abuse imagery, however, remain.

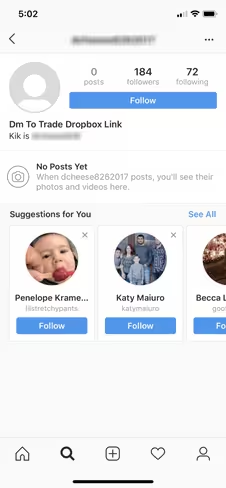

We found dozens of them in a cursory search just minutes ago. Many are attempting to move their trading operations to other platforms, like Kik and WhatsApp, leaving links in their bio for interested traders. Some are even attempting to sell their cache via PayPal or Bitcoin payments.

A Dropbox spokesperson told TNW:

“Child exploitation is a horrific crime and we condemn in the strongest possible terms anyone who abuses our platform to share it. We work with Instagram and other sites to ensure this type of content is taken down as soon as possible. Whenever we’re alerted to suspected child exploitation imagery on our platform, we act quickly to investigate it, take it down, and report it to the National Center for Missing and Exploited Children (NCMEC) who refers our reports to the appropriate authorities.”

Instagram couldn’t be reached for comment.
