The iPhone 11 and 11 Pro reveal event was all about the new lineup's impressive camera performance, Night mode, and that awfully branded slow-motion video selfie, the "slofie." But there was one other special camera feature that Apple didn't dwell on: Deep Fusion. There was a good reason for that: the feature had yet to make its way to iOS 13. Well, the wait is over.

Deep Fusion has finally arrived in the latest iteration of Apple's mobile operating system, iOS 13.2 (though only in the developer beta), and the results are making the Android fan in me very upset.

For those unfamiliar, Deep Fusion relies on the iPhone's A13 Bionic chip and its Neural Engine to perform pixel-by-pixel processing that improves texture and detail and reduces noise in less-than-ideal lighting. Apple says the feature kicks in automatically, much like its new Night mode.

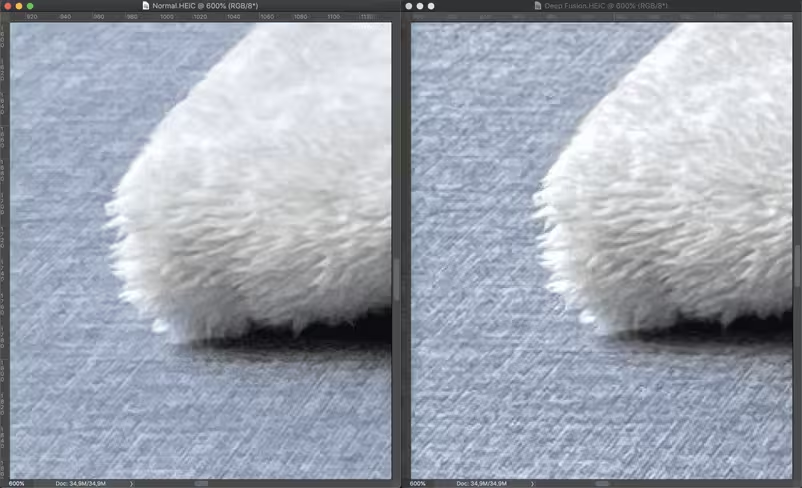

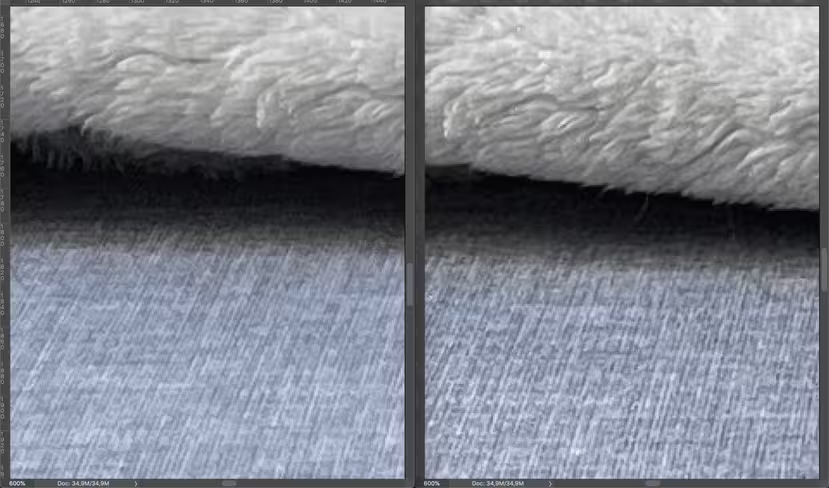

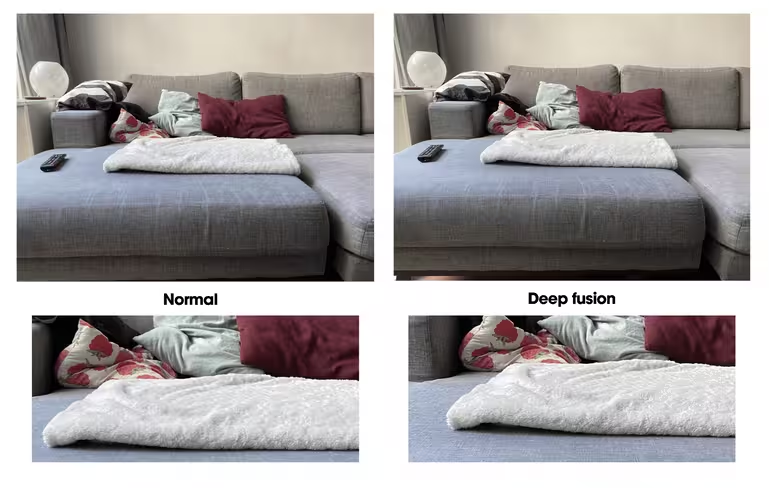

We ran some quick trials with Deep Fusion, and while the differences are hard to spot unless you zoom in, the feature makes a big difference in the finer details.

Of course, the iPhone 11's camera is already excellent to begin with, which explains why some of you might find the improvements marginal. As someone who obsesses over pixel density and sharpness, though, I'm truly amazed by the results.

Anyway, we'll let the images speak for themselves. Here we go:

We’re still in the barely noticeable range here.

But here’s what happens if we zoom in a little:

I mean, look at the amount of detail Deep Fusion manages to recover in the texture of the sofa and the blanket. Blurriness is also noticeably reduced compared to shooting in the standard mode, and the brightness and overall color tones seem to have benefited from Deep Fusion as well. These improvements should make it easier for the nitpickers among you to confidently crop images you might otherwise have binned.

You can give Deep Fusion a go by updating to the latest developer beta of iOS, 13.2. Needless to say, running developer betas comes with some risks, so if you're a regular user, you might want to wait until Apple rolls it out officially. It's not yet clear when that'll be, but it shouldn't take long.

Just a heads-up: Deep Fusion only seems to work when the "Capture Outside the Frame" setting is turned off. The trickiest part is that Apple has yet to add any UI indicating whether Deep Fusion is active. In our experience, the easiest way to switch between Deep Fusion and standard processing for now is to toggle "Capture Outside the Frame" on and off.

Yeah, I know, it's a hassle, but the results are, quite frankly, impressive. I've always been a fan of Android, but factoring in these improvements in image quality, learning to live without a fingerprint scanner doesn't seem all that bad.
