Summary: Zoom has partnered with World, Sam Altman’s biometric identity company, to let meeting participants verify they are human using World’s Deep Face technology, which cross-references iris-scanned biometric profiles with live video to display a “Verified Human” badge. The feature responds to deepfake fraud that cost businesses over $200 million in Q1 2025 alone, including a $25 million loss at engineering firm Arup, though World’s iris-scanning Orb system faces ongoing regulatory action in Spain, Germany, the Philippines, and several other countries.

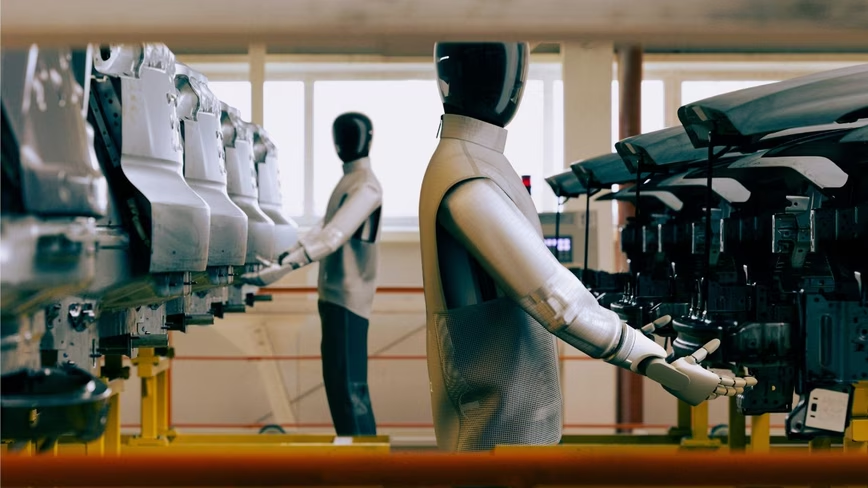

Zoom has partnered with World, the biometric identity company co-founded by Sam Altman, to let meeting participants prove they are real humans and not AI-generated deepfakes. The integration uses World’s Deep Face technology to cross-reference a participant’s live video feed against their iris-scanned biometric profile, and displays a “Verified Human” badge next to their name when the match succeeds. Hosts can enable a Deep Face waiting room that requires verification before anyone joins, and participants can request that someone verify themselves mid-call.

The feature addresses a threat that has moved from theoretical to expensive. In early 2024, engineering firm Arup lost $25 million after an employee in Hong Kong authorised a series of wire transfers during a video call in which every other participant turned out to be an AI-generated deepfake of his colleagues, including the company’s CFO. A similar attack hit a multinational firm in Singapore in 2025. Across the industry, deepfake-enabled fraud exceeded $200 million in losses in the first quarter of 2025 alone, and the average loss per corporate incident now tops $500,000.

How verification works

World’s Deep Face takes a three-pronged approach, cross-referencing three inputs: a signed image captured during the user’s original registration through World’s Orb device, a spherical biometric scanner that photographs iris patterns; a real-time face scan from the user’s phone or computer; and a live video frame visible to other meeting participants. Verification succeeds only when all three inputs match. The process runs locally on the participant’s device, and World says no personal data leaves the device.
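The all-three-must-match rule can be illustrated with a minimal sketch. This is not World’s implementation, which the company has not published in this level of detail. It assumes, purely for illustration, that each input has already been reduced to a numeric face embedding and that a “match” means cosine similarity above a threshold. The names `VerificationInputs`, `is_verified_human` and `MATCH_THRESHOLD` are hypothetical.

```python
from dataclasses import dataclass
from math import sqrt

# Hypothetical threshold: the article does not say how a "match" is scored.
MATCH_THRESHOLD = 0.9


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


@dataclass
class VerificationInputs:
    # Assumption: every image is represented as an embedding vector.
    enrolled_image: list          # signed image from the original Orb registration
    enrolment_signature_valid: bool  # result of checking that image's signature
    device_face_scan: list        # real-time face scan from the phone or computer
    meeting_video_frame: list     # live frame the other participants see


def is_verified_human(inputs, threshold=MATCH_THRESHOLD):
    """Return True only when the signature is valid and all three inputs match."""
    if not inputs.enrolment_signature_valid:
        return False
    pairs = [
        (inputs.enrolled_image, inputs.device_face_scan),
        (inputs.device_face_scan, inputs.meeting_video_frame),
        (inputs.enrolled_image, inputs.meeting_video_frame),
    ]
    return all(cosine_similarity(a, b) >= threshold for a, b in pairs)


if __name__ == "__main__":
    genuine = VerificationInputs(
        [1.0, 0.0, 0.0], True, [0.99, 0.05, 0.0], [0.98, 0.1, 0.0]
    )
    # Same enrolment and device scan, but the meeting feed shows a different face.
    spoofed = VerificationInputs(
        [1.0, 0.0, 0.0], True, [0.99, 0.05, 0.0], [0.0, 1.0, 0.0]
    )
    print(is_verified_human(genuine))  # True
    print(is_verified_human(spoofed))  # False
```

The point of the sketch is the conjunction: a feed that looks convincing on screen still fails if it does not match both the registration image and the local face scan, which is why this design does not need to decide whether the pixels are synthetic.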

This is architecturally different from the deepfake detection tools already available on Zoom’s marketplace. Products from Pindrop, Reality Defender, and Resemble AI analyse video frames for telltale signs of AI manipulation, flagging synthetic media in real time. Both Zoom and World said that because video generation models are improving rapidly, those frame-by-frame detection methods are becoming increasingly unreliable. Deep Face sidesteps the detection problem entirely by verifying the person’s identity against a biometric record rather than trying to determine whether the pixels on screen were generated by software.

The trade-off is that Deep Face requires participants to have a World ID, which means they must have visited one of World’s physical Orb devices to have their irises scanned. The network currently has around 18 million verified users across 160 countries, and roughly 1,500 active Orbs. Those users are a small fraction of Zoom’s user base, which limits the feature’s immediate utility. For most meetings, the existing frame-analysis tools will remain the practical option. Deep Face is designed for high-stakes calls where identity certainty justifies the friction of requiring biometric pre-registration.

The business case

Zoom’s spokesperson Travis Isaman described the integration as part of the company’s “open ecosystem approach, giving customers more ways to build trust into their workflows based on what matters most for their use case.” The framing is deliberate. Zoom is not endorsing World ID as its default identity layer; it is offering it as one option among several in a marketplace that already includes multiple deepfake detection and identity verification tools.

For Zoom, the partnership is defensive. The company’s revenue reached $4.67 billion in fiscal 2025, growing at a modest 3%, and its strategic challenge is to remain the default platform for business communication as competitors add AI features across the board. Zoom has responded with AI avatars, an AI-powered office suite, and cross-application AI notetakers. Adding human verification addresses a different vector: making Zoom the platform that enterprises trust for sensitive conversations. In a market where a single deepfake call can cost $25 million, that trust has a measurable commercial value.

For World, the Zoom integration is a distribution win. The company, which rebranded from Worldcoin in 2024, has struggled to move beyond crypto-adjacent early adopters. Its partnerships with Visa, Tinder, Razer, and Coinbase have expanded the contexts in which a World ID is useful, but none of those integrations create the kind of immediate, visceral demand that a corporate security use case does. If a company’s treasury team requires World ID verification for any video call involving wire transfer authorisation, that creates institutional adoption that individual consumer partnerships do not.

The privacy question

World’s Orb-based identity system has faced sustained regulatory scrutiny. Spain’s data protection authority issued a formal warning in February 2026 citing GDPR violations and insufficient data protection assessments. Germany’s Bavarian data regulator ordered the deletion of iris data in December 2024. The Philippines issued a cease-and-desist order in October 2025 for obtaining consent through financial incentives. Investigations or suspensions have occurred in Argentina, Kenya, Hong Kong, and Indonesia.

The governance frameworks emerging around biometric AI in 2026, including the EU AI Act’s high-risk classification for biometric identification systems, add further complexity. World maintains that its zero-knowledge proof architecture means verification happens without exposing personal data, and that iris images are encrypted and stored only on the user’s device. Critics argue that the collection process itself, requiring a physical visit to an Orb to have your eyes scanned, creates risks that privacy-preserving cryptography does not fully address, particularly when recruitment has disproportionately targeted lower-income communities.

For enterprises evaluating the Zoom integration, the calculus is whether the security benefit of biometric human verification outweighs the regulatory and reputational risk of requiring employees or counterparties to register with a company that multiple data protection authorities have taken action against. That calculation will differ by jurisdiction and by industry. A Wall Street trading desk conducting a $100 million deal over Zoom may decide the risk is worth it. A European public-sector organisation almost certainly will not.

What this means

The Zoom-World partnership is a marker of how far the deepfake threat has advanced. Two years ago, the Arup incident was treated as an extraordinary outlier. Today, deepfake-enabled fraud is a billion-dollar category, AI-generated video is increasingly able to defeat frame-analysis detection, and the question of whether the person on a video call is real has become a legitimate enterprise security concern.

The solution Zoom and World are proposing, biometric identity verification anchored to iris scans, works technically but introduces its own set of complications around privacy, regulatory compliance, and the barrier to adoption that physical Orb registration creates. It is a feature for specific, high-value use cases rather than a default setting for every Monday morning stand-up. But the fact that Zoom considers it worth integrating at all tells you something about where the technology landscape is heading: toward a future where proving you are human is no longer something you can take for granted, even when you are looking someone in the eye.
