Despite advances in vision technology that let machines emulate many functions of the human brain, depth perception is one thing they have yet to master. Even advanced computers cannot match the calculations of the human visual cortex.

San Francisco-based Stereolabs aims to rectify that with the new ZED Stereo Camera and software kit, giving drones, robots and other machines skills like indoor/outdoor collision avoidance, autonomous navigation and 3D mapping capabilities. This advanced stereoscopic vision equipment is now available to the mass market.

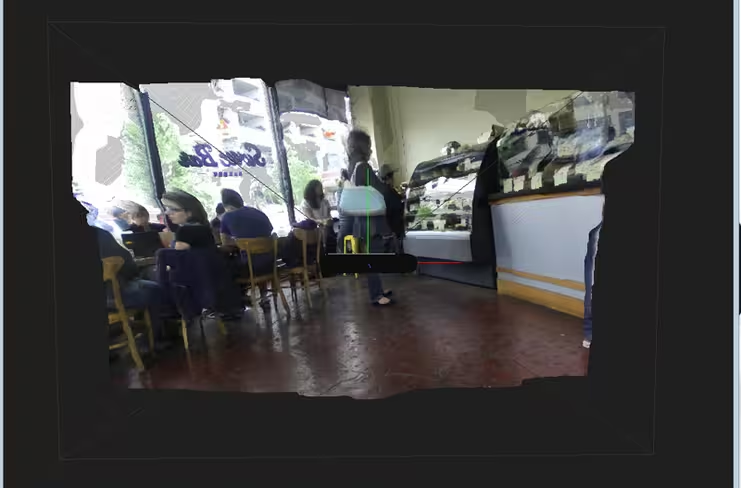

The ZED’s software is built on CUDA, Nvidia’s parallel programming model for its graphics cards. It lets computers running the camera’s accompanying software process depth maps in real time at resolutions up to 4,416 x 1,242 pixels at 15 frames per second, with speeds of up to 120fps at lower resolutions.

“The commercial market for this technology is growing quickly with the advent of self-driving cars and drones,” Marc Beudet, Stereolabs’ product manager, told TNW. “Tech hobbyists, people who like to tinker with Raspberry Pi or a Jetson TK1, are the market for this product. Even today, drones need human operators.”

The ZED Stereo Camera, a depth sensor based on passive stereovision, outputs high-resolution side-by-side video over USB 3.0 as two synchronized streams, one left and one right. Using the ZED SDK, the host PC’s graphics processing unit (GPU) computes the depth map from the side-by-side video in real time.
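
To make the side-by-side layout concrete, here is a minimal Python sketch of how a program might split one such frame into its left and right halves. It does not use the ZED SDK; the function name `split_side_by_side` and the list-of-rows frame representation are assumptions made for illustration only.

```python
def split_side_by_side(frame):
    """Split a side-by-side frame (a list of pixel rows) into (left, right).

    Hypothetical helper for illustration; not part of the ZED SDK.
    """
    if not frame:
        return [], []
    width = len(frame[0])
    if width % 2 != 0:
        raise ValueError("side-by-side frame width must be even")
    half = width // 2
    left = [row[:half] for row in frame]    # left camera image
    right = [row[half:] for row in frame]   # right camera image
    return left, right


# A tiny 4 x 2 stand-in for a full side-by-side frame.
frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
]
left, right = split_side_by_side(frame)
```

At the top resolution quoted above, the same split would turn a 4,416-pixel-wide frame into two 2,208-pixel-wide images.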

Dual sensors on the front of the device feed two high-definition videos to the software, which then compares the images to produce a complete “depth map” of every object up to 20 meters away in real time.
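
The comparison rests on a standard stereo-vision relationship: the farther an object is, the less it shifts between the left and right images. The Python sketch below shows that textbook formula, depth = focal length x baseline / disparity. It is not Stereolabs’ implementation, and the focal length and baseline values used here are illustrative assumptions, not published ZED specifications.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Textbook pinhole-stereo depth: Z = f * B / d.

    disparity_px    -- horizontal shift of a point between left and right images, in pixels
    focal_length_px -- focal length in pixels (assumed value, not a ZED spec)
    baseline_m      -- distance between the two sensors in meters (assumed value)
    """
    if disparity_px <= 0:
        # No measurable shift: treat the point as infinitely far away.
        return float("inf")
    return focal_length_px * baseline_m / disparity_px


# Illustrative numbers only.
near = depth_from_disparity(disparity_px=42.0, focal_length_px=700.0, baseline_m=0.12)
far = depth_from_disparity(disparity_px=4.2, focal_length_px=700.0, baseline_m=0.12)
```

With these assumed numbers, a tenfold smaller disparity corresponds to a tenfold greater distance, which is why small pixel shifts matter so much at long range.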

With the raw depth map the ZED produces, developers can use the software to manipulate data, including displaying a 3D “point cloud” visualization of recorded objects and connecting with Intel’s OpenCV library for motion tracking and gesture recognition.
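
As a rough idea of what a “point cloud” involves, the sketch below back-projects a depth map into 3D points using the standard pinhole camera model. It is plain Python rather than ZED SDK or OpenCV code, and the function name and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical values chosen for the example.

```python
import math


def depth_map_to_point_cloud(depth_map, fx, fy, cx, cy):
    """Back-project a depth map (rows of depths in meters) to (x, y, z) points.

    fx, fy -- focal lengths in pixels; cx, cy -- principal point in pixels.
    All four are assumed inputs for illustration, not values from the ZED SDK.
    """
    points = []
    for v, row in enumerate(depth_map):
        for u, z in enumerate(row):
            # Skip pixels with no valid depth measurement.
            if z <= 0 or not math.isfinite(z):
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


depth_map = [
    [2.0, 0.0],
    [float("inf"), 4.0],
]
points = depth_map_to_point_cloud(depth_map, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
```

Pixels with zero or infinite depth are dropped, so the cloud contains only points the camera could actually measure.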

How would a drone, for example, use ZED to comprehend where objects are and how to avoid them? First, the depth map would be computed on the host computer. Then the drone owner or manufacturer would write a separate program that works with the depth map to determine where the drone is relative to surrounding objects. That program then figures out what obstacles are in the drone’s path and determines how much it has to steer to avoid them. After that, it tells the drone to steer, the same way a person with a controller would.
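
A heavily simplified version of such a program might look like the Python sketch below. It assumes the depth map is already available as rows of distances in meters, splits the view into left, center and right thirds, and steers toward the side with more clearance when something in the center is too close. The function name, the 2-meter safety threshold and the three-way decision are all assumptions for illustration; a real drone would need far more than this.

```python
def choose_steering(depth_map, safe_distance_m=2.0):
    """Return 'forward', 'left' or 'right' from a depth map in meters.

    Hypothetical decision rule for illustration only.
    Depth values <= 0 are treated as missing measurements and ignored.
    """
    width = len(depth_map[0])
    if width < 3:
        raise ValueError("depth map must be at least 3 pixels wide")
    third = width // 3

    def nearest(col_start, col_end):
        # Closest valid depth within a vertical band of columns.
        values = [z for row in depth_map for z in row[col_start:col_end] if z > 0]
        return min(values) if values else float("inf")

    left = nearest(0, third)
    center = nearest(third, width - third)
    right = nearest(width - third, width)

    if center >= safe_distance_m:
        return "forward"
    # Path ahead is blocked: turn toward the side with more room.
    return "left" if left >= right else "right"


blocked = [
    [5.0, 1.0, 8.0],
    [6.0, 1.5, 9.0],
]
decision = choose_steering(blocked)
```

The returned string stands in for the steering command that would otherwise come from a human holding the controller.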

It sounds hard, and it is (the technology took seven years to develop), but ZED’s creators are old hands in this field. Stereolabs specializes in stereoscopic 3D depth sensing for entertainment and industrial markets and created the tech behind 3D coverage of the London Olympics, the French Tennis Open and 3D Hollywood feature films like Avatar.

While drones and self-driving cars are popular uses now, Beudet also sees the product being used for humanitarian purposes, like accessing disaster zones to search for survivors.

The ZED SDK requires a dual-core processor that runs at 2.3GHz or faster, 4GB of RAM, an Nvidia CUDA-compatible graphics card with a compute capability of 2.0 or better, a USB 3.0 port, and Windows 7, 8 or 8.1. For high-resolution footage, Stereolabs recommends using an SSD with transfer speeds of 250MB/s or faster for storage.
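
For anyone checking a machine against that list, the requirements can be written down as a small Python check. The thresholds come from the list above; the function name and the shape of the `machine` dictionary are assumptions for the example, and nothing here queries real hardware.

```python
SUPPORTED_OS = ("Windows 7", "Windows 8", "Windows 8.1")


def unmet_requirements(machine):
    """Return the names of the stated ZED SDK requirements a machine fails.

    `machine` is a hypothetical dict describing the PC; it is filled in by hand.
    """
    unmet = []
    if machine["cpu_cores"] < 2:
        unmet.append("cpu_cores")
    if machine["cpu_ghz"] < 2.3:
        unmet.append("cpu_ghz")
    if machine["ram_gb"] < 4:
        unmet.append("ram_gb")
    # Compute capability is compared as (major, minor), e.g. (2, 0) for 2.0.
    if tuple(machine["cuda_compute_capability"]) < (2, 0):
        unmet.append("cuda_compute_capability")
    if not machine["usb3"]:
        unmet.append("usb3")
    if machine["os"] not in SUPPORTED_OS:
        unmet.append("os")
    return unmet


good_pc = {
    "cpu_cores": 4,
    "cpu_ghz": 3.0,
    "ram_gb": 8,
    "cuda_compute_capability": (3, 0),
    "usb3": True,
    "os": "Windows 8.1",
}
old_pc = dict(good_pc, ram_gb=2, cuda_compute_capability=(1, 3))
```

An empty list means the machine meets every stated minimum; otherwise the list names what falls short.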

ZED’s $449 developer kit includes the ZED stereo camera and tripod, a USB stick with the software development kit (SDK) and hardware drivers, a 2-meter USB 3.0 cable and a quick start guide.

Units have been available for pre-order and are shipping today.
