While blame for the spread of fake news has often fallen on social media platforms like Facebook and Twitter, they’re hardly alone. YouTube in particular is hugely influential, with five billion videos watched every day. Earlier this year, Chief Product Officer Neal Mohan said the average mobile viewing session lasts “more than 60 minutes.”

Imagine, then, how such content can shape viewer opinions, especially when fake news has sometimes dominated trending stories.

YouTube is finally stepping up and making improvements, rolling out some changes to fight fake news and unfounded conspiracies, while “making authoritative sources readily accessible.” Some of these we’ve heard about before, others are new, but all are for the better.

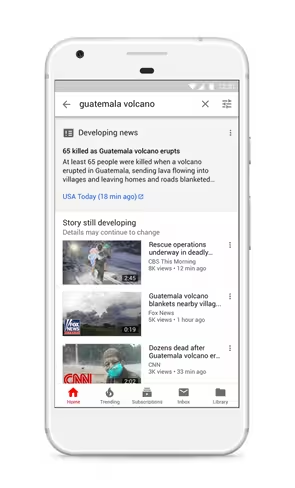

You’re going to start seeing links to breaking news articles in your searches. Yes, articles, not videos. Simply put, it takes longer to verify and produce high-quality video than articles. In YouTube’s own words:

> After a breaking news event, it takes time to verify, produce and publish high-quality videos. Journalists often write articles first to break the news rather than produce videos. That’s why in the coming weeks in the U.S. we will start providing a short preview of news articles in search results on YouTube that link to the full article during the initial hours of a major news event, along with a reminder that breaking and developing news can rapidly change.

That last sentence is particularly important, because videos have far more permanence than articles. When I write a breaking story, I’m able to easily make as many updates as necessary for accurate reporting.

On YouTube, making a correction usually amounts to a small blurb in the description that nobody is going to read anyway. You can’t just edit your video with more accurate information. If you re-upload the video, you lose your views. And even if you could make those edits without a negative impact, video is time-consuming enough that I imagine most creators would be dissuaded from doing so regularly.

That’s why getting it right the first time matters. This change helps make sure that when people search YouTube for information on a breaking story, they can easily access reputable information rather than just watching a poorly reported video from whoever wants to rack up views first.

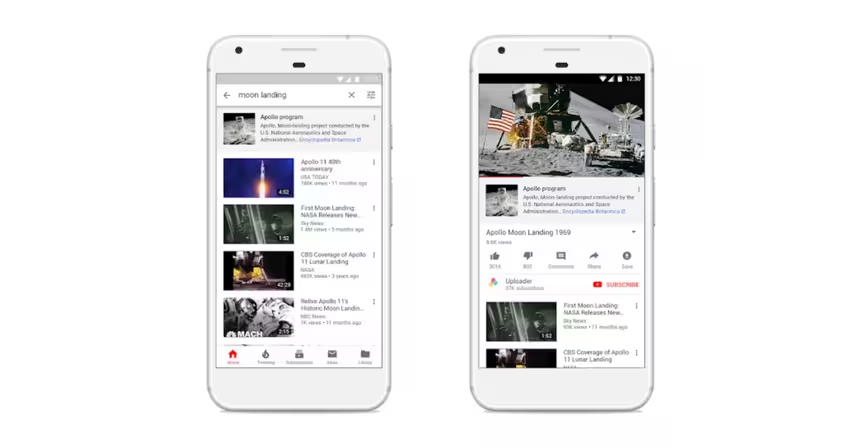

In a similar vein, YouTube is addressing the epidemic of conspiracy theories and other misinformation by providing proper context. Starting today, users will see links to third parties such as Wikipedia and Encyclopædia Britannica when they come across “videos on a small number of well-established historical and scientific topics that have often been subject to misinformation, like the moon landing and the Oklahoma City Bombing.” I really hope flat-earthers are included in that list.

Other updates include sharing more content from local news, investments in digital literacy education for teens, and promises of more improvements on the way. For more information, check out the company’s blog post.

The changes will likely come with their own share of controversy. I wouldn’t be surprised to see some fake news creators complaining their videos are being demeaned by YouTube’s new policies. But freedom of speech isn’t freedom from consequences. If you spread blatant misinformation, you deserve to be called out.
