Initially, the internet was built for text, and technology has learned how to read at a pretty advanced level. However, the web has become increasingly visual, and tech has not fully kept up: the internet can read, but it can’t see.

In 2016, that will change. Image recognition and visual search have made impressive strides and will become adult technologies. The vast number of web companies that have acquired image-recognition startups proves that expectations are high.

So let’s look at where we are and where we’re going.

Technology is ready for it

When the media report on visual search and image recognition, it’s often about the humorous side effects of looking at the world through the eyes of AI.

Take DroneSweetie, a Twitter bot that tries to describe photos of drones using a Google AI. The resulting descriptions range from “a helicopter spins up its blades on the deck of an aircraft carrier” to more absurd ones such as “a green blue white and black peacock and a plate and silverware.”

It’s funny, but also outdated.

The artificial intelligence that DroneSweetie uses gained fame when it taught itself to recognize cats. Today, it’s capable of recognizing photographs and videos with far greater accuracy than before, reaching near-human levels of understanding.

Last year, an artist used the tech to annotate a walk through Amsterdam in real time. It proves how close the tech is to “seeing” the world. It still makes mistakes, but what’s remarkable is how often it’s right: “A man holding a hot dog in a bun with mustard and ketchup” is 100 percent correct.

According to Li Deng, a leading deep-learning expert at Microsoft Research, most quantum leaps in machine learning have been made. In specific verticals, image recognition software now achieves human accuracy levels, with error rates lower than 4.7 percent.

Li Deng argues that we shouldn’t chase marginal improvements in accuracy anymore – researchers should focus on solving real-world problems from now on.

Visual search and image recognition will be huge technological drivers in verticals like medical imagery, retail and e-commerce, self-driving cars and even smart cities.

The Android app BlindTool is a good example. It tells people with vision impairment what is standing in front of them. As one tester put it, “My phone is seeing for me.”

Thanks to image recognition in public trash bins, smart cities will be able to empty only the full ones, saving garbage collectors a lot of time and reducing CO2 emissions.

The National Institutes of Health is working on a software system that will recognize pills. Unidentified and misidentified prescription pills present challenges for patients and professionals. For the nine out of ten U.S. citizens over age 65 who take more than one prescription pill, misidentifying those pills can result in adverse drug events that affect health or cause death.

Business is ready for it

Big tech is aware of the opportunity. Over the past few years, Facebook, Yahoo, Dropbox, Google and Pinterest acquired startups that are working on image recognition and deep learning. By mid-2015, Facebook’s algorithm was strong enough to identify users 83 percent of the time even when their faces weren’t visible.

Apart from image recognition, there’s an equally important, if less well-known, evolution in visual search. Image recognition converts images to information (image to text). Visual search, on the other hand, recognizes products in an image and then matches them to similar items (image to image).
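To make the image-to-image idea concrete, here is a minimal sketch of how a visual search could rank catalog items against a query image. It assumes each image has already been reduced to a short list of numbers (a feature vector) by some trained model; the vectors, the catalog entries, and the function names below are hypothetical and purely illustrative, not taken from any of the products mentioned in this article.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def visual_search(query_vector, catalog, top_k=2):
    """Rank catalog items by similarity to the query and return the top names."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in catalog.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]


# Hypothetical feature vectors; a real system would compute these from pixels.
CATALOG = {
    "red sari": [0.9, 0.1, 0.8],
    "red dress": [0.8, 0.2, 0.3],
    "blue jeans": [0.1, 0.9, 0.2],
}

# Hypothetical vector for a photo the shopper uploaded.
results = visual_search([0.85, 0.15, 0.75], CATALOG)
print(results)
```

No text is involved at any point: the query is an image, and the answer is a list of other images (here, the two red garments rank ahead of the jeans). Image recognition, by contrast, would end with a label or caption.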

Pinterest is already using visual search, with search results now showing buy buttons — a feature introduced last summer.

Flipkart, India’s leading e-commerce marketplace, also offers visual search. The SaaS they use is able to distinguish pieces of western wear, as well as traditional Indian wear like saris, based on their individual color, patterns, silhouette, cut and more.

Expect to see publishers increasingly adopt visual search: they are sitting on tons of visual content but they struggle with monetization. Visual search allows them to make money directly from content (because it’s searchable and leads to similar products to buy), instead of selling intrusive advertising.

The same goes for video.

Online video is the fastest-growing category of internet advertising, and forecasts say it will grow by 22.5 percent this year. Consumers are tired of typing text queries into their mobile devices, and equally tired of intrusive advertising. Instead, they expect the content on their devices to be easily actionable.

Consumers are ready for it

An interesting application of this video recognition technology is in second screen experiences. According to a report, 87 percent of consumers use a second screen as they flip channels. One of the reasons is that they want to know more about what they are watching on TV, and if possible, see where they can buy it.

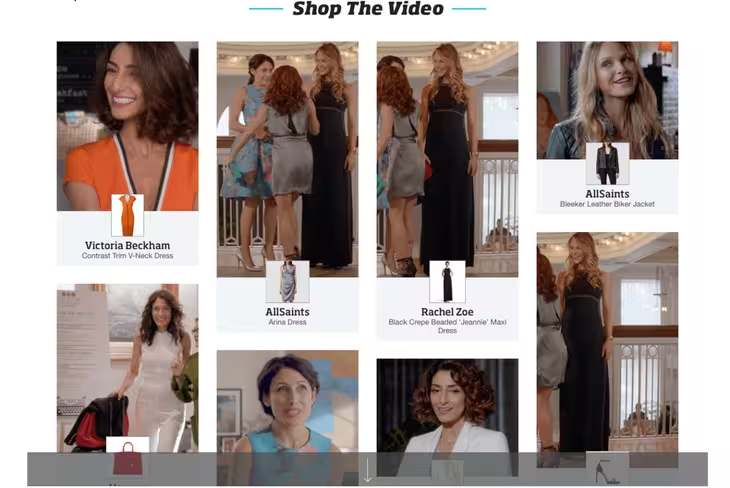

For a very early idea of what’s in store, check The Lookbook, launched by BravoTV. It features fashion and beauty content from the series ‘The Girlfriend’s Guide to Divorce’ – and it comes with a buy button.

“We get a lot of inquiries asking about the fashion and styles of what viewers see on air,” said Aimee Viles, senior VP of emerging media at Bravo parent NBC. “So we saw a great opportunity.”

The tech behind The Lookbook is driven by the startup The Take, which uses human curation with a sprinkle of tech to offer shoppable, clickable images to viewers.

Imagine this being driven by intelligent image recognition very soon – and expect consumers to adopt it eagerly, because they vastly prefer this type of intent-driven ad click to intrusive popups.

We’ve never had an inventory this big

Finally, the internet has really become the visual web.

Every second, about 800 pictures are uploaded to Instagram, and 350 million a day are uploaded to Facebook. Every minute, about 300 hours of video are uploaded to YouTube. We are drowning in visual content, and yet we continue to create more. All that video and photo content is an unlit part of the internet, getting harder to find and unlock.

Dropbox’s VP of Engineering, Aditya Agarwal, comments, “The rate at which we’re all creating memories is going up so quickly that the ability to organize, curate, and make sense of the memories we’re accumulating is a huge opportunity.”

Images are seldom indexed well – let alone the content of the images. Joe Veix refers to this as “the lonely web” – a place where images with titles like 085772.jpeg and videos with names like 5115d7.wmv go to die, never to be seen by anyone.

It won’t be long before image recognition, visual search, and AI are able to see and understand all this information, and I expect this shift to start this year. We will have visual search that approaches human-like levels of indexing and finding information. And it will blow our minds!

The experience will be straight from Clarke’s famous third law – “Any sufficiently advanced technology is indistinguishable from magic.”
