What if I told you that, just like modern VR was born from smartphone technology, the next generation of smart homes will share technology with autonomous vehicles? The centerpiece is machine-learning-based activity prediction. We’ll get to how that works and what it means, but first, let’s look at what’s wrong with today’s smart homes.

Smart homes today consist of smart devices connected to one or more hubs, often with voice interfaces via smart speakers or smartphones. There may be cameras, but they are largely for monitoring and security. Turning on lights with your voice feels magical at first, and remotely checking devices like thermostats is useful. We’ll call current smart homes “Class 1”: a collection of internet-connected devices that respond to direct commands but otherwise do little on their own.

There’s a problem with Class 1 homes: operational complexity grows with each new device. New voice commands. New device names. New errors. Once the novelty wears off, that growing complexity undercuts the value of the smart home and makes it nearly unusable for older generations who didn’t grow up with computers.

But there’s a solution: “Class 2” smart homes, built on new technologies that are each complex yet combine into a system that stays simple to use, even as the device count grows.

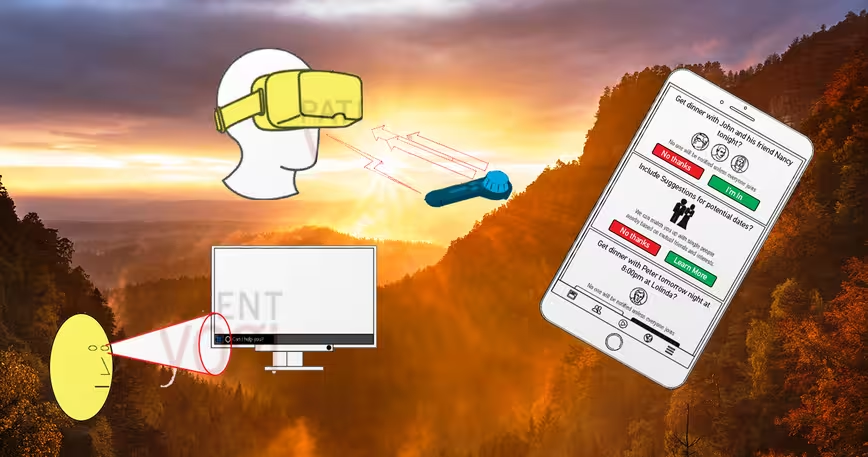

Class 2 homes will be distinguished by always-on predictive assistance: a system that enhances the day-to-day interactions that make up our relationship with where we live. Unlike current smart homes, where we rely on devices (like smartphones) for control, Class 2 homes will have built-in interfaces using cameras, displays and speakers. You’ll express intent with movements, gaze, gestures and voice, with no wake words like “Alexa” required.

Class 2 homes will learn and map your house, including inanimate objects like the 19th-century heirloom dresser, making “dumb” objects interactive via computer vision (and possibly robotic assistance).

Why predictive?

When you do things at home, your intent is conveyed by your location, movements, gaze, gestures and, quite rarely, your voice.

Imagine a Class 2 home that measures these supplemental signals in addition to your voice. Now imagine this home is set up for your grandparents. Grandma won’t need to memorize the name of the den lights; she can simply say, “Turn on the lights.” Meanwhile, if she stays in the den too long, her home can notice that she left the stove on in the kitchen and either remind her or turn the stove off itself. Through automatic activity recognition, the home could also remind Grandpa to water the plants by the front door, having learned that he has done so every week until now. No complex setup. No syntax to remember. For older generations, this Class 2 home is, finally, simpler to operate than the good old-fashioned way.
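To make the two grandparent scenarios concrete, here is a minimal rule-based sketch. It assumes the home already knows which room each resident is in, when they last left the kitchen, and when it last recognized a given activity. The names `StoveState`, `stove_action` and `routine_overdue`, the 10-minute limit and the 1.5× slack factor are all hypothetical, chosen for illustration; the article envisions a home that learns this behavior, and the hard-coded thresholds here only stand in for that.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List

# Hypothetical threshold for an unattended stove; a learned system would tune this.
UNATTENDED_LIMIT = timedelta(minutes=10)


@dataclass
class StoveState:
    stove_on: bool
    person_room: str           # room where the resident was last seen
    left_kitchen_at: datetime  # when the resident last left the kitchen


def stove_action(state: StoveState, now: datetime, auto_off: bool = False) -> str:
    """Return "none", "remind" or "turn_off" for a possibly unattended stove."""
    if not state.stove_on or state.person_room == "kitchen":
        return "none"
    if now - state.left_kitchen_at < UNATTENDED_LIMIT:
        return "none"
    return "turn_off" if auto_off else "remind"


def routine_overdue(past_events: List[datetime], now: datetime, slack: float = 1.5) -> bool:
    """Flag a habitual activity (e.g. watering plants) as overdue.

    The typical interval is the median gap between past recognized
    occurrences; the activity counts as overdue once more than
    `slack` times that gap has passed since the last occurrence.
    """
    if len(past_events) < 3:
        return False  # too little history to infer a habit
    events = sorted(past_events)
    gaps = [later - earlier for earlier, later in zip(events, events[1:])]
    typical = median(gaps)  # statistics.median works on timedelta values
    return now - events[-1] > typical * slack
```

With four weekly watering events on record, `routine_overdue` stays quiet on the day the next watering is due and fires once about a week and a half has passed, which leaves Grandpa some slack before the home nudges him.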

For younger generations, the Class 2 home enhances ordinary tasks as if you were Batman with Alfred in your earpiece. For instance, you might find a few ingredients in your fridge, gesture at them and ask, “What can I make?” A recipe for an exotic dish appears on a display. Because your smart home already knows what is missing from your pantry, a doorbell ring alerts you to the arrival of the rare spice needed for the finishing touch.

So how does this all work?

Remember the three cutting-edge technologies we referenced in the title? Here they are: visual perception, semantics, and, most importantly, activity prediction.

Let’s look at each one.

- Visual Perception and Mapping: Class 1 smart homes largely rely on audio assistants for their capabilities. These assistants analyze around 44,000 audio samples per second to understand commands. While that seems impressive, it pales next to the billions of pixels a Class 2 smart home continuously consumes to inform its model of your home. The compute required for this processing exceeds that inside a fleet of the most powerful self-driving cars.

- Semantics and Activity Recognition: While our homes may appear static, the people and situations within them are highly varied and constantly changing. Identifying, classifying and tracking this ever-growing set of people and situations requires machine learning that goes beyond today’s ML classifiers. In particular, combining an understanding of people (and, in homes, pets) with what they are doing requires a newer branch of ML: activity recognition. For instance, if you’re on the couch and looking toward a television, you’re probably watching TV. Beyond that, the system must learn not only which activities people do, but also who in the home does which activities.

- Activity Prediction: This is it: the cornerstone of the Class 2 smart home. It is also the most sophisticated element, and making it work requires integrating research at the cutting edge of machine learning. While activity recognition layers new classification tasks onto already multi-factor problems, activity prediction goes a step further, drawing on complex webs of learned patterns, behaviors and other contextual information unique to each person and scenario. This requires an enormous amount of training data. It took Microsoft 300,000 images to train the original Kinect to track human body motion from a single frame; understanding and predicting activities will take orders of magnitude more.
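The difference between recognizing an activity and predicting the next one can be shown with a deliberately tiny sketch. It swaps in two simple stand-ins, not the large-scale machine learning described above: a hand-written lookup table for recognition (mirroring the couch-plus-TV example) and a per-person first-order Markov model for prediction, which simply counts which activity tends to follow which. The rule table, activity labels and the `recognize` and `NextActivityPredictor` names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional, Tuple

# Hypothetical hand-written rules standing in for a trained recognition model:
# (location, gaze target) -> activity label.
RECOGNITION_RULES = {
    ("couch", "tv"): "watching_tv",
    ("kitchen", "stove"): "cooking",
    ("front_door", "plants"): "watering_plants",
}


def recognize(location: str, gaze: str) -> str:
    """Map perceived signals to an activity label, or "unknown"."""
    return RECOGNITION_RULES.get((location, gaze), "unknown")


class NextActivityPredictor:
    """First-order Markov model of activity sequences, kept separately per person,
    since different household members follow different routines."""

    def __init__(self) -> None:
        # person -> previous activity -> Counter of observed next activities
        self.transitions: Dict[str, Dict[str, Counter]] = defaultdict(
            lambda: defaultdict(Counter)
        )

    def observe(self, person: str, sequence: List[str]) -> None:
        """Record every consecutive (previous, next) pair in a recognized sequence."""
        for prev, nxt in zip(sequence, sequence[1:]):
            self.transitions[person][prev][nxt] += 1

    def predict(self, person: str, current: str) -> Optional[Tuple[str, float]]:
        """Return (most likely next activity, its empirical probability), or None."""
        counts = self.transitions.get(person, {}).get(current, Counter())
        total = sum(counts.values())
        if total == 0:
            return None  # never seen this person doing this activity
        activity, n = counts.most_common(1)[0]
        return activity, n / total
```

For example, after observing Grandpa go from breakfast to watering the plants on two mornings and from breakfast to reading on a third, `predict("grandpa", "breakfast")` returns `("watering_plants", 2/3)`. Keying the counts by person reflects the point that the system must learn who does what; a real system would replace both pieces with learned models over far richer signals and far more data.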

Does any of this sound familiar? Surprisingly, these are the same underlying technologies that are starting to power self-driving cars. Roadways and obstacles are mapped, vehicles and pedestrians are classified and their activities recognized, and self-driving systems predict what will happen next to choose the best route and avoid unsafe situations.

We’re not far from bringing these technologies into the home. Compute power is increasing at an incredible pace, consumers can now map their homes in 3D, and home robots are beginning to see consumer adoption.

Complex technology aside, none of this will matter if it doesn’t simplify life for everyone. How long until that happens? You’ll know the Class 2 smart home has arrived when Grandma doesn’t call you for smart home tech support, but instead tells you how to get it to do something you didn’t even know was possible.
