Religious wars have been a cornerstone in tech. Whether it’s debating about the pros and cons of different operating systems, cloud providers, or deep learning frameworks — a few beers in, the facts slide aside and people start fighting for their technology like it’s the holy grail.

Just think about the endless talk about IDEs. Some people prefer Visual Studio, others use IntelliJ, and others still stick to plain old editors like Vim. There’s a never-ending debate, half-ironic of course, about what your favorite text editor might say about your personality.

Similar wars seem to be flaring up around PyTorch and TensorFlow. Both camps have troves of supporters. And both camps have good arguments to suggest why their favorite deep learning framework might be the best.

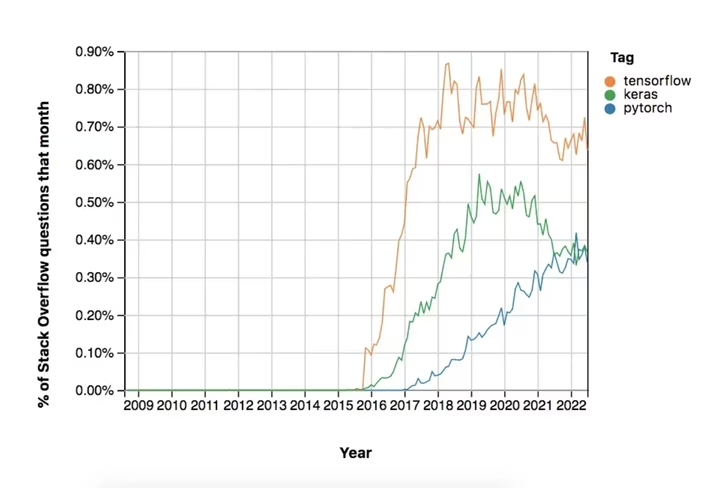

That being said, the data speaks a fairly simple truth. TensorFlow is, as of now, the most widespread deep learning framework. It gets almost twice as many questions on Stack Overflow every month as PyTorch does.

On the other hand, TensorFlow hasn’t grown since around 2018. PyTorch, by contrast, had been steadily gaining traction right up to the day this post was published.

For the sake of completeness, I’ve also included Keras in the figure below. It was released at around the same time as TensorFlow. But, as one can see, it’s tanked in recent years. The short explanation for this is that Keras is a bit simplistic and too slow for the demands that most deep learning practitioners have.

Stack Overflow traffic for TensorFlow might not be declining rapidly, but it’s declining nevertheless. And there are reasons to believe that this decline will become more pronounced in the next few years, particularly in the world of Python.

PyTorch feels more pythonic

Developed by Google, TensorFlow might have been one of the first frameworks to show up to the deep learning party in late 2015. However, the first version was rather cumbersome to use — as many first versions of any software tend to be.

That is why Meta started developing PyTorch as a means to offer pretty much the same functionality as TensorFlow while making it easier to use.

The people behind TensorFlow soon took note of this, and adopted many of PyTorch’s most popular features in TensorFlow 2.0.

A good rule of thumb is that you can do anything that PyTorch does in TensorFlow. It will just take you twice as much effort to write the code. TensorFlow is less intuitive and still feels quite un-pythonic, even today.

PyTorch, on the other hand, feels very natural to use if you enjoy using Python.

PyTorch has more available models

Many companies and academic institutions don’t have the massive computational power needed to build large models. Size is king, however, when it comes to machine learning; the larger the model the more impressive its performance is.

With Hugging Face, engineers can take large, trained and tuned models and incorporate them into their pipelines with just a few lines of code. However, a staggering 85% of these models can only be used with PyTorch. Only about 8% of Hugging Face models are exclusive to TensorFlow. The remainder are available for both frameworks.
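To make those shares concrete, here is a small back-of-the-envelope calculation. It uses only the two percentages quoted above, and the function name `framework_coverage` is made up for this illustration. The figures are approximate, so treat the result as a rough sketch rather than an exact count.

```python
# Shares of Hugging Face models by framework support, as quoted in the article.
# Both numbers are approximate ("about 8%"), so the results are rough too.
PYTORCH_ONLY = 85      # percent of models that only work with PyTorch
TENSORFLOW_ONLY = 8    # percent of models that only work with TensorFlow


def framework_coverage(pytorch_only, tensorflow_only):
    """Return (both, usable_with_pytorch, usable_with_tensorflow) in percent.

    Hypothetical helper: assumes every model falls into exactly one of the
    three groups (PyTorch-only, TensorFlow-only, or available for both).
    """
    both = 100 - pytorch_only - tensorflow_only
    return both, pytorch_only + both, tensorflow_only + both


both, with_pytorch, with_tensorflow = framework_coverage(PYTORCH_ONLY, TENSORFLOW_ONLY)
print(f"Available for both frameworks: {both}%")
print(f"Usable with PyTorch: {with_pytorch}%")
print(f"Usable with TensorFlow: {with_tensorflow}%")
```

Under these assumptions, roughly 7% of models work with both frameworks, which leaves PyTorch users with access to about 92% of the catalog and TensorFlow users with about 15%.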

This means that if you’re planning to use large models, you’d better stay away from TensorFlow or invest heavily in compute resources to train your own model.

PyTorch is better for students and research

PyTorch has a reputation for being appreciated more by academia. This is not unjustified; three out of four research papers use PyTorch. Even among those researchers who started out using TensorFlow — remember that it arrived earlier to the deep learning party — the majority have migrated to PyTorch now.

These trends are staggering and persist despite the fact that Google has quite a large footprint in AI research and mainly uses TensorFlow.

What’s perhaps more striking about this is that research influences teaching, and therefore defines what students might learn. A professor who has published the majority of their papers using PyTorch will be more inclined to use it in lectures. Not only are they more comfortable teaching and answering questions regarding PyTorch; they might also have stronger beliefs regarding its success.

College students might therefore gain far more insight into PyTorch than into TensorFlow. And, given that the college students of today are the workers of tomorrow, you can probably guess where this trend is going…

PyTorch’s ecosystem has grown faster

At the end of the day, software frameworks only matter insofar as they’re players in an ecosystem. Both PyTorch and TensorFlow have quite developed ecosystems, including repositories for trained models other than HuggingFace, data management systems, failure prevention mechanisms, and more.

It’s worth stating that, as of now, TensorFlow has a slightly more developed ecosystem than PyTorch. However, keep in mind that PyTorch showed up later to the party and has seen considerable user growth over the past few years. One can therefore expect that PyTorch’s ecosystem might outgrow TensorFlow’s in due time.

TensorFlow has the better deployment infrastructure

As cumbersome as TensorFlow might be to code, once the code is written, it is a lot easier to deploy than PyTorch. Tools like TensorFlow Serving and TensorFlow Lite make deployment to cloud, servers, mobile, and IoT devices happen in a jiffy.

PyTorch, on the other hand, has been notoriously slow in releasing deployment tools. That being said, it has been closing the gap with TensorFlow quite rapidly as of late.

It’s hard to predict at this point in time, but it’s quite possible that PyTorch might match or even outgrow TensorFlow’s deployment infrastructure in the years to come.

TensorFlow code will probably stick around for a while because it’s costly to switch frameworks after deployment. However, it’s quite conceivable that newer deep learning applications will increasingly be written and deployed with PyTorch.

TensorFlow is not all about Python

TensorFlow isn’t dead. It’s just not as popular as it once was.

The core reason for this is that many people who use Python for machine learning are switching to PyTorch.

But Python is not the only language out there for machine learning. It’s the O.G. of machine learning, and that’s the only reason why the developers of TensorFlow centered its support around Python.

These days, one can use TensorFlow with JavaScript, Java, and C++. The community is also starting to develop support for other languages like Julia, Rust, Scala, and Haskell, among others.

PyTorch, on the other hand, is very centered around Python — that’s why it feels so pythonic after all. There is a C++ API, but there isn’t half the support for other languages that TensorFlow offers.

It’s quite conceivable that PyTorch will overtake TensorFlow within Python. On the other hand, TensorFlow, with its impressive ecosystem, deployment features, and support for other languages, will remain an important player in deep learning.

Whether you choose TensorFlow or PyTorch for your next project depends mostly on how much you love Python.

This article was written by Ari Joury and was originally published on Medium. You can read it here.
