A leaked list of Clearview AI’s clients shows that the controversial company’s facial recognition software has spread way beyond law enforcement, into household names ranging from the NBA to Walmart.

Clearview claims to have scraped more than three billion images from websites and social media platforms into a database that police can use to match with photos of suspects. Clearview CEO Hoan Ton-That has said that the software is “strictly for law enforcement,” but the client list obtained by BuzzFeed shows that the software is also being used by some of the world’s biggest companies.

They include retailers (Walmart, Kohl’s, Best Buy, and Macy’s); banks (Wells Fargo and Bank of America); sports leagues (the NBA); entertainment venues (Madison Square Garden); mobile carriers (AT&T, Verizon, and T-Mobile); casinos (Las Vegas Sands and Pechanga Resort Casino); gyms (Equinox); ticketing platforms (Eventbrite); and cryptocurrency exchanges (Coinbase).

The NBA and Madison Square Garden both said that they had never deployed the software.

“While we conducted a limited test as we do with an array of potential vendors, we are not and have never been a client of this company,” an NBA spokesperson told BuzzFeed News.

“We demoed the product last year and didn’t even move forward with a trial,” said a Madison Square Garden spokesperson.

But how most of the companies have used Clearview remains unclear.


Coinbase is the only one that has provided details on what it has done with the software.

“Our security and compliance teams tested Clearview AI to see if the service could meaningfully bolster our efforts to protect employees and offices against physical threats and investigate fraud,” a Coinbase spokesperson told BuzzFeed, adding that the tests did not use any customer data.

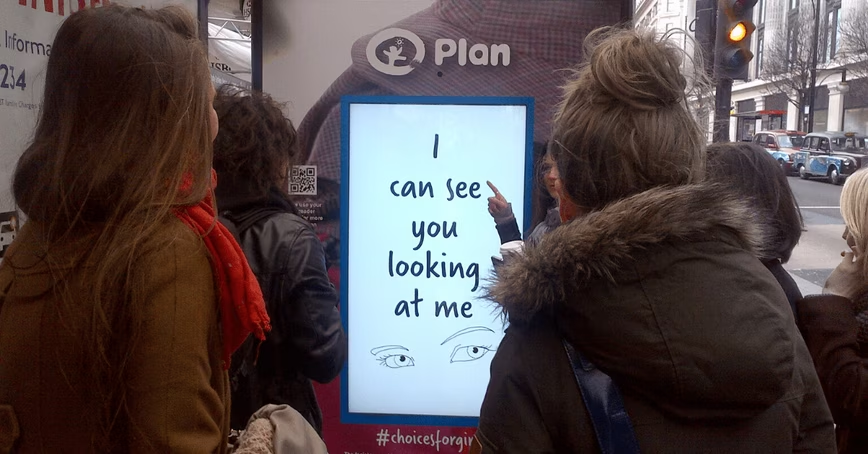

Clearview’s focus on law enforcement suggests that other companies may put it to similar security uses, such as identifying shoplifters in stores and potential troublemakers at basketball games. But this could quickly lead to the unconsented profiling of innocent consumers and passersby.

There are also more ostensibly innocent uses of facial recognition, from tailoring customer service to individual needs to speeding up airline boarding. It has even been used to track down missing children in India and reunite them with their families.

But these deployments can distract us from the dangers of becoming high-tech surveillance states.

An expanding client list

The leaked client list showed that Clearview had been used by more than 2,200 people at law enforcement agencies, government institutions, and companies across 27 countries.

Most of them appeared to have used the software only on free trials. BuzzFeed’s Ryan Mac tweeted that multiple organizations had initially denied using the tool, only to later reveal that employees had used it without senior approval. This suggests that Clearview is courting individual employees as well as senior executives.

These users may even include the White House. The leaked documents contain one entry for “White House Tech Office,” where a single user had logged in to perform six searches. A White House official told BuzzFeed News that no authorization would have been given for this.

The leak also confirms suspicions that the company is pursuing rapid overseas expansion, with clients from Europe, the Middle East, Asia Pacific, and South America all on the list.

They include authoritarian regimes with records of human rights violations, such as Saudi Arabia and the UAE, stoking fears that the software could be used for brutal repression of dissent.

Clearview attorney Tor Ekeland claimed that there were “numerous inaccuracies in this illegally obtained information.”

How to stop Clearview

The leaked client list is another worrying chapter in Clearview’s controversial story. Since The New York Times revealed last month that Clearview was working with police, the company has been attacked for its unconsented use of people’s photos, dubious claims of accuracy, and efforts to work with repressive regimes.

It has also received cease-and-desist letters from a growing list of social media platforms but has shown little interest in complying with their demands. And it may not even have to.

The lack of federal regulation means there are few national safeguards against abuse of facial recognition tech. The leak shows that Clearview can’t protect its clients either. The revelation that these businesses are privately using facial recognition could undermine customer trust and force them to reconsider the benefits of using the tech and working with such a controversial company.

The only way of curtailing this descent into ubiquitous surveillance is by imposing federal laws that restrict the use of facial recognition — or better still, outright ban its use by law enforcement.

Update February 28 6PM CET: Added statements from the NBA and Madison Square Garden.
