App developers are familiar with the importance of App Store Optimization – the holy grail of organic traffic. After all, most downloads stem from basic app store search, and making sure your app is among the first options presented to users is the best way to drive traffic more organic than Whole Foods.

Solid keyword research is a crucial part of ASO (some might say it’s the only part, and they’d be absolutely wrong, but that’s for another post), and yet many developers still believe they can do it all on their own – just give them the right tools and reliable Wi-Fi. And while we cannot disagree with a serious techie’s ability to tackle pretty much every task, there’s still the issue of finding the right tools.

Numerous platforms offer app developers a way to perform keyword research independently, using free online ASO tools. But what exactly do these tools give users? How much can app developers rely on the results they produce? Moburst set out to answer these questions in new research titled “Which ASO Tool Can You Actually Trust?”, comparing the most popular and promising ASO tools on the market, including Sensor Tower, App Annie and MobileDevHQ.

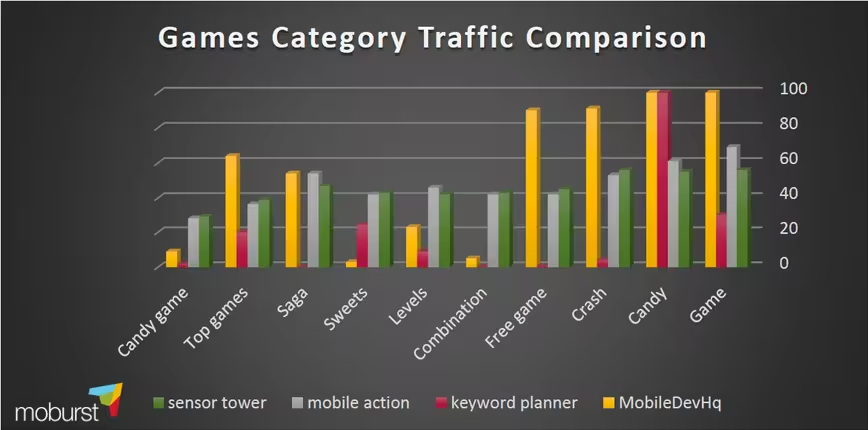

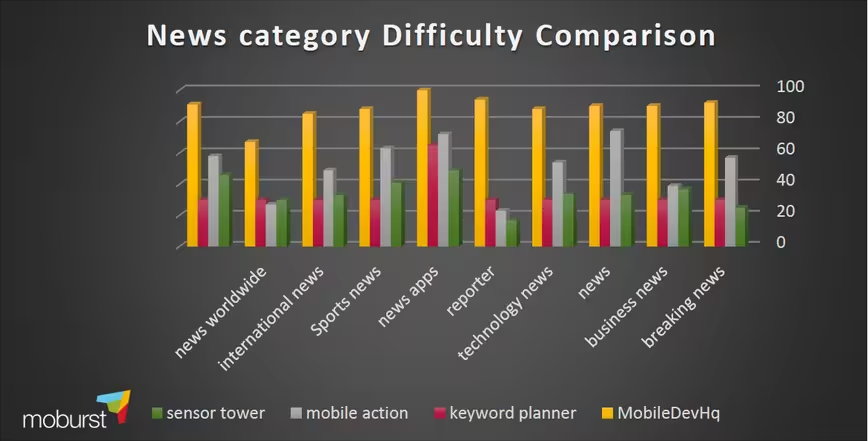

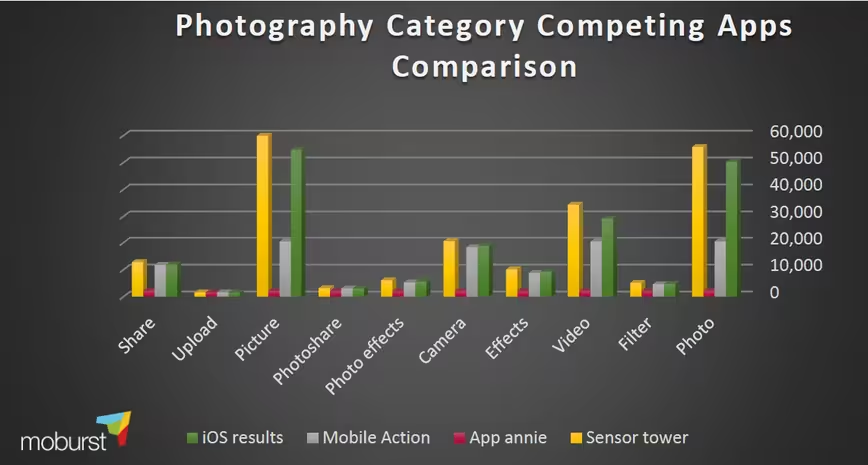

The research compared three main parameters: traffic rate (how popular is this term?), difficulty (how badly will you struggle to get high rankings for it?) and the number of competing apps (you get it).

We found that the tools produce dramatically different results for each of the parameters measured. The research raises the question: can app developers truly count on easy online solutions to conduct thorough keyword research?

When testing various search terms for their “competition” score, which tells developers how many apps are competing for the same search term, we were amazed to see the difference between the platforms, which in some cases exceeded 800 percent. The scores were also dramatically different from the number of apps that appeared in Apple’s results for many of the search terms we tested.
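To make a gap of that size concrete, here is a minimal sketch of one plausible way to measure the spread between tools for a single search term: how much larger the highest reported count is than the lowest. The function name, the tool labels and the counts are all made up for illustration. They are not figures from the research, and the research may have calculated its percentage differently.

```python
def percent_gap(counts):
    """Return how much larger the highest count is than the lowest, in percent."""
    low = min(counts.values())
    high = max(counts.values())
    if low <= 0:
        raise ValueError("counts must be positive")
    return (high - low) / low * 100


# Hypothetical competition counts for one search term (placeholder numbers,
# not data from the research).
example_counts = {"tool_a": 100, "tool_b": 450, "tool_c": 950}

print(f"{percent_gap(example_counts):.0f}% gap between lowest and highest")
```

With these placeholder numbers, the highest count is 850 percent larger than the lowest, which is the kind of spread that makes a single tool's "competition" score hard to take at face value.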

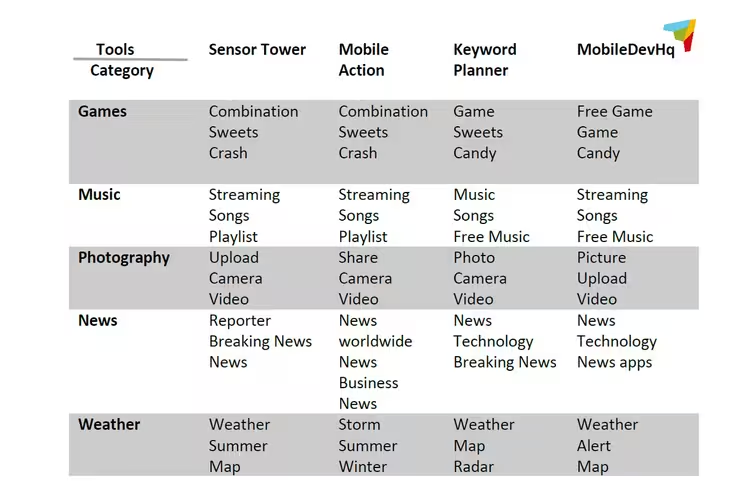

The following table presents the top keywords that were chosen, based on the data produced by each of the tools we have tested:

As you can see, out of fifty terms that were tested, only three would have been recommended by all platforms as one of the leading terms. This means that the massive gap between one tool and the next may very well lead developers to choose the wrong keywords.
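If you want to run the same kind of sanity check on your own keyword list, the sketch below shows one way to find the terms that every tool places among its leaders. It assumes you have boiled each tool's output down to a single score per keyword, which is a simplification and not necessarily how the research ranked terms. The function name, tool labels, keywords and scores are all hypothetical.

```python
def shared_top_keywords(scores_by_tool, top_n):
    """Return the keywords that appear in every tool's top_n list."""
    top_sets = []
    for scores in scores_by_tool.values():
        # Rank this tool's keywords from highest score to lowest.
        ranked = sorted(scores, key=scores.get, reverse=True)
        top_sets.append(set(ranked[:top_n]))
    return set.intersection(*top_sets) if top_sets else set()


# Hypothetical scores per tool (placeholder data, not from the research).
example_scores = {
    "tool_a": {"photo": 9, "camera": 8, "filter": 3, "selfie": 2},
    "tool_b": {"photo": 7, "camera": 2, "filter": 8, "selfie": 1},
    "tool_c": {"photo": 6, "camera": 1, "filter": 2, "selfie": 9},
}

print(shared_top_keywords(example_scores, top_n=2))
```

In this toy example each tool has a different runner-up, so only one keyword survives the intersection. A small overlap like that is a sign the tools disagree and that none of the lists should be trusted on its own.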

The average app takes about eight months to generate significant ASO results, which could very well be the result of a chaotic and ineffective process, done by amateurs using tools that were made for professionals.

Developers clearly need serious work and know-how to draw the right conclusions, which is why Moburst’s research recommends combining different tools in order to create a more established foundation.

The overwhelming inconsistency we’ve witnessed suggests that app developers who wish to discover the exact keywords to include in their app page are in trouble. Not only are they unable to base their research solely on one tool, but combining different tools might only add to their confusion. The research presents a disturbing picture that every mobile dev out there should take into consideration in their next project.

The full research can be downloaded here

Image credit: Shutterstock
