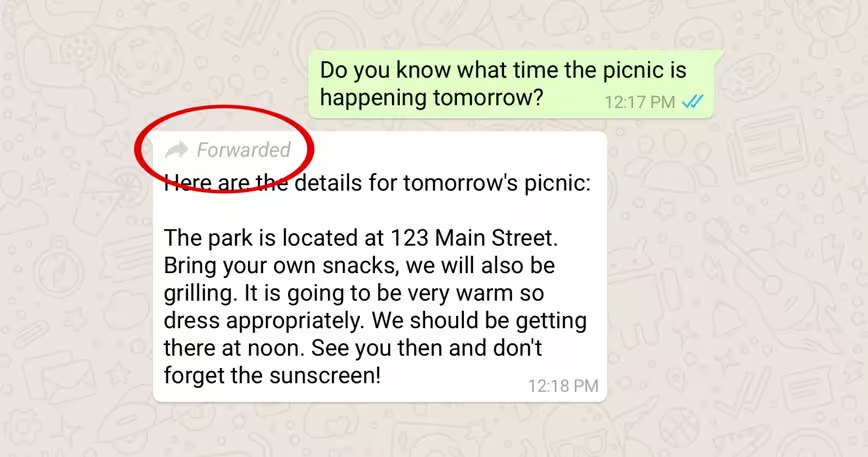

Continuing its mission to help fight the spread of misinformation on its platform, WhatsApp is introducing a label to help identify forwarded messages.

Basically, if a message wasn’t composed by the sender in your conversation, it’ll have a ‘forwarded’ label at the top.

That’s a start in the war against fake news. WhatsApp came under fire after a string of lynchings took place across India, sparked by misleading messages spread through the service. The government called on the company to address the issue in India, home to its largest user base in the world, and this is one of the steps it’s taking to curb the spread of misinformation.

While it’s good to see WhatsApp acting quickly, the new feature likely won’t help much. Knowing that a message about kidnappers in one’s area is a forward (and not originally composed by whoever sent it) may not lead recipients to assume it’s false. It could even have the opposite effect, and encourage them to believe that if it’s been shared from elsewhere, it might be information that should be taken seriously.

In case you’re wondering why WhatsApp can’t simply scan the contents of messages, look for misinformation, and censor it on its own, the reason is that your correspondence is encrypted from end to end; the company can’t read messages as they pass through its servers.

But why not build a tool to empower everyone in a conversation to tackle misinformation instead? WhatsApp could allow people to mark forwarded messages as ‘bogus’ if they believe they contain misleading content, and then block those messages from being forwarded to other conversations once multiple users have flagged them.
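To make the idea concrete, here is a minimal sketch of how such a flag-and-restrict rule might work. Everything in it is an assumption for illustration, not anything WhatsApp has described: the `ForwardTracker` class, the threshold of three flags, and the choice to identify a message by a hash of its text computed on the user's device (so that the service would only ever see a fingerprint, not the message itself).

```python
import hashlib
from collections import defaultdict

# Hypothetical threshold: how many distinct users must flag a message
# before it can no longer be forwarded.
BOGUS_THRESHOLD = 3


class ForwardTracker:
    """Illustrative sketch only; not WhatsApp's design or API."""

    def __init__(self, threshold=BOGUS_THRESHOLD):
        self.threshold = threshold
        # fingerprint -> set of user IDs that flagged the message as bogus
        self._flags = defaultdict(set)

    @staticmethod
    def fingerprint(text):
        # Assumption: the fingerprint is computed on the device, so only
        # this hash (never the message text) is shared with the service.
        normalized = " ".join(text.split()).lower()
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def flag_bogus(self, user_id, text):
        # A set ensures each user counts at most once per message.
        self._flags[self.fingerprint(text)].add(user_id)

    def can_forward(self, text):
        flaggers = self._flags.get(self.fingerprint(text), ())
        return len(flaggers) < self.threshold
```

A scheme this simple is easy to sidestep, since changing a single word produces a different fingerprint, and it could be abused to suppress genuine messages. Those are exactly the kinds of trade-offs a real design would have to work through.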

I also like Medianama editor Nikhil Pahwa’s idea of allowing users to mark select messages as ‘public,’ i.e. forwardable. He also proposed adding a unique ID to these messages so they can be traced back to their origin by law enforcement if necessary, and wiped from every conversation on the platform once WhatsApp has reviewed them and found them to contain misinformation.

Implementing solutions like these will require careful deliberation and plenty of testing to see if they work across a wide range of scenarios. There are also privacy concerns and issues of censorship that will need to be considered before deploying any of them. But if WhatsApp is keen on tackling the spread of fake news among 1.5 billion users, it’ll have to do better than a ‘forwarded’ label.
