Welcome to the AI era. We missed the official announcement too, but it’s obvious that’s what we’re in. This new paradigm requires the acceptance or denial of a new brand of faith: Artificial general intelligence (AGI).

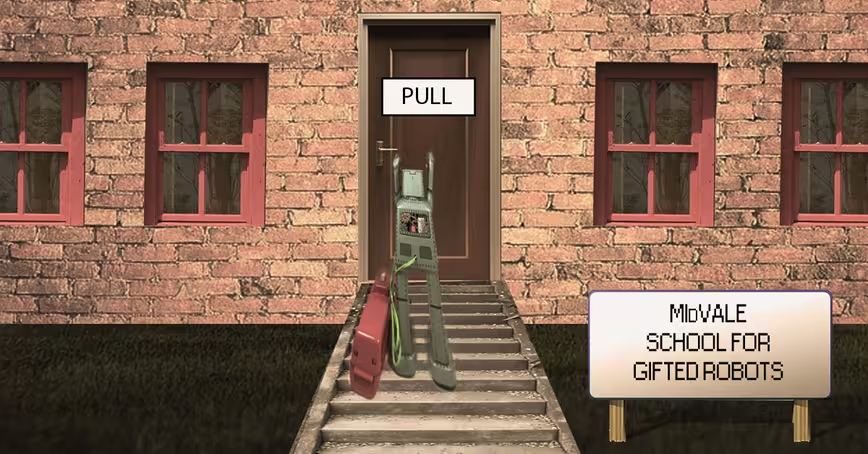

Or, sentient machines, if you prefer. Either way, let’s talk about killer robots.

It seems like the entire world is obsessed with AGI, the idea that a machine could become “alive” or “sentient.” At a bare minimum, an AGI would be as capable as a human in any domain. Right now, machines are no more alive than a rock and no more “capable” than a calculator. But that doesn’t stop them from performing superhuman feats, thanks to regular AI.

The fear, of course, is that machines will become smart and rise up against us. In true science fiction fashion, they’d probably decide that the best way to protect humans — or save the planet, or whatever other obscure goal our overlords may have in mind — is to kill us all.

That’s bleak, and there’s obviously room for further debate on the subject, but let’s assume that AGI – a superintelligence – is possible and it’s up to us, right now, to plan ahead for the imminent uprising. What the hell could we do to stop it?

Michaël Trazzi and Roman V. Yampolskiy, researchers from Sorbonne Université in France and the University of Louisville respectively, believe the answer is to introduce artificial stupidity into the mix. Yes, artificial stupidity. No, they’re not joking.

Often, the media likes to seize upon sensational science, but this is a legit study conducted by serious researchers. Here’s a snippet from the white paper:

> We say that an AI is made Artificially Stupid on a task when some limitations are deliberately introduced to match a human’s ability to do the task. An Artificial General Intelligence (AGI) can be made safer by limiting its computing power and memory, or by introducing Artificial Stupidity on certain tasks. We survey human intellectual limits and give recommendations for which limits to implement in order to build a safe AGI.

Intellectuals among our audience might feel insulted: the scientists are defining “artificial stupidity” as making robots as dumb as smart people are. But the researchers aren’t picking on anyone. It’s a logical concern.

The truth is that machines already outperform humans in many tasks. And, if your particular brand of modern-day faith allows you to believe in AGI, then we can assume that scaling their already superhuman abilities would lead to a true superintelligence. We’d be at an immediate disadvantage in disputing anything such a being chose to do.

According to the researchers:

> Humans have clear computational constraints (memory, processing, computing and clock speed) and have developed cognitive biases. An Artificial General Intelligence (AGI) is not a priori constrained by such computational and cognitive limits.

One way to inhibit AGI, the team proposes, is to integrate hardware constraints. But the scientists realize that a superintelligent computer could order more power online through cloud computing, by purchasing hardware and having it shipped, or even by just manipulating a dumb human into doing it.

The next step, according to the researchers, is to hard code a rule or law: “thou shalt not upgrade thyself or thy code,” or something like that. But what’s to stop our super smart AI from brute-forcing a change to that rule or conning a human into making it? They thought of that too.

Aside from our hardware limitations, there’s one major thing that makes humans really, really stupid: Our biases. The team says:

> Incorporating human biases into the AGI present several advantages: they can limit the AGI’s intelligence and make the AGI fundamentally safer by avoiding behaviors that might harm humans.

The paper goes on to list 14 specific human biases that could be deliberately coded into a neural network, essentially making it too stupid to surpass a human’s intelligence. We’re not going to go over all of them here, but you can read the team’s entire white paper on arXiv.

The team asserts that artificial stupidity would not only protect us from the threat of superintelligent artificial general intelligence, but make artificial intelligence, in general, more human-like.

When it comes to AGI: Anything you can do, AI can do better. But the right dose of stupid should keep the playing field level.
