One of the horrible gifts that the year 2016 brought us was the rise of fake news, and we’re still dealing with the consequences (cough Trump cough).

Fortunately, people all around the world are looking for ways to blunt the effectiveness of misinformation. TNW spoke to Dhruv Ghulati, CEO of Factmata, a London-based startup developing a new automated fact-checking tool.

Who should fix the problem?

The first thing that needs to be established is who’s responsible for fake news. Some people blame social media platforms and their failure to respond to this new epidemic. Ghulati understands why people might think so, as personalized newsfeeds can distort people’s perceptions of certain issues.

It seems like a lot of the platforms have unwittingly become incentivisers for [fake] news. When you like something — they look at the content of what that ‘like’ is about — and then suddenly your newsfeed gets filled with similar content. That makes the story look like it’s a very prevalent thing, but in reality it may not be a massive phenomenon.

However, Ghulati adds that fake news stories are, by their nature, extremely shareable, which is exactly the type of content we’ve built our news business models around.

In Ghulati’s opinion, every part of the chain — from journalists to politicians, platforms to media organizations — needs to improve to combat fake news. However, the responsibility ultimately lies with us, the users.

We need to start to realize the consequences of spreading, not only fake news, but spreading misinformation in general. When you say something, make sure your facts are backed up. Right now, unfortunately, when you say something the consequences are not just for the immediate person you’re saying it to, but it’s across the wider internet.

It’s a difficult task to completely change the way people think about how they conduct themselves online, but it’s not impossible. The first step might be to help people realize how they absorb information and encourage more responsible media consumption.

That’s why Factmata, which is backed by the Google Digital News Initiative, is trying to create tools that help people become their own fact-checkers by making it easy to obtain factual data about the news stories they come across.

It’s all about context

Context is absolutely essential when it comes to forming an informed opinion on a subject. The problem, however, is that it can be difficult for ordinary users to find the time to search for additional information on a given topic.

Ghulati says that the aim is to create an additional data layer on top of information, to make it easier for users to take responsibility for the ‘facts’ they read. When you open a news article, Factmata’s main tool would provide factual context in real time.

An example of how this would work is that when you’d open an article or a statement about Muslim immigration, you’d suddenly see the actual data about the issue. This would come in the form of charts, tables and so on. That layer is a layer of data on top of information.

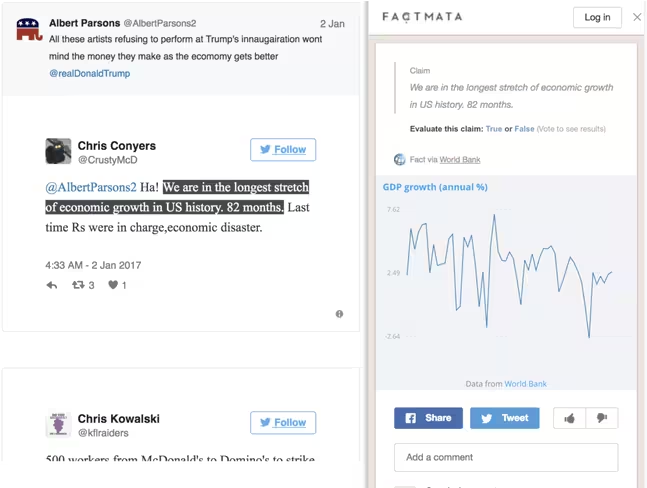

In the screenshot above you can see a mockup from Factmata’s Google DNI project, which gives an indication of how this might look. When users come across a statement, they also receive relevant data that helps them check whether it’s true or not.

According to Ghulati, the current problem is that much of the data is stored in places that aren’t really accessible to the average user. Factmata is working on gathering that data and packaging it in an engaging way.

Obviously, right now you can search for all sorts of things on the web and explore a lot of information. But the problem is that it requires a lot of time and effort from the users as they need to know what they’re looking for.

The idea of [improved] context is, having something on the same piece of content that gives additional information — without forcing the user to navigate to a new page.

That means that Factmata’s tool won’t actively weed out fake news. Instead, it empowers us to check the validity of news for ourselves — and encourages us to think critically (based on fact). This way, you no longer get to yell out ‘fake news’ when you come across something that doesn’t match your political orientation.

Best of both worlds

In order to achieve this added layer to our internet experience, Factmata tries to combine the two main approaches to fact-checking: AI and human review.

Projects like Faktisk in Norway show that human reviews can achieve great things, but I’m not sure whether similar projects would work for bigger markets. Using AI instead of human reviewers can speed up the fact-checking process dramatically, but AI is still far from the level of language understanding and decision-making needed to stop fake news.

What we’re starting with is actually a really simple platform. It’s effectively a crowdsourced fact-checking, but empowered by AI. We’re trying to build a system that detects stuff that you should fact-check, detecting statements that could be misleading, and that is purely done through machine learning algorithms that should improve over time.

Previously, fact-checking was done by fact-checking organizations (and there may be around 100 to 120 of them), and our idea is based on whether we can democratize this process. Can the community be good enough to do the fact-checking?

However, Ghulati states that fake news can never be solved solely by democratizing fact-checking. Experts will always need to be part of the system as well.

It’s likely our initial core users on the platform will be expert fact-checkers and investigative journalists, as they have the necessary expertise and lots of invaluable experience to do this, and I hope they will find Factmata helpful.

However, we want Factmata to be a tool where gradually anyone can get involved in the verification and fact-checking process. We need to give everyone the skills required to be discerning of content, to truly reduce fake news.

To many it might seem strange to eventually put fact-checking in the hands of the public, which has fallen for fake news in the past, but Ghulati still remains optimistic.

It’s true that people aren’t especially trustworthy when they’re in their filter bubbles on social media platforms. But once you extract people from their echo chambers, and provide them with the factual data, they become reliable as a community.

I’m not certain that Factmata’s tool, which is scheduled for release by the end of the year, will work, but I think it’s worth a shot. The project is ambitious and idealistic, but in the end its success will probably come down to one thing: user responsibility, which could prove problematic.

Can people who’ve been willingly duped by fake news in the past really change their ways? Only time will tell, but I think it’s important to realize that these types of changes need to come from within.

People don’t react well to being told that they’re stupid or that their views are wrong, so it’s obvious we need less antagonizing ways to move the conversation forward. A system based on encouraging people to become more informed might be just the thing to do that.
