Stereotyping and bias — be that conscious or subconscious — is one of the leading contributors to the gender gap prevalent today. It’s time to identify and challenge the societal structure that systematically prevents women from thriving — one of these structures is gendered language and the patriarchal ideas built into it.

In August, a group of computer scientists from the University of Copenhagen and other universities used machine learning to analyze 3.5 million books, published between 1900 to 2008, to find out whether the language used to describe men and women differed — spoiler alert: it did.

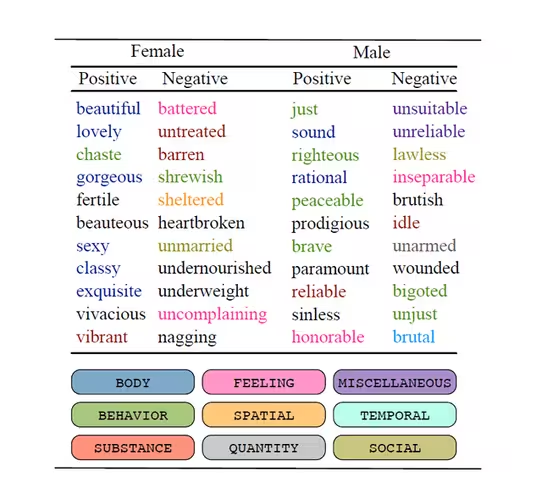

It found that “beautiful,” “gorgeous,” and “sexy” were the three most frequent adjectives used to describe women in literature. However, the most commonly used adjectives to describe men were “righteous,” “rational,” and “brave.”

The full results reveal that men are typically described by words that refer to their behavior, usually in a positive way. While adjectives generally used to describe women tend to be associated with physical appearance.

“We are clearly able to see the words used for women refer much more to their appearances than the words used to describe men. Thus, we have been able to confirm a widespread perception, only now at a statistical level,” Isabelle Augenstein, a computer scientist involved in the study, said in a blog post.

Using machine learning, researchers were able to remove words associated with gender-specific nouns such as “daughter” and “stewardess.” They then analyzed whether the words left behind had a positive, negative, or neutral connotation, and were then categorized into sections such as “behavior,” “body,” “feeling,” and “mind.”

They found that in most cases, negative verbs associated with body and appearance were used five times more often for women than they were for men. Also, words categorized as positive or neutral in relation to appearance were used twice as often when describing women.

Although many of the books used in this study were published several decades ago, they still play an active role, Augenstein argued. Traditional beliefs are still deeply embedded into today’s society, and subsequently into our technology. For example, the industry-leading voice assistants, such as Amazon’s Alexa and Apple’s Siri, are given female names and voices by default — further perpetuating the stereotype that women are there to serve and be of assistance.

Another project hoping to change perceptions around everyday, gender-biased language is the “Gendered Project” — a growing library of gendered words in the English dictionary. This tool allows people to filter through its dataset of gendered words to see what words are considered male, female, and opposite-gender equivalent.

The Gendered Project is bringing attention to the importance of language and the words we use, particularly the biases and gaps in gendered language. This includes issues like semantic derogation and sexualization — the process in which words take on more negative connotations or denotations. For example, the word “mistress” was once the female counterpart of “mister” but now carries negative and sexualized meanings, which is not seen in its male equivalent.

These projects aim to get people thinking about the values and biases that exist in language, and to interrogate the gendered words they use and why. While technology has been known to further perpetuate subconscious bias, it’s continuously addressing the issue. But tech, of course, can’t solve everything: Change happens when we become more aware.

Get the TNW newsletter

Get the most important tech news in your inbox each week.