The UK government today announced it would invest £250 million (roughly $300 million) in artificial intelligence technology, which would be used by the public healthcare system, known as the NHS, to improve the quality of care.

This pioneering move comes from Boris Johnson, the newly crowned prime minister, as well as Matt Hancock, the current Health Secretary, who already has a reputation for being one of the few tech-conscious members of government. Regular readers of TNW will recall when he launched his own ill-fated social network to connect with constituents.

The government claims this AI initiative will improve care and cut waiting times for vital procedures. It’s worth adding that the NHS already uses AI successfully in several important areas, so this isn’t necessarily a first for this beloved healthcare service. The Guardian notes that it’s used in cancer screening procedures, as well as in identifying patients who are likely to miss appointments, in order to send targeted reminders.

However, there are reasons to be concerned. Details are thin on the ground, and it’s not clear how this new government AI healthcare lab will work in practice. With that in mind, I’ve got some questions:

Who will do the work?

It’s fair to say that large healthcare IT projects don’t have the best reputation in the UK. During the 2000s, the Tony Blair-led Labour government embarked on an ambitious initiative to digitize procedures that were otherwise analog. It was nothing short of a calamitous and costly failure that went over budget by several billion pounds, only to be scrapped by the coalition government in 2011.

The reasons for failure can be attributed to the sheer scope of the NHS. In 2015, it was ranked as the world’s fifth largest employer, behind McDonald’s and Walmart. Building a system to accommodate thousands of surgeries, community clinics, and hospitals is not an easy task.

But much of the blame was ultimately pointed at the Blair government’s affection for outsourcing. A vast proportion of work was done by large multinational IT companies, namely Computer Sciences Corporation (CSC) and Fujitsu, both of which did a substandard job while simultaneously milking the public purse for anything they could get.

Another outsourcing firm used by the government on IT projects, Capita, has a similarly woeful reputation, earning the unkind moniker “Crapita” from the legendary British satirical magazine Private Eye as a result.

So, Boris: let me ask you a question. Who is actually going to build these systems? Will the contract go to these large multinationals, or will you take the work in-house?

Personally, I’m hoping for the latter. When government tech projects are done by internal teams, they have a tendency to be better executed, as well as cheaper to build. A great example of that is the Government Digital Service (GDS), a product of the 2010 Tory/Lib Dem coalition, which has done an amazing job of standardizing how the government’s public-facing IT systems work.

GDS has also done some genuinely innovative stuff. An example of this is GOV.UK Verify, which allows freelancers and sole traders to file their tax returns without having to remember arcane ID numbers and passwords.

The biggest problem for this new AI initiative, however, will likely be finding people to do the work.

Although the UK is a hotbed of AI talent, much of it has been snatched up by Google’s DeepMind and Microsoft Research. Therefore, the NHS will likely have to recruit from abroad, which will become a lot harder after Brexit. Candidates will have to deal with new bureaucracies, particularly when it comes to immigration, and the weak pound will make working in the UK far less attractive to foreign applicants.

How will you protect people’s privacy?

I’ve always felt that the term “artificial intelligence” is a bit of a misnomer, largely because much of the development and training process is guided by human hands. We witnessed this just yesterday with Microsoft, when it emerged that third-party contractors were accessing recordings of translated Skype calls as part of quality improvement processes.

In recent weeks, the other three major tech companies — Google, Apple, and Amazon — have all found themselves embroiled in similar scandals.

So, Boris, given the inherent sensitivity of people’s healthcare data, how will you handle this tricky aspect of building AI applications? Will you inform patients that engineers and QA testers will be involved in the development and training process of any algorithm, and will therefore have access to their private healthcare data?

And crucially, what will you do to prevent this data from being exfiltrated to a third party, as happened when a Microsoft contractor provided Motherboard with snippets of people’s intimate (and sometimes deeply lurid) Skype calls?

Will these AI applications represent the diversity of British society?

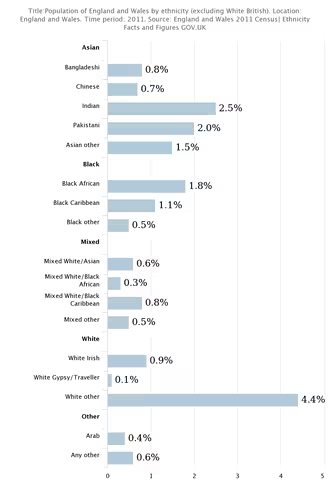

Britain is a wonderfully diverse country. Over 250 languages are spoken in London alone, making it the most linguistically diverse city in the world. Around 3.3 percent of people living in England and Wales are black. A further 7.5 percent identify as Asian, including Indian, Pakistani, and Chinese. Roughly 2.2 percent are of a mixed background, while 0.4 percent identify as Arab.

If these healthcare AI applications are trained solely on a specific demographic – say, white people – they could produce worse healthcare outcomes for those of an ethnic minority background.

Boris, what efforts will you take to ensure that any applications represent the inherently diverse nature of British society, so everyone can get the appropriate and adequate healthcare they need?

Will this get in the way of clinicians?

The beauty of the NHS over the American healthcare system (besides the fact that it’s free and medical bankruptcy isn’t a thing in the UK) is that doctors aren’t lumbered with much administrative work, like billing or negotiating prices with insurance companies. We leave nurses to nurse, and doctors to doctor, and the end result is a healthcare system that’s among the most cost-efficient in the world.

Boris, can you guarantee that any new AI healthcare apps won’t become a hindrance to medical professionals, consuming time that would otherwise be spent dealing with patients?

The entire point of AI, I know, is to automate tasks that would otherwise be done by humans. But if clinicians are lumbered with tools that are clunky and complicated (see: any NHS IT project developed in the 2000s), this approach may backfire.

It’s also worth noting that there are many doctors and nurses (as well as assistants, scientists, and receptionists) who have worked in the NHS for decades. They’re good at their jobs, but they’re not digital natives, and they will probably struggle with complicated new computer systems.

Newer isn’t always better.

Are you sure the money couldn’t be better spent?

The NHS is a beloved institution, but it’s also one in crisis, largely thanks to years of neglect under successive coalition and Tory governments. The end result is that doctors and nurses are leaving in droves, and the NHS is struggling to replace them.

The toxic atmosphere created by Brexit, combined with the removal of financial incentives to train as nurses and doctors, has created a chronic recruiting crisis for the NHS, which faced 97,000 vacant positions in late 2017 alone.

Any new AI applications may lower the burden on struggling clinicians. They may not. Either way, Boris, are you sure this money couldn’t be better spent elsewhere? Perhaps on recruiting nurses, hiring more doctors, or paying towards the cost of restoring healthcare education bursaries, which were scrapped in 2017?

I’m not saying that spending £250 million on healthcare AI is a bad idea. But you have to understand my skepticism. The government has a track record of failure when it comes to ambitious IT projects. And there’s nothing more ambitious than changing how healthcare works.
