Watching an AI train, for those of us who aren't developers, is about as exciting as watching paint dry or grass grow. But every once in a while someone throws some ninjas into the mix and makes it fun.

An AI programmer who goes by the GitHub handle Ash47 recently published a series of projects designed to demonstrate how machines learn. He creates pseudo-gaming environments where an AI has to accomplish a simple goal: stay alive. The most interesting thing about the AIs in his projects isn't what they can do; it's how they learn to do it.

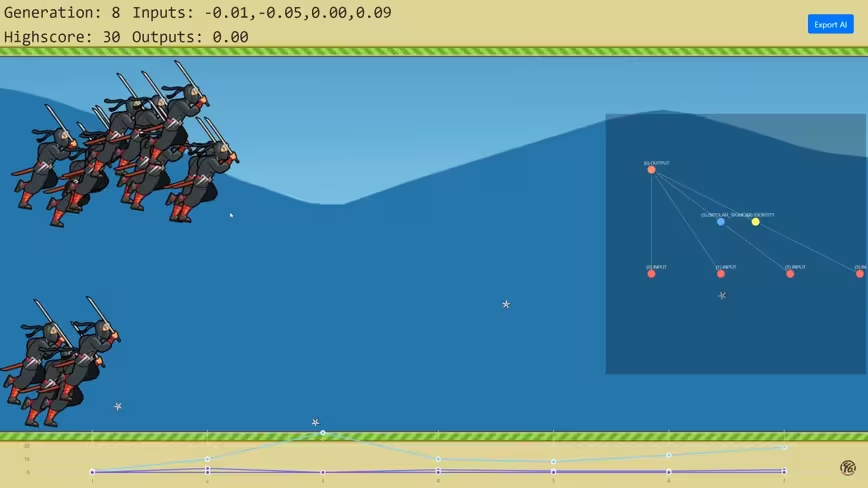

One project, Ninja AI Trainer, is an interactive "game" where AI-powered ninja characters attempt to dodge throwing stars. It's basically humans vs. ninjas, since you control where the throwing stars appear.

The point of the game is to watch an AI learn to dodge throwing stars through reinforcement learning. When it first loads and the AI is on generation one, the ninjas are pretty easy to kill. You can set how many ninjas train on screen at once (as many as your computer can handle) and train them all simultaneously.

And if you've massacred enough ninjas, by the time the AI reaches a few dozen generations it starts to produce some that can dodge pretty well. Hypothetically, you could wait around while the simulation runs a few hundred thousand generations and watch as it becomes borderline perfect.
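The article doesn't show Ash47's code, so what follows is only a minimal sketch of what a generation-by-generation training loop can look like. It assumes an evolution-style approach (score every ninja, keep the best survivors, refill the population with slightly mutated copies of them), which may differ from the method Ash47 actually uses. The one-dimensional track, the `simulate` and `evolve` functions, the three-weight "brain" and the randomly placed stars (standing in for the ones you'd throw by hand) are all hypothetical.

```python
import random

def simulate(weights, stars):
    """Count how many stars a ninja dodges before it is hit (hypothetical toy game)."""
    x = 0.0  # ninja position on a 1-D track from -1 to 1
    for step, star in enumerate(stars):
        # Hypothetical "brain": a linear policy over (star offset, own position, bias).
        move = weights[0] * (star - x) + weights[1] * x + weights[2]
        move = max(-0.3, min(0.3, move))  # limit how far a ninja can move per step
        x = max(-1.0, min(1.0, x + move))  # stay on the track
        if abs(star - x) < 0.2:  # the star landed on the ninja
            return step
    return len(stars)  # survived every star

def evolve(generations=30, population=50, elite=5, seed=0):
    """Return the best survival score seen in each generation."""
    rng = random.Random(seed)
    # One fixed star sequence, so scores are comparable across generations.
    stars = [rng.uniform(-1.0, 1.0) for _ in range(200)]
    # Generation one: random, untrained brains that are easy to hit.
    pop = [[rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(population)]
    best_per_gen = []
    for _ in range(generations):
        ranked = sorted(pop, key=lambda w: simulate(w, stars), reverse=True)
        best_per_gen.append(simulate(ranked[0], stars))
        parents = ranked[:elite]  # the survivors that dodged longest
        # Refill the population with mutated copies of the survivors.
        children = [
            [w + rng.gauss(0.0, 0.3) for w in rng.choice(parents)]
            for _ in range(population - elite)
        ]
        pop = parents + children
    return best_per_gen

if __name__ == "__main__":
    for gen, score in enumerate(evolve(), start=1):
        print(f"generation {gen}: best ninja dodged {score} stars")
```

Because the top performers are carried into the next generation unchanged and every generation faces the same stars, the best score in this sketch can never drop from one generation to the next; the random mutations are what give later generations a chance to beat it.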

This is a fantastic and accessible illustration of how many machine learning systems improve: through lots of trial, error and feedback. And it's incredibly relevant to what's happening at the cutting edge of AI technology. For example, the brains of Waymo's driverless cars go through millions of miles of simulated driving before they're allowed on public roadways.

You can check out all of Ash47’s AI projects here, and his GitHub here. And don’t forget to visit our artificial intelligence section for all the latest machine learning news and analysis.
