While many people already play around with their mobile phones or tablets while watching TV, perhaps following discussion about the show on Twitter, the BBC is doing some fascinating R&D work which could help mobile devices become more useful ‘second screens’ for television.

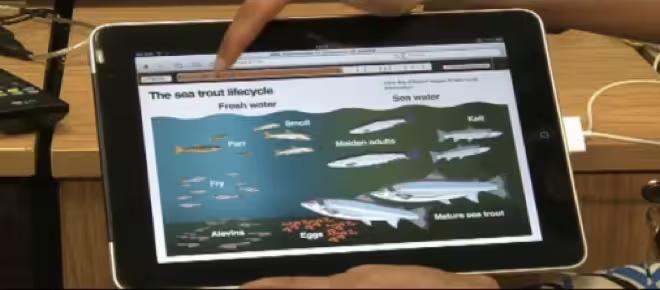

The Orchestrated Media project is working to display relevant, supporting content for the show you’re watching, in sync with the show. So, a documentary on dinosaurs may display information about the beasts currently depicted on screen, or a news broadcast could display detailed information about the current story without you having to look for it.

The BBC detailed some of its work in a blog post this week. A field trial is under way in which around 200 people play a mobile game in sync with a popular Saturday night gameshow. (UPDATE: The BBC’s Jerry Kramskoy has been in touch to clarify that this is a “pilot with no guarantee of future service from the BBC” rather than a ‘trial’).

This is impressive stuff: not only does the mobile content stay in sync with the TV show, it’s also possible to jump to different points in the show right from the mobile device (for more on this, see the update below). This all works by using audio or video recognition of the show currently being watched to match up content correctly. For shows being watched as they’re broadcast, the app could instead take its cues to display content from the broadcaster over the Internet.

As we reported earlier this week, media check-in app Miso is developing into a ‘social TV platform’, signing a deal to display supporting ‘second screen’ content for the new season of Dexter in sync with the show.

The work has already caught the attention of Google Executive Chairman, Eric Schmidt, who mentioned it in his recent lecture at the Edinburgh TV Festival. The BBC says that it’s engaging with the Web standards organisation W3C and other players in the TV industry to agree common standards for such technology to become a widespread reality.

UPDATE: The BBC’s Jerry Kramskoy has told us a little more about the technology behind Orchestrated Media:

“Audio watermarking works by striping the audio in the content, which is broadcast. The companion device listens out for this to sync up. So this is a one-way flow of sync … the TV is the master, the companion the slave … always. This means the companion cannot control the TV.

“To make the TV content follow the companion device content, as in the Autumnwatch video on my blog, requires s/w in the TV that the companion talks to, to allow the companion to be the master when it wants. This latter scenario is what we refer to as symmetric sync, and the TV and companion are in a peer-to-peer relationship, whereas the previous one-way sync we refer to as asymmetric sync, where the TV and companion are in a master-slave relationship. Standardisation is necessary around what and how features are exposed by the TV to support symmetric sync.”
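For readers who think in code, the difference between the two modes Kramskoy describes can be sketched roughly as follows. This is a toy model only, not the BBC’s implementation: the `Device` class and the `link_asymmetric` and `link_symmetric` helpers are hypothetical names invented for illustration, and a plain method call stands in for both the audio watermark and whatever software interface a TV would need to expose.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Device:
    """A TV or companion device with a playback position in seconds (hypothetical model)."""
    name: str
    position: float = 0.0
    # Devices that follow this one; excluded from repr/compare to avoid cycles.
    followers: List["Device"] = field(default_factory=list, repr=False, compare=False)

    def seek(self, position: float) -> None:
        """Move this device, then push the new position to every follower."""
        self.position = position
        for follower in self.followers:
            follower.position = position


def link_asymmetric(tv: Device, companion: Device) -> None:
    """One-way sync: the companion follows the TV, never the reverse."""
    tv.followers.append(companion)


def link_symmetric(tv: Device, companion: Device) -> None:
    """Two-way sync: either device can lead, so each follows the other."""
    tv.followers.append(companion)
    companion.followers.append(tv)


if __name__ == "__main__":
    # Asymmetric: seeking on the phone leaves the TV where it was.
    tv, phone = Device("tv"), Device("phone")
    link_asymmetric(tv, phone)
    tv.seek(120.0)
    phone.seek(30.0)
    print(tv.position, phone.position)

    # Symmetric: seeking on the phone moves the TV as well.
    tv2, phone2 = Device("tv"), Device("phone")
    link_symmetric(tv2, phone2)
    phone2.seek(30.0)
    print(tv2.position, phone2.position)
```

In the asymmetric case a seek on the companion changes only the companion, which matches the point that “the companion cannot control the TV”. In the symmetric case the TV has to accept position updates from the companion, which is the part that would need the standardised TV-side interface mentioned in the quote.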
