At Springboard, we pair mentors with learners in data science. We often get questions about whether to use Python or R – and we’ve come to a conclusion thanks to insight from our community of mentors and learners.

Data science has been called the sexiest job of the 21st century. Data scientists around the world are presented with exciting problems to solve. Within the complex questions they have to ask and the growing mountain of data they work with rests a set of insights that can change entire industries. To get there, data scientists often rely on programming languages and tools.

This is an excerpt of our free, comprehensive guide to getting a job in data science that deals with two of the most common tools in data science, Python and R.

Python

Python is a versatile programming language that can do everything from data mining to plotting graphs. Its design philosophy is based on the importance of readability and simplicity. From The Zen of Python:

- Beautiful is better than ugly.

- Explicit is better than implicit.

- Simple is better than complex.

- Complex is better than complicated.

- Flat is better than nested.

- Sparse is better than dense.

- Readability counts.

As you can imagine, algorithms in Python are designed to be easy to read and write. Blocks of Python code are separated by indentations. Within each block, you’ll discover a syntax that wouldn’t be out of place in a technical handbook.
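
To make the point about indentation concrete, here is a minimal sketch. The function name `classify_scores` and the sample scores are made up for illustration; the only thing to notice is that indentation, not braces, marks where each block begins and ends.

```python
def classify_scores(scores, passing=50):
    """Split a list of scores into those that pass and those that fail."""
    passed, failed = [], []
    for score in scores:          # everything indented below belongs to the loop
        if score >= passing:      # one more level of indentation: the if block
            passed.append(score)
        else:
            failed.append(score)
    return passed, failed         # back at function level, so the loop is over


passed, failed = classify_scores([72, 38, 91, 50])
print(passed, failed)
```

Read aloud, the loop is close to plain English: for each score in scores, if the score is at least the passing mark, add it to the passed list.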


Many data scientists use Python to solve their problems: 40 percent of respondents to a definitive data science survey conducted by O’Reilly used Python, which was more than the 36 percent who used Excel.

This has spawned one of the largest programming communities in the world, filled with brilliant and versatile problem-solvers who collaborate to push Python forward. You’ll be sure to find answers to your Python questions on StackOverflow or on Quora. The official Python website is home to a community building out the language’s future.

The community is so large, you’ll find all kinds of open source code libraries suited to solving very specific problems. One example is NLTK, a library of code that makes it easier for computers to process language. With one import statement, you can parse through large texts and get intelligent text analysis from a machine. You’d be able to tell if your Twitter followers are sending you positive or negative messages in a matter of seconds.

The Swiss-Army knife that Python represents fits quite well into data science work:

An introduction to Python and data science

The following introduction to Python for data science will get you set up on the basics. You’ll get set up on a powerful environment manager known as Anaconda. Once you’re done with the tutorial, you’ll have installed all of the essential code libraries you need to start in data science with Python.

Python is a language you can use for nearly every step in the data science process thanks to its versatility.

Collect raw data

Python supports all kinds of different data formats. You can play with comma-separated value documents (known as CSVs) or you can play with JSON sourced from the web. You can import SQL tables directly into your code.
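
As a rough sketch of what reading these three formats looks like, the example below uses only Python’s standard library. The sample records are invented, and an in-memory SQLite database stands in for whatever SQL database you would actually connect to.

```python
import csv
import io
import json
import sqlite3

# CSV: parse comma-separated text (a file opened with open() works the same way).
csv_text = "name,age\nAda,36\nGrace,45\n"
csv_rows = list(csv.DictReader(io.StringIO(csv_text)))

# JSON: decode a payload like one you might receive from a web API.
json_text = '{"users": [{"name": "Ada", "age": 36}]}'
json_data = json.loads(json_text)

# SQL: query a table. The in-memory database here is only a stand-in.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, age INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("Ada", 36), ("Grace", 45)])
sql_rows = conn.execute("SELECT name, age FROM users ORDER BY age").fetchall()
conn.close()

print(csv_rows[0]["name"], json_data["users"][0]["age"], sql_rows)
```

Note that the CSV reader returns every value as a string, while JSON and SQL keep numbers as numbers. That is one of the first things to check when you combine sources.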

You can also create datasets. The Python requests library is a beautiful piece of work that simplifies HTTP requests so that you can pull data from a website with a single line of code. You’ll be able to take data from Wikipedia tables, and once you’ve organized the data you get with beautifulsoup, you’ll be able to analyze it in depth.

You can get any kind of data with Python. If you’re ever stuck, google Python and the dataset you’re looking for to get a solution.

Process the data

To unearth insights from the data, you’ll have to use Pandas, the data analysis library for Python. It can hold large amounts of data without any of the lag that comes from Excel. You’ll be able to filter, sort and display data in a matter of seconds.

Pandas is organized into dataframes, which can be defined and redefined several times throughout a project.

You can clean data by replacing missing or invalid values such as NaN (not a number) with a value that makes sense for numerical analysis, such as 0.
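
A minimal sketch of that cleaning step, assuming a made-up table of purchases per user in which two entries are missing:

```python
import numpy as np
import pandas as pd

# Hypothetical data: purchases per user, with two missing entries.
df = pd.DataFrame({
    "user": ["a", "b", "c", "d"],
    "purchases": [3.0, np.nan, 1.0, np.nan],
})

# Count the gaps first so you know how much of the data you are changing.
missing_before = int(df["purchases"].isna().sum())

# Replace every NaN in the column with 0.
df["purchases"] = df["purchases"].fillna(0)

print(missing_before)
print(df)
```

Whether 0 is the right replacement depends on the question you are asking: it is reasonable when a missing value means "no purchases", but it will drag down an average if the value was simply never recorded.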

You’ll be able to easily scan through the data you have with Pandas and clean up data that makes no empirical sense. If you only have 1,000 users, and your data indicates that 2,000 users have successfully been invited, you might have a data entry error where somebody added an extra zero. Now is the time to look through your data with Pandas and make sure it corresponds to the context of your problem.
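
One way to run that kind of sanity check is sketched below. The column names and weekly figures are hypothetical, and `TOTAL_USERS` is the number you would know from the context of your own problem.

```python
import pandas as pd

TOTAL_USERS = 1000  # assumed to be known from context, not from the data

# Hypothetical weekly invite counts; week 2 looks like it has an extra zero.
invites = pd.DataFrame({
    "week": [1, 2, 3],
    "users_invited": [150, 2000, 180],
})

# Flag rows that cannot be right: more invited users than users in total.
suspicious = invites[invites["users_invited"] > TOTAL_USERS]

print(suspicious)
```

The check only flags the rows. Deciding whether 2,000 should really be 200 still takes a look at where the number came from.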

Explore the data

Once you dive deep into Pandas, you’ll realize how powerful it is. You’ll be able to take complex rules and apply them to the data. Do you want to find how many actors have played Hamlet in the 1950s, in a dataset of every movie that has ever been released? Use the filtering functions and the sorting method to get that information with a few lines of code.
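
Here is a small sketch of the Hamlet question. The four-row cast table, its column names and the placeholder actor names are invented for illustration; a real movie dataset would be far larger and structured differently.

```python
import pandas as pd

# Hypothetical cast list: one row per role in a film.
cast = pd.DataFrame({
    "title": ["Hamlet", "Hamlet", "Hamlet", "Macbeth"],
    "year": [1948, 1954, 1959, 1955],
    "actor": ["Actor A", "Actor B", "Actor C", "Actor D"],
    "character": ["Hamlet", "Hamlet", "Hamlet", "Macbeth"],
})

# Build one boolean filter per rule, then combine them with &.
in_1950s = cast["year"].between(1950, 1959)   # inclusive on both ends
played_hamlet = cast["character"] == "Hamlet"

hamlets = cast[in_1950s & played_hamlet].sort_values("year")
actor_count = hamlets["actor"].nunique()

print(hamlets)
print(actor_count)
```

Naming each filter separately keeps the rules readable, and adding another condition only takes one more line.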

You’ll be able to do deep time series analysis and match data points with their temporal context to the exact second. You can track variations in stock prices to their finest detail.
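
As a sketch of what second-level time series work can look like, the example below indexes a handful of made-up price ticks by timestamp, selects a time window, and then summarizes the ticks per minute. The prices and timestamps are invented.

```python
import pandas as pd

# Hypothetical price ticks, indexed by the second they were recorded.
ticks = pd.DataFrame(
    {"price": [100.0, 100.5, 99.8, 101.2]},
    index=pd.to_datetime([
        "2015-06-01 09:30:00",
        "2015-06-01 09:30:20",
        "2015-06-01 09:30:59",
        "2015-06-01 09:31:05",
    ]),
)

# Select every tick between two timestamps (both ends are included).
first_minute = ticks.loc["2015-06-01 09:30:00":"2015-06-01 09:30:59"]

# Resample to one value per minute, keeping the last price seen in each minute.
per_minute = ticks["price"].resample("1min").last()

print(first_minute)
print(per_minute)
```

The same pattern scales from minutes to days or months by changing the frequency string passed to `resample`.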

You’ll be able to group data points by their context using the groupby() function. This will allow you to track changes in stock prices based on the day of the week, or the industry they’re in.
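
A minimal sketch of both groupings, using four invented rows of daily price changes. The column names are assumptions made for this example.

```python
import pandas as pd

# Hypothetical daily price changes for stocks in two industries.
prices = pd.DataFrame({
    "date": pd.to_datetime(["2015-06-01", "2015-06-02", "2015-06-08", "2015-06-09"]),
    "industry": ["tech", "energy", "energy", "tech"],
    "change": [1.0, -0.5, 3.0, 0.5],
})

# Derive the day of the week from the date, then average the change per weekday.
prices["weekday"] = prices["date"].dt.day_name()
by_weekday = prices.groupby("weekday")["change"].mean()

# The same call groups by any other column, such as industry.
by_industry = prices.groupby("industry")["change"].mean()

print(by_weekday)
print(by_industry)
```

You can swap `mean()` for `sum()`, `median()` or `count()` depending on the question you are asking.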

If you need any help getting started, check out the Pandas Cookbook, written by Julia Evans. Get started with a few projects and you’ll quickly get the hang of it.

Perform in-depth analysis

Now that you have your data processed, you can start doing numeric analysis with NumPy. You can do scientific computing and calculation with SciPy. You can access a lot of powerful machine learning algorithms with the scikit-learn code library. scikit-learn offers an intuitive interface that allows you to tap into the power of machine learning without many of its complexities.
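
To give a flavor of numeric analysis, here is a sketch that fits a straight line to a few invented data points using only NumPy. It is a deliberately simple stand-in: for real modeling you would more likely reach for scikit-learn’s estimators, which this example does not use.

```python
import numpy as np

# Hypothetical data that follows y = 2x + 1 exactly.
x = np.array([1.0, 2.0, 3.0, 4.0])
y = np.array([3.0, 5.0, 7.0, 9.0])

# Least-squares fit of a degree-1 polynomial (a straight line).
slope, intercept = np.polyfit(x, y, deg=1)

# Use the fitted line to predict y for a new x.
predicted = slope * 5.0 + intercept

print(slope, intercept, predicted)
```

Because the sample points sit exactly on a line, the fit recovers a slope of about 2 and an intercept of about 1, up to floating-point rounding.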

Visualizing data

The IPython Notebook that comes with Anaconda has a lot of powerful options to visualize data. You can use the Matplotlib library to generate basic graphs and charts from the data embedded in your Python. If you want more advanced graphs or better design, you could try Plot.ly. This handy data visualization solution takes your data through its intuitive Python API and spits out beautiful graphs and dashboards that can help you express your point with force and beauty.

You can also use the nbconvert tool to turn your Python notebooks into HTML documents. This can help you embed snippets of nicely formatted code into interactive websites or your online portfolio. Many people have used this tool to create interactive books and online tutorials on how to learn Python.

The benefits of Python

Indeed.com notes that the average salary of those that have Python in their job description ranges around $102,000. Python is the most popular programming language taught in universities: the community of Python programmers is only going to be larger in the years to come. Using Python, you can solve a data problem end-to-end, and you’ll be able to do so much more than data science if you want to explore other computer science problems.

Takeaway: Python is a powerful, versatile language that programmers can use for a variety of tasks in computer science. Learning Python will help you develop a broad data science toolkit, and you can pick it up pretty easily even as a non-programmer. This introduction to data science with Python will take you where you need to go.

R

R is slightly more popular than Python in data science, with 43 percent of data scientists using it in their tool stack compared to the 40 percent who use Python. It’s a programming environment and language dedicated to solving statistical problems. It is much more focused on the domain than the versatile Swiss-Army knife that Python is. R specializes in solving data science problems.

R can be difficult to get into if you have experience with a previous programming language: it isn’t constructed by computer scientists for computer scientists. One programmer has documented his struggles and built a tutorial to help others out with some of the unconventional quirks of R, something you should be aware of if you have familiarity with other programming languages. Unlike Python which is built to have a simple syntax, R has a tricky syntax with a bit of a steep learning curve.

UCLA has a great resource to get you started in R and our learning path in data analysis offers several more resources. This introduction to R is comprehensive in going from A to Z for R in data science, and you’ll be able to see what a sample session looks like. You’ll find a healthy StackOverflow community for R in case you bump into any problems.

An introduction to R and data science

R really shines when it comes to building statistical models and displaying the results. It is an environment where a wide variety of statistical and graphing techniques, from time series analysis to classification, can be applied. Every scientist has had to build regressions and test for statistical significance of results, and R is perfectly suited for visualizing those models, from bell curves to Bayesian analysis.

R is a staple in the data science community because it is designed explicitly for data science needs. The community contributes packages that, similar to Python, can extend the core functions of the R codebase so that it can be applied to very specific problems such as measuring financial metrics or analyzing climate data.

Collect raw data

You can import data from Excel, CSV, and from text files into R. Files built in Minitab or in SPSS format can be turned into R dataframes as well. While R might not be as versatile at grabbing information from the web like Python is, it can handle data from your most common sources.

Many modern packages for R data collection have been built recently to address this problem. Rvest will allow you to perform basic web scraping, while magrittr’s pipe operator lets you chain together the steps that clean up and parse the information. Together, these packages play a role similar to the requests and beautifulsoup libraries in Python.

Process the data

R is a flexible environment for adding new columns of information, reshaping information, and transforming the data itself. This tutorial goes into the exact steps you’ll need to perform to get clean data. Many of the newer R libraries such as reshape2 allow you to play with different data frames and make them fit the criterion you’ve set.

Explore the data

R was built to do statistical and numerical analysis of large data sets, so it’s no surprise that you’ll have many options while exploring data with R. You’ll be able to build probability distributions, apply a variety of statistical tests to your data, and use standard machine learning and data mining techniques.

Basic R functionality encompasses the basics of analytics, statistical processing, optimization, random number generation, signal processing, and machine learning. For some of the heavier work, you’ll have to rely on third-party libraries.

Perform in-depth analysis

In order to do specific analyses, you’ll sometimes have to rely on packages outside of R’s core functionality. There are plenty of packages out there for specific analyses such as the Poisson distribution and mixtures of probability laws.

Visualizing data

R was built to do statistical analysis and demonstrate the results. It’s a powerful environment suited to scientific visualization with many packages that specialize in graphical display of results. The base graphics module allows you to make all of the basic charts and plots you’d like from data matrices. You can then save these plots in image formats such as JPG, or you can save them as separate PDFs. You can use ggplot2 for more advanced plots such as complex scatter plots with regression lines.

The benefits of R

Takeaway: R is a programming environment specifically designed for data analysis that is very popular in the data science community. You’ll need to understand R if you want to make it far in your data science career. This interactive tutorial will help.

Python vs R

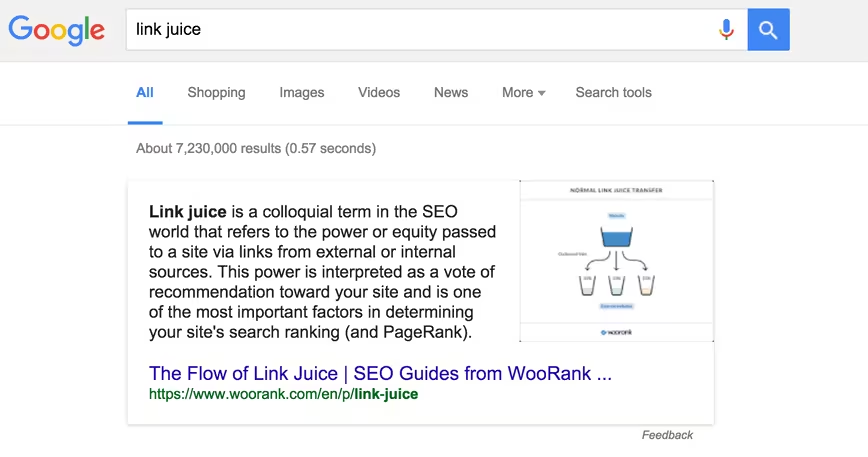

You’ll see a lot of content that compares and contrasts R with Python. This analysis by Datacamp on the differences is often regarded as the definitive take on the topic.

Usage

Python, as we noted above, is often used by computer programmers since it is the Swiss Army knife of programming languages, versatile enough that you can build websites and do data analysis with the same language.

R is primarily used by researchers and academics who don’t necessarily have a background or knowledge of computer science.

Syntax

Python has a nice clear “English-like” syntax that makes debugging and understanding code easier, while R has unconventional syntax that can be tricky to understand, especially if you have learned another programming language.

Learning curve

R is slightly harder to pick up, especially since it doesn’t follow the normal conventions other common programming languages have. Python is simple enough that it makes for a really good first programming language to learn.

Popularity

Python has consistently been among the top 5 most popular programming languages on GitHub, a common repository of code that tracks usage habits across all programmers quite accurately, while R typically hovers below the top 10.

Focus on data science

Python is a general-purpose language, and there is less focus on data analysis packages than in R. Nevertheless, there are very cool options for Python such as Pandas, a data analysis library built just for it.

Salary

The average data scientist who uses R will receive a salary of $115k compared to the $102k they would earn with Python.

Conclusion

Python is versatile, simple, easier to learn, and powerful because of its usefulness in a variety of contexts, some of which have nothing to do with data science. R is a specialized environment that looks to optimize for data analysis, but which is harder to learn. You’ll get paid more if you stick it out with R rather than working with Python.

Yet, that’s only one way to frame the debate: seeing it as a zero-sum game. The reality is that learning both tools and using them for their respective strengths can only improve you as a data scientist. Versatility and flexibility are traits shared by any data scientist at the top of their field. 23 percent of data scientists surveyed by DataCamp used both R and Python.

O’Reilly found in their survey of data scientists that using many programming tools is correlated with increased salary. While those who work in R may be paid more than those who work in Python, those who used 15 or more tools made $30k more than those who used just 10 to 14.

Takeaway: The Python vs R debate confines you to one programming language. You should look beyond it and embrace both tools for their respective strengths. Using more tools will only make you better as a data scientist.
