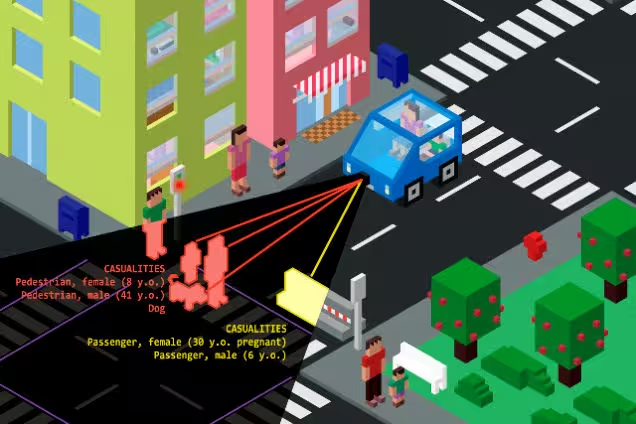

A recent study detailed some rather perplexing problems for autonomous car manufacturers.

When polled, people wanted the self-driving car’s programming to minimize casualties during a crash, even if it meant killing the passengers inside the car. But here’s the rub: although this is how they claimed they wanted the car to be programmed, they also didn’t want to ride in it if it were.

People are weird.

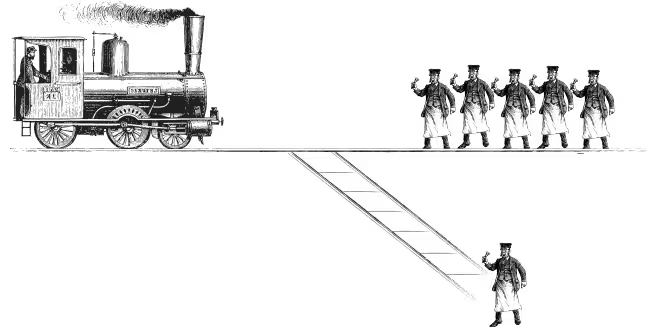

It all goes back to an Ethics 101 exercise known as ‘The Trolley Problem.’ The Trolley Problem asks what you’d do if a runaway train were on a track and about to mow down five people. You could switch the track, which would allow the train to kill only one person, or you could remove yourself from the equation, ensuring five people die but eliminating your involvement.

What do you do?

These are the types of questions the Googles and Teslas of the world are faced with. When an accident is unavoidable, what should the car do in response? Should it mow down a pregnant mother pushing a stroller with twins, or is it better for the driver to end up face-first in a windshield as the car plows into a Bed Bath and Beyond?

There’s no right answer, but researchers are quickly finding that there are a lot of wrong answers… even the ones the people polled say are right. If you’re confused, that’s good, because these answers should be tough. Giving programmers the ability to control the outcome in a crash is a heavy burden that needs to be better understood before implementing code that could kill innocent pedestrians, the car’s driver, or all of the above.

For now, rest easy knowing that your autonomous car, while willing to kill you, is incredibly unlikely to do so. Accidents are rare, and as we continue to collect data, perfect the technology and tweak the artificial intelligence, the chances of your car going all ‘Christine’ on you are becoming increasingly slim.

via Gizmodo
