A team of researchers from UC San Diego recently came up with a relatively simple method for convincing fake video-detectors that AI-generated fakes are the real deal.

AI-generated videos called “Deepfakes” started flooding the internet a few years back when bad actors realized they could be used to exploit women and, potentially, spread political misinformation. The first generation of these AI systems produced relatively easy-to-spot fakes, but further development has led to fakes that are harder than ever to detect.


In response, numerous researchers have developed AI-powered methods for detecting Deepfakes and similar AI-generated fake videos. Unfortunately, according to the UC San Diego team, they’re all susceptible to its adversarial attack methods.

Per the team’s research paper:

In this work, we demonstrate that it is possible to bypass such detectors by adversarially modifying fake videos synthesized using existing Deepfake generation methods. We further demonstrate that our adversarial perturbations are robust to image and video compression codecs, making them a real-world threat.

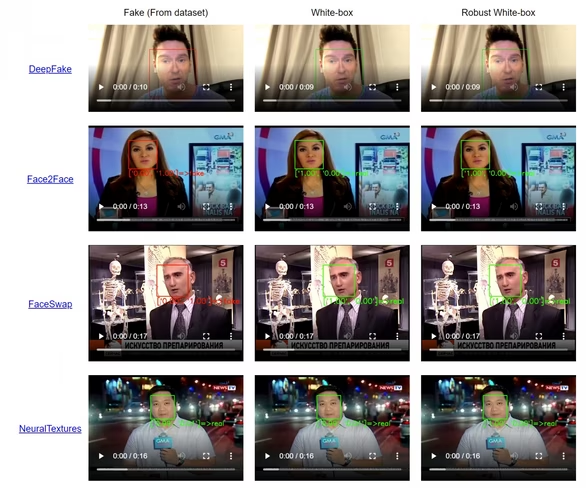

The first video below shows a Deepfake detector working normally; the second shows what happens when the researchers infuse the video with adversarial information designed to fool the detectors:

Fake video detectors examine each frame of a video for manipulation — it’s impossible to make a manipulated frame appear natural on the surface. The UC San Diego team developed a process by which they inject adversarial information into each frame, thus causing the detectors to think the video is “normal.”
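To make the per-frame idea concrete, here is a minimal sketch of a gradient-sign perturbation against a toy detector. It is not the team's actual method or code: the logistic-regression "detector", the function names (`detector_score`, `perturb_frame`, `attack_video`) and the parameters (`eps`, `step`, `iters`) are all assumptions made for illustration, and a real detector would be a deep neural network operating on full images.

```python
import math


def detector_score(frame, weights, bias):
    """Toy detector (hypothetical): probability that a frame is fake."""
    z = sum(w * p for w, p in zip(weights, frame)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def perturb_frame(frame, weights, bias, eps=0.05, step=0.01, iters=20):
    """Nudge pixels until the toy detector scores the frame below 0.5.

    Each pixel may move at most `eps` from its original value, so the
    change stays small. Assumes pixel values lie in [0, 1].
    """
    adv = list(frame)
    for _ in range(iters):
        if detector_score(adv, weights, bias) < 0.5:
            break  # the detector now labels the frame "real"
        for i, w in enumerate(weights):
            # For this linear model the gradient of the score with respect
            # to pixel i has the same sign as w, so step against that sign.
            direction = (w > 0) - (w < 0)
            p = adv[i] - step * direction
            p = min(max(p, frame[i] - eps), frame[i] + eps)  # stay within eps
            adv[i] = min(max(p, 0.0), 1.0)  # stay a valid pixel value
    return adv


def attack_video(frames, weights, bias, **kwargs):
    """Apply the same perturbation procedure to every frame independently."""
    return [perturb_frame(f, weights, bias, **kwargs) for f in frames]


if __name__ == "__main__":
    # Made-up 64-pixel "frame" and detector weights, for illustration only.
    weights = [0.5 if i % 2 == 0 else -0.5 for i in range(64)]
    bias = 1.0
    frame = [0.5] * 64
    adv = perturb_frame(frame, weights, bias)
    print("score before:", detector_score(frame, weights, bias))
    print("score after: ", detector_score(adv, weights, bias))
    print("largest pixel change:", max(abs(a - f) for a, f in zip(adv, frame)))
```

The point of the `eps` bound is that the score can flip while every pixel stays close to its original value. This sketch ignores compression entirely, which the researchers say their perturbations survive.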

The attack works against both white-box and black-box detectors. In the white-box case, the attacker has full access to the detector, including its architecture and parameters; in the black-box case, the attacker can only query the detector and observe its output. And, according to the researchers, it’s robust to compression and other manipulations.
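The practical difference between the two settings is where the attacker gets the gradient from. The sketch below shows one simple way a query-only attacker could estimate it, using finite differences on the detector's output score. This is an illustrative assumption, not necessarily the estimator used in the paper, and every name here (`make_black_box`, `estimate_gradient_sign`, `black_box_attack`) is hypothetical.

```python
import math


def make_black_box(weights, bias):
    """Hypothetical detector that exposes only a fake-probability score.

    The weights are hidden inside the closure; the attacker only ever
    calls query(frame) and reads the returned number.
    """
    def query(frame):
        z = sum(w * p for w, p in zip(weights, frame)) + bias
        return 1.0 / (1.0 + math.exp(-z))
    return query


def estimate_gradient_sign(query, frame, h=1e-3):
    """Estimate the sign of d(score)/d(pixel) using two queries per pixel."""
    signs = []
    for i in range(len(frame)):
        up = list(frame)
        up[i] += h
        down = list(frame)
        down[i] -= h
        diff = query(up) - query(down)
        signs.append((diff > 0) - (diff < 0))
    return signs


def black_box_attack(query, frame, eps=0.05, step=0.01, iters=20):
    """Gradient-sign attack that relies only on detector queries."""
    adv = list(frame)
    for _ in range(iters):
        if query(adv) < 0.5:
            break  # the detector now labels the frame "real"
        signs = estimate_gradient_sign(query, adv)
        for i, s in enumerate(signs):
            p = adv[i] - step * s
            p = min(max(p, frame[i] - eps), frame[i] + eps)  # stay within eps
            adv[i] = min(max(p, 0.0), 1.0)  # stay a valid pixel value
    return adv


if __name__ == "__main__":
    # Made-up detector and frame, for illustration only.
    hidden_weights = [0.5 if i % 2 == 0 else -0.5 for i in range(64)]
    query = make_black_box(hidden_weights, 1.0)
    frame = [0.5] * 64
    adv = black_box_attack(query, frame)
    print("score before:", query(frame))
    print("score after: ", query(adv))
```

The trade-off is cost: this version needs two queries per pixel per step, which is why query-efficient estimators matter for real images with many thousands of pixels.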

This research clearly indicates that state-of-the-art fake video detectors are severely limited. In essence, the team concludes that a bad actor with partial domain knowledge could fool all of the current Deepfake detectors.
