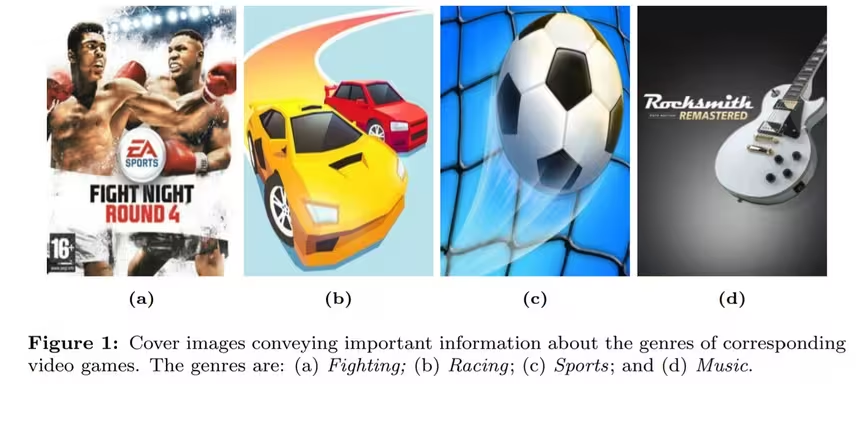

Have you ever seen the promo art or box cover for a video game and thought “what the hell is this even about?” Well, wonder no more. A pair of researchers have combined cutting-edge image recognition and natural language processing to create an AI system for video game genre classification.

Yuhang Jiang and Lukun Zheng, in their recently published pre-print research paper “Deep learning for video game genre classification,” describe the creation of a large training database and its use in developing a novel classification system.

Per the authors:

We created a large dataset of 50,000 video games including game cover images, description text, title text, and genre information. This dataset can be used for a variety of studies such as text recognition from images, automatic topic mining, and so on and will be made available to the public in the future. In addition, we evaluated several state-of-the-art image-based models and text-based models. We also developed a multimodal model by concatenating features from a image modality and a text modality.

Once the database was compiled, the researchers used it to train text and image recognition models. The team then tested each model to determine which one worked best. Unsurprisingly, they found that the text-based models fared better than the image-based ones and that hybrid models using both did best.
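For readers curious what "concatenating features" from two modalities looks like in practice, here is a minimal sketch. It is not the authors' code: the genre list, the vector sizes, and the function names `concat_features` and `classify` are all hypothetical, and the weights are random rather than trained. It only illustrates the general idea of joining an image feature vector and a text feature vector end to end before handing the result to a classifier.

```python
import math
import random

# Hypothetical label set; the paper's actual genre list is not reproduced here.
GENRES = ["action", "puzzle", "sports"]


def concat_features(image_features, text_features):
    """Fuse two modalities by joining their feature vectors end to end."""
    return list(image_features) + list(text_features)


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(fused, weights, biases):
    """Apply one linear layer, then softmax, over the fused features."""
    scores = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(scores)


rng = random.Random(0)

# Stand-ins for embeddings produced by an image model and a text model.
image_features = [rng.random() for _ in range(4)]
text_features = [rng.random() for _ in range(3)]

fused = concat_features(image_features, text_features)

# Untrained, random parameters: one weight row and one bias per genre.
weights = [[rng.uniform(-1, 1) for _ in fused] for _ in GENRES]
biases = [0.0] * len(GENRES)

probs = classify(fused, weights, biases)
predicted = GENRES[probs.index(max(probs))]
print(len(fused), predicted)
```

In a real system the two input vectors would come from trained image and text networks, and the classifier weights would be learned from the labeled dataset rather than drawn at random.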


Quick take: Video game genre classification is a difficult problem for AI researchers. Unlike music or movies, video games are interactive, and that adds an extra dimension any genre definition has to account for.

The ability to accurately automate game classification could be a boon for the industry. Such a system could make it easier for players to find games they might like and for storefronts to properly organize their catalogs. But perhaps most importantly, it’s easy to imagine this classification system integrating with recommendation algorithms and other AI-based data-gathering-and-execution services.

Read the whole paper here for more information.
