In today’s connected world, you’d be hard-pressed to find a population (disregarding technophobes and lost tribes) that actively shuns digitally mediated activities. Unfortunately for those who do align themselves with Luddism, advances in mobile computing (and digital tech in general) appear to be exponential. Technologically enhanced sources of information are all around us, from our PCs to pocket-sized smart gadgets.

In the digital sci-fi series “H+”, wireless implants are routinely injected into a population that seems comfortable with the idea of invasively combining biology and technology. The characters of H+ willingly insert the equivalent of our current mobile device tech into their bodies, transforming themselves into mobile connected entities. Although this may seem like a nightmare scenario to some, many techno-evangelists would welcome the chance to dispense with handheld gadgets entirely and embrace a model in which humans are perpetually, and irrevocably, connected.

The H+ World: Fiction imitating life?

In the first episode of the H+ digital series, we watch a couple driving through an airport parking lot. The passenger views the interior of the car and the external environment through a Heads-Up Display interface. The interface shows transparent icons with scrolling titles such as “Productivity”, “Utilities” and “Communication”, which the user flicks between rapidly. At first, the icons and text seem to be integrated into the car’s windscreen in an Augmented Reality setup. However, when the icons stay floating in the passenger’s field of vision even after she turns her head away from the windscreen, it becomes obvious that the display is embedded in her visual field itself. This system is an advanced (and as yet unrealised) example of what, in terms of current technology, is termed a Brain-Computer Interface (BCI).

H+ users engage with a wireless network directly via their central nervous system: a microscopic implanted device allows the characters to perform a wide array of tasks purely by “thinking” them into action.

In the gradually revealed dystopia of H+, characters must deal with catastrophic consequences springing from the combination of hardware and software into such an advanced platform. Although this type of integrated system could allow for seamless connectivity, we have yet to realise such an arrangement in the “real” world. We do, however, have two technologies that closely relate to this vision of a humanity perpetually connected through ubiquitous means. The first is Wearable Computing, and the second is BCIs in the style pioneered by technophile and guru Steve Mann.

Wearable computing: Google Glass

Wearable Computing is a discipline devoted to exploring and creating devices that can either be worn directly on the body, or incorporated into a user’s clothing or accessories. The MIT Computing Webpage describes wearable computing as aiming:

“…to shatter this myth of how a computer should be used. A person’s computer should be worn, much as eyeglasses or clothing are worn, and interact with the user based on the context of the situation. With heads-up displays, unobtrusive input devices, personal wireless local area networks, and a host of other context sensing and communication tools, the wearable computer can act as an intelligent assistant, whether it be through a Remembrance Agent, augmented reality, or intellectual collectives.”

The Wearable Computing field has grown markedly since the early 1960s. One example from that era was a system designed specifically for roulette prediction, which combined a tiny analogue computer with microswitches embedded in a player’s shoe. In the 1970s, inventor Alan Lewis continued this trend by building a digital camera-case computer that could predict roulette outcomes. Fortunately, the field also expanded in this decade to include more altruistically oriented devices: in 1977, C.C. Collins of the Smith-Kettlewell Institute of Visual Sciences produced a prosthetic vest for the blind. This early wearable used a head-mounted camera whose images were converted into tactile patterns via a grid embedded in the vest, allowing a visually impaired wearer to “see”.

Wearable Computing progress continued in the 1980s, with Steve Mann (whom we’ll look at in detail later) creating a computer housed in a metal-framed backpack, with an accompanying head-mounted camera, that controlled photographic equipment. In 1993, Thad Starner developed a customized general-purpose computer designed specifically to be reconfigurable. Starner has famously worn this device since the 1990s; it was dubbed “The Lizzy” due to perceived parallels with the original Model T Ford, which was nicknamed the “Tin Lizzie”.

There have been significant advancements in Wearable Computing over the last two decades, including smartwatches such as IBM’s Linux Watch and MetaWatch’s Strata (currently on Kickstarter), which connects “…with phone apps to see calls, SMS, workouts, email, FB, Twitter, calendar events, weather & more”. Other wearables include the Pebble smartwatch, exercise-monitoring devices such as Jawbone’s UP and Nike’s FuelBand, the Q-Belt “Buckle” Integrated Computer and Erik De Nijs’ keyboard input jeans.

Much of the current interest in wearable tech lies in the rapidly growing field of Head-Mounted Displays. Even though Innovega’s iOptik Augmented Reality/Internet-enabled contact lenses caused quite a stir in wearable tech circles earlier this year, that interest seems to have been eclipsed by developments in computer-enabled headwear such as Google Glass, Sony’s HMZ-T2 3D display, TTP eyewear, Vuzix video eyewear, Vergence Labs’ Social Video Sharing Glasses, and the Oculus Rift.

More eyewear than traditional headset, Google Glass nonetheless exemplifies just how far wearable computing has come since its early days. The Google Glass development team describes the project on its Google+ page:

“We think technology should work for you—to be there when you need it and get out of your way when you don’t. A group of us from Google[x] started Project Glass to build this kind of technology, one that helps you explore and share your world, putting you back in the moment.”

When Project Glass was first revealed by the Google X research team, only vague details were provided, with an emphasis on concept design rather than practical specifications. In an attempt to cut through the hype and highlight the project’s concrete potential, Sergey Brin (who heads the Google X division) took Google Glass to the Diane von Furstenberg show at New York Fashion Week 2012. During the show, Brin, flanked by runway models and Hollywood celebrities, showed off colour-matched, minimalist versions of the eyewear, which can take photos in response to specific voice commands and include a time-lapse mode that automatically snaps a photo every 10 seconds.

Although Google Glass is technically still a prototype, the device was recently let out of the lab and into the hands of the media, via Spencer Ante of the Wall Street Journal. The results were mixed, with Ante emphasizing the awkwardness of the software and the absence of messaging/phone capabilities, map functions and access to geolocation data. Such functionality seems to have been sidelined in the headlong rush towards media exposure, possibly timed to pre-emptively chip away at the hype generated by the latest Apple iPhone launch.

Additional criticism levelled at Google Glass concerns the lack of a dual visual overlay: the eyewear relies on a camera and a tiny display window confined to the upper part of the right-hand frame (there are no lenses) and positioned outside the user’s direct line of sight. A user must constantly reorient their gaze towards this window, rather than having information superimposed directly over their field of vision. One eyewear-based wearable computer that attempts to tackle this problem comes from a company called The Technology Partnership (TTP). It looks like standard eyewear, complete with traditional frames and (what appear to be) clear lenses. In fact, the lenses reflect a projected image back to the viewer at the centre of the lens, which ensures that users maintain their natural gaze rather than repeatedly redirecting it to access the augmented information.

Although the TTP glasses seem to offer a solution to the asymmetrical-vision and gaze-redirection problems that Google Glass use may cause, they, like most wearable computer proofs of concept, still have their fair share of limitations. Uncomfortable display formats and clunky manoeuvrability seem to plague most wearable prototypes, making gaps in everyday practicality all the more problematic.

Another issue that wearable computing needs to address, preferably before mainstream commercial production kicks into gear, is reliable network availability. If users are forced to deal with geo-restrictions or patchy coverage prone to dropouts (think of the coverage provided by early LTE networks), early adopters may be understandably unimpressed.

Other concerns centre on the long-term health impacts of such technology, which could be addressed by longitudinal studies examining possible detrimental sensory effects. For instance, if the commercial version of Google Glass still doesn’t offer dual vision and continues to restrict viewing to a specific visual region, what impact could that have on sensory development, especially in children?

On the other hand, Wearable Computing does offer substantial benefits for users keen to adopt it, including speedy data retrieval through a sense of “intrinsic connectedness”, and the ability to maintain social bonds through lightning-fast content sharing. The idea of seamless online access, without the sense of removal that comes from having to physically handle a device, is a very attractive one (who needs haptic controls when an eye-tracked glance will do?). The potential for enhancing existing education models is enormous, as is the value of such immediacy to stockbrokers, emergency response teams and sports-replay junkies.

Steve Mann and ‘Bearable Computing’

Steve Mann has been an advocate of Wearable Computing since the 1970s, but he has pushed the concept far beyond tech that is merely placed on the human body: Steve Mann is, instead, a self-proclaimed cyborg. He’s been instrumental in the development of what he terms “Bearable Computing”. Such bearable (or body-borne) devices can be worn on the body or incorporated into the body itself, much like the implants injected into the H+ characters.

Mann’s use of the term cyborg takes wearable technology a step further towards the realm of the Brain-Computer Interface (BCI). In a 1997 paper describing his “WearCam” and “WearComp” concepts, Mann says:

“We are entering a pivotal era in which we will become inextricably intertwined with computational technology that will become part of our everyday lives in a much more immediate and intimate way than in the past. The recent explosion of interest in so-called “wearable computers” is indicative of this general trend…‘personal imaging’, originated as a computerized photographer’s assistant…it later evolved into a more diverse apparatus and methodology, combining machine vision and computer graphics, in a wearable tetherless apparatus, useful in day-to-day living. Personal imaging, at the intersection of art, science, and technology, has given rise to a new outlook on photography, videography, augmented reality, and ‘mediated reality’, as well as new theories of human perception and human-machine interaction.”

Image Credit: Steve Mann/WearCam

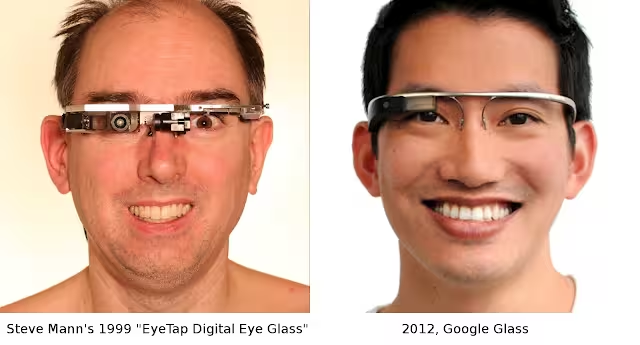

One of Mann’s recent projects is his EyeTap Digital Eye Glasses, which bear a striking resemblance, both aesthetically and functionally, to Google Glass.

Image Credit: Steve Mann

These EyeTap Glasses can be fixed to Mann’s skull and connect to his brain via a BCI. This allows Mann to record a permanent visual log of his life, a practice known as lifelogging, which recently led to a difficult situation for Mann and his family when they attempted to dine at a McDonald’s in Paris. According to a post on Mann’s blog outlining the events, restaurant staff allegedly assaulted Mann by trying to remove his attached glasses after asking him to stop filming.

Even after being shown documentation explaining the purpose of the physically attached hardware, the employees still attempted to remove the device. In doing so, the employees effectively photographed themselves throughout the altercation, providing visual evidence to back up Mann’s claims.

The ordeal undergone by Mann and his family highlights several potential problems with BCIs, including privacy, surveillance and trade-secrecy concerns. Corporations understandably take the idea of unauthorised observation via BCIs very seriously, whereas individuals may have health-related fears about integrating electronic and mechanical components into living tissue, such as leakage, corrosion or implant rejection.

Consumers fitted with BCIs may also have legitimate concerns about the security of logged data, especially of a personal or intimate nature (it may be all too easy to forget that information is being recorded via an implanted device). Others, meanwhile, may object to being constantly documented.

Image Credit: Emotiv

Emotiv describes its EPOC headset as follows:

“Based on the latest developments in neuro-technology, Emotiv has developed a revolutionary new personal interface for human computer interaction. The Emotiv EPOC is a high resolution, neuro-signal acquisition and processing wireless neuroheadset. It uses a set of sensors to tune into electric signals produced by the brain to detect player thoughts, feelings and expressions and connects wirelessly to most PCs.”

The potential for such a sensor-based headset seems vast, especially in gaming and health services. However, any tech that melds neurological activity with hardware carries associated risks, such as identity theft, illegal information extraction and data saturation. Thankfully, the potential benefits of BCIs also seem high, including memory enhancement, simulations benefitting students, and replay technology assisting legal proceedings.

BCIs may also reduce response times (the symbiotic meld of organic tissue with mechanical and electronic components could act to reduce lag). Another positive of BCI use would be escaping the gadget hype cycle by removing external design altogether. For instance, if the Apple iPhone 5 had been built and deployed as a BCI, the “delight fatigue” that threatens to overwhelm previously reliable Apple devotees intent on snapping up every new version just wouldn’t apply. Also, if the temptation to remotely disable users’ smartphone cameras ever gets too much for Apple (now that the patent has been granted), a BCI version could prove much harder to disable: would Apple want to admit to even temporarily blinding its customers?

More mobile than human

“‘Man-computer symbiosis’ is a subclass of man-machine systems. There are many man-machine systems. … The hope is that, in not too many years, human brains and computing machines will be coupled together very tightly, and that the resulting partnership will think as no human brain has ever thought and process data in a way not approached by the information-handling machines we know today.” – J.C.R. Licklider, 1960, “Man-Computer Symbiosis”.

Through wearable technology or bio-implanted fusions of the organic and mechanical (just as in the fictional H+ universe), the future of computing seems inextricably linked to mobility. Are you prepared for a world where your mobile device melds directly with your cortex, or do you assume that functional wearable computers and BCIs will remain firmly in the realm of fiction?

Featured image credit: AFP/Getty Images
