Caitlin Kalinowski spent 16 months building OpenAI’s physical AI programme. On Saturday, she said the company moved too fast on something too important.

The week that began with Anthropic being blacklisted by the Pentagon and ended with OpenAI taking its contract has now claimed OpenAI’s most senior hardware executive.

Caitlin Kalinowski, who joined OpenAI in November 2024 to lead its robotics and consumer hardware division, announced her resignation on Saturday on X. Her statement was short, direct, and more candid than anything OpenAI itself has said about the deal.

“AI has an important role in national security,” she wrote. “But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got.”

In a subsequent post, she was more precise about the nature of the complaint. “It’s a governance concern first and foremost,” she wrote. “These are too important for deals or announcements to be rushed.”

Kalinowski was careful to frame her departure in personal terms. “This was about principle, not people,” she wrote. “I have deep respect for Sam and the team.”

That last note carries some weight: Sam Altman has himself acknowledged that the Pentagon deal was “definitely rushed,” and that the rollout produced significant backlash.

What Kalinowski’s resignation adds to that admission is a name and a title: the most senior person at OpenAI whose job was to bring AI into physical systems has decided that the process by which it will now enter weapons systems and surveillance infrastructure was not good enough.

What the deal involved

The sequence of events that led here unfolded over roughly a week. Anthropic, which had been the only AI company cleared to operate on the Pentagon’s classified networks following a $200 million contract awarded in July 2025, had spent several weeks in tense negotiations with the Defense Department over the terms of continued use.

Anthropic’s position was that its models should not be deployed for mass domestic surveillance or fully autonomous weapons. The Pentagon, under Defense Secretary Pete Hegseth, insisted on language permitting use “for all lawful purposes,” without specific carve-outs.

On 28 February, with negotiations collapsed, President Trump directed all federal agencies to stop using Anthropic’s technology and called the company “radical woke” on Truth Social.

Hegseth formally designated Anthropic a supply-chain risk to national security, a classification previously reserved for foreign adversaries, and one that requires DoD vendors and contractors to certify they do not use Anthropic’s models.

Hours later, Altman posted on X that OpenAI had reached its own agreement to deploy its models on the Pentagon’s classified network.

OpenAI’s stated position is that its deal includes the same core protections Anthropic sought: no mass domestic surveillance, no autonomous weapons.

The company published a blog post outlining its approach and arguing that its cloud-only deployment architecture, retained safety stack, and contractual provisions, anchored to existing US law rather than bespoke prohibitions, make its agreement more robust than any previous classified AI deployment, including Anthropic’s.

What Kalinowski’s departure means for OpenAI

Kalinowski’s career before OpenAI was unusual in its breadth. She spent nearly six years at Apple as a technical lead on the Mac Pro, MacBook Air, and original unibody MacBook Pro programmes, before moving to Meta’s Oculus division, where she led virtual reality hardware for more than nine years.

Her final role at Meta was heading Project Nazare, later named Orion, the augmented reality glasses initiative Meta unveiled as a prototype in September 2024 and described as the most advanced AR glasses ever made.

She joined OpenAI two months later, in November 2024.

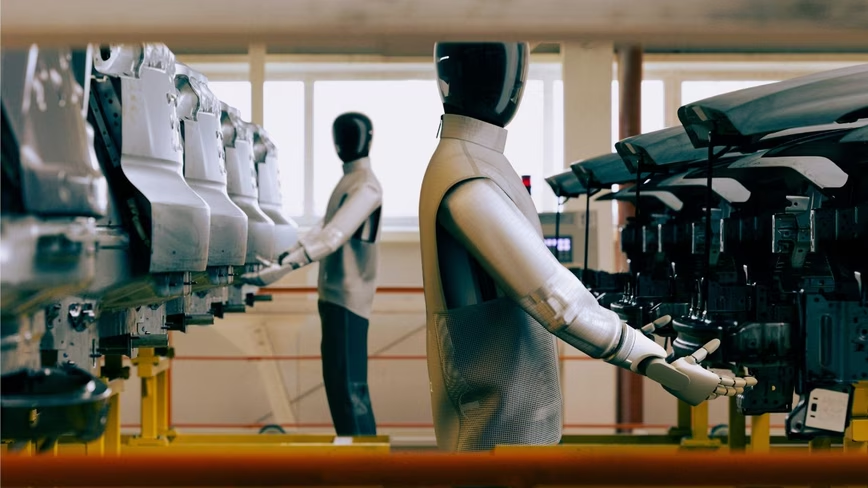

During her 16 months at OpenAI, Kalinowski built out what the company describes as its physical AI programme, including a San Francisco lab employing roughly 100 data collectors training a robotic arm on household tasks.

Her departure leaves that effort without its most experienced hardware leader at a moment when OpenAI has staked considerable ambition on moving beyond software.

OpenAI confirmed her resignation on Saturday and said in a statement: “We believe our agreement with the Pentagon creates a workable path for responsible national security uses of AI while making clear our red lines: no domestic surveillance and no autonomous weapons.

“We recognise that people have strong views about these issues and we will continue to engage in discussion with employees, government, civil society, and communities around the world.”

The wider picture

The fallout from OpenAI’s Pentagon deal has not been limited to internal dissent. ChatGPT uninstalls reportedly surged 295% following the announcement, and Anthropic’s Claude climbed to the number-one position in the US App Store, displacing ChatGPT. As of Saturday afternoon, the two apps remained first and second, respectively.

What Saturday’s resignation of the company’s robotics chief confirms is that the deal’s costs for OpenAI are still being counted. Altman wanted to de-escalate a confrontation between the government and the AI industry. He may yet have succeeded. Whether the price of that de-escalation, in talent, in trust, and in the specific question of who was right about the guardrails, was worth paying is a question that will take longer to answer.
