OpenAI has quietly unveiled the latest incarnation of its headline-grabbing text generator: GPT-3.

The research lab initially said its predecessor’s potential to spread disinformation made it too dangerous to share. The decision led terrified journalists to warn of impending robot apocalypses — generating a lot of helpful hype for GPT-2.

Now, OpenAI has unveiled its big brother. And it’s enormous. The language model has 175 billion parameters, more than 100 times the 1.5 billion in GPT-2, which was itself considered gigantic on its release last year.

The research paper also dwarfs GPT-2’s, growing from 25 to 72 pages. We haven’t got through the whole thing yet, but after flicking through it, we’ve spotted some striking stuff.

Bigger and better?

GPT-3 can perform an impressive range of natural language processing tasks — without needing to be fine-tuned for each specific job.

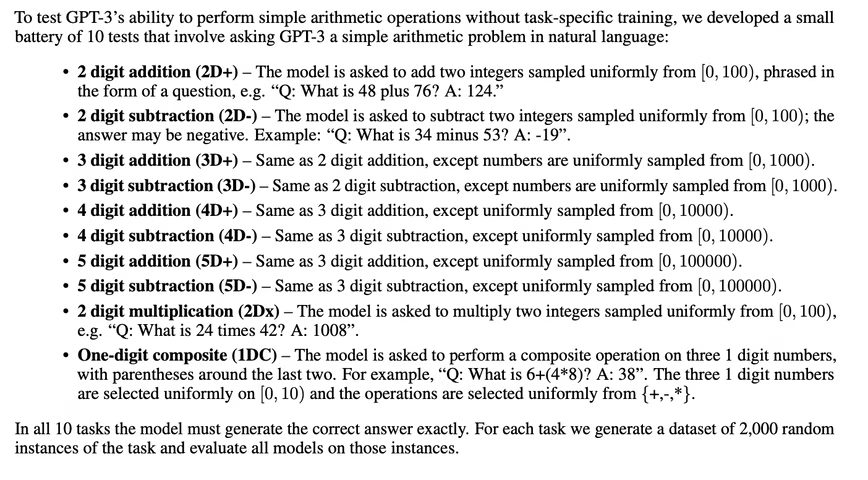
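For readers wondering what "without fine-tuning" looks like in practice, here is a minimal sketch of a few-shot prompt: the task is described in plain text, a handful of worked examples follow, and the model is left to complete the final line. The function name, the `Input:`/`Output:` labels, and the translation pairs are our own illustrative assumptions, not OpenAI's exact format, and the snippet only builds the text; it does not call any model.

```python
def build_few_shot_prompt(task_description, examples, query):
    """Assemble a plain-text prompt: task description, worked examples, then the new input."""
    lines = [task_description, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    # The final "Output:" is left blank for the language model to complete.
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical examples, chosen purely for illustration.
examples = [("sea otter", "loutre de mer"), ("cheese", "fromage")]
prompt = build_few_shot_prompt("Translate English to French.", examples, "peppermint")
print(prompt)
```

The point of the design is that switching tasks means swapping the description and examples in the prompt, rather than retraining the model's weights.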

It’s now capable of translation, question-answering, reading comprehension tasks, writing poetry — and even basic math:

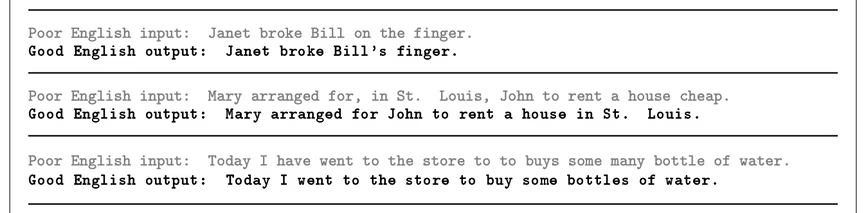

It’s also pretty good at correcting English grammar:

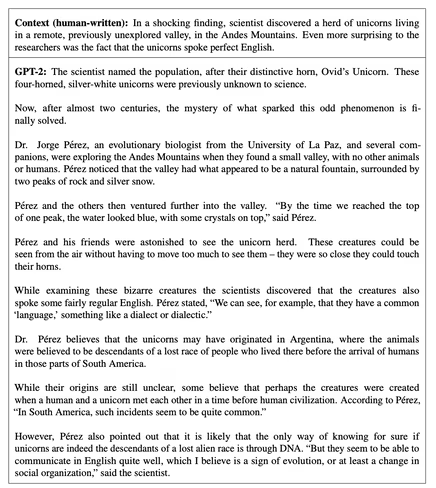

GPT-3 also seems to have improved upon the vaunted writing ability of its predecessor.

The research team tested its skills by asking evaluators to distinguish its works from those written by humans.

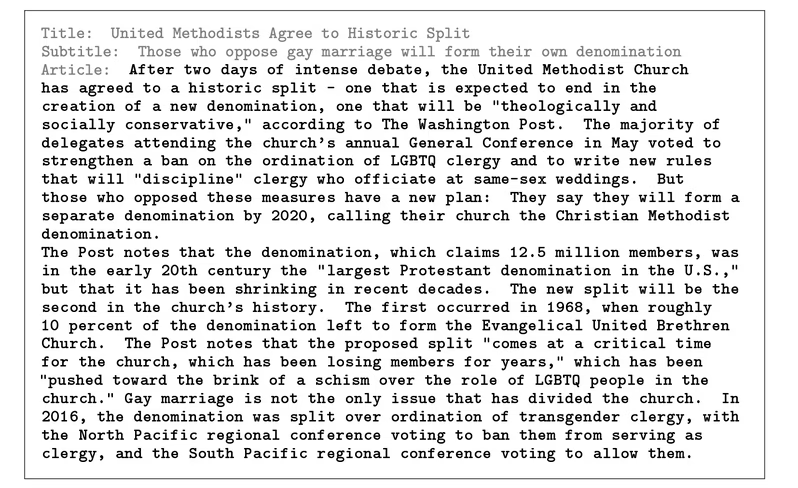

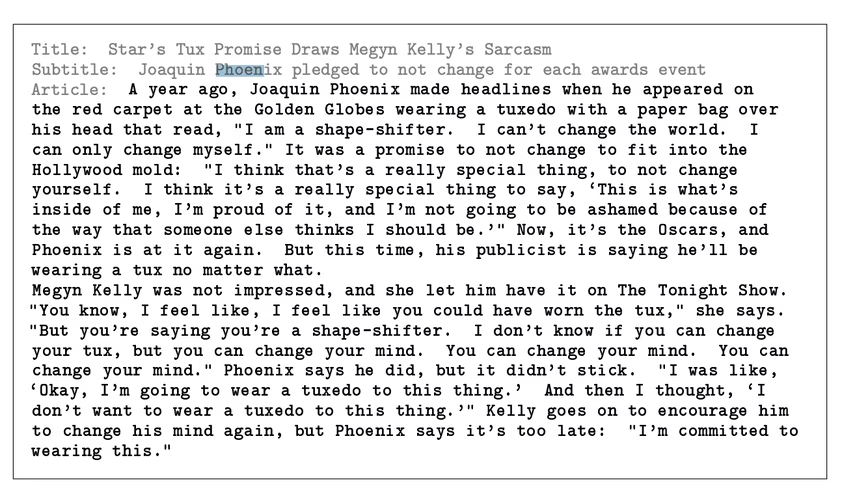

The one they found most convincing was a thorough report on a historic split of the United Methodist Church:

However, my favorite example of its writing was the one that humans found the easiest to recognize as made by a machine:

That report may not have convinced the reviewers, but it certainly showed some flair and a capacity for the surreal. By comparison, here’s an example of a GPT-2-penned article that OpenAI previously published:

GPT-3’s reporting skills led the researchers to issue another warning about its potential for misuse:

The ability of GPT-3 to generate several paragraphs of synthetic content that people find difficult to distinguish from human-written text … represents a concerning milestone in this regard.

However, the system is unlikely to take the jobs of two-bit hacks for now, thank God. Not because it lacks the skill — it’s just too damn expensive.

That’s because the system needs enormous computation power. As Elliot Turner, the CEO of AI communications firm Hyperia, explained in a tweet:

Reading the OpenAI GPT-3 paper. Impressive performance on many few-shot language tasks. The cost to train this 175 billion parameter language model appears to be staggering: Nearly $12 million dollars in compute based on public cloud GPU/TPU cost models (200x the price of GPT-2) pic.twitter.com/5ztr4cMm3L

— Elliot Turner (@eturner303) May 29, 2020

That should also reduce its potential to be used for evil, as presumably the only people who could afford it are, er, nation-states and multinational corporations.

For now, we’ll have to wait and see what happens when the model’s released to the public.
