TL;DR

Meta’s Q1 2026 earnings call focused almost entirely on AI spending ($125-$145B capex) while all but ignoring the company’s growing child safety crisis: a lost addiction trial with $6M damages, a $375M New Mexico penalty, 40+ state attorney general suits, youth bans in Indonesia, Australia, France, and Spain, an EU probe this week, and US Senate legislation targeting AI chatbots for minors. CFO Susan Li’s prepared remarks acknowledged the risk of “material loss,” but no investor asked Zuckerberg about children.

Mark Zuckerberg’s earnings call on Wednesday was about AI. It was about the $125 billion to $145 billion Meta plans to spend on capital expenditure in 2026. It was about Llama models and recommendation engines and the advertising systems that generate $56 billion in quarterly revenue. It was not about children. No investor asked Zuckerberg about the social media addiction trial that Meta lost in March, or the thousands of similar lawsuits waiting their turn, or the EU probe into underage users announced this week, or the Senate committee that backed legislation requiring AI companies to stop minors from using chatbots, or the Indonesian ban on under-16s that took Meta’s products offline for millions of young users. Zuckerberg told employees the 8,000 layoffs this month are about reallocating capital from people to infrastructure. He did not tell investors what the company plans to do about the growing legal, regulatory, and reputational cost of the products it already has.

The verdict

On 25 March, a Los Angeles County Superior Court jury found Meta and Google liable for designing addictive platforms that harmed a young user, awarding $6 million in damages with Meta found 70 per cent responsible. It was the first social media addiction case to reach a verdict. In a separate trial in New Mexico, a jury determined that Meta had violated the state’s Unfair Practices Act by concealing what it knew about child sexual exploitation and the effects of its platforms on children’s mental health, resulting in a $375 million penalty. Massachusetts’ highest court ruled in April that Meta must face a state lawsuit alleging it deliberately designed features to addict young users. More than 40 state attorneys general have filed child safety suits against Meta. Bellwether trials are scheduled throughout 2026. The company’s own chief financial officer, Susan Li, said in prepared remarks that Meta “continues to see scrutiny on youth-related issues” and that ongoing trials “may ultimately result in a material loss.”

The word “material” is doing considerable work in that sentence. The tobacco industry’s master settlement in 1998 cost $206 billion over 25 years. Meta’s quarterly revenue is $56 billion, so a settlement of that nominal size would equal roughly 3.7 quarters of the company’s current revenue. A tobacco-scale settlement, scaled to Meta’s current financial size, would be the largest corporate liability in history. The cases moving through courts now are testing precisely the legal theory that produced the tobacco settlement: that the company knew its product was harmful, concealed the evidence, and continued to distribute the product to minors. Regulators in multiple jurisdictions are simultaneously investigating platforms for child safety failures under new online safety legislation, expanding the legal surface area beyond the American courts.

The bans

While the lawsuits address past harm, governments are moving to prevent future exposure. Indonesia became the first Southeast Asian country to ban social media for users under 16, prohibiting Google’s YouTube, ByteDance’s TikTok, and Meta’s Instagram, Facebook, and Threads from hosting minors. Australia enacted a similar ban in December 2025. France approved an under-15 ban in January. Spain announced its own under-16 prohibition. The European Commission escalated a probe this week into Meta’s failure to prevent underage users from accessing its platforms, a case that could result in fines of up to 6 per cent of global annual revenue. A US Senate committee backed legislation requiring Meta and other AI companies to prevent minors from using chatbots, extending the regulatory scope from social media feeds to AI-powered conversational products.

The pattern is global and accelerating. Each ban and each probe creates compliance costs, reduces Meta’s addressable market for young users, and adds to the regulatory scrutiny that Li acknowledged on the earnings call. The bans also create a natural experiment: if social media usage among minors declines in countries with prohibitions, and if measurable improvements in youth mental health follow, the evidence base for the addiction lawsuits in the United States strengthens.

The spending

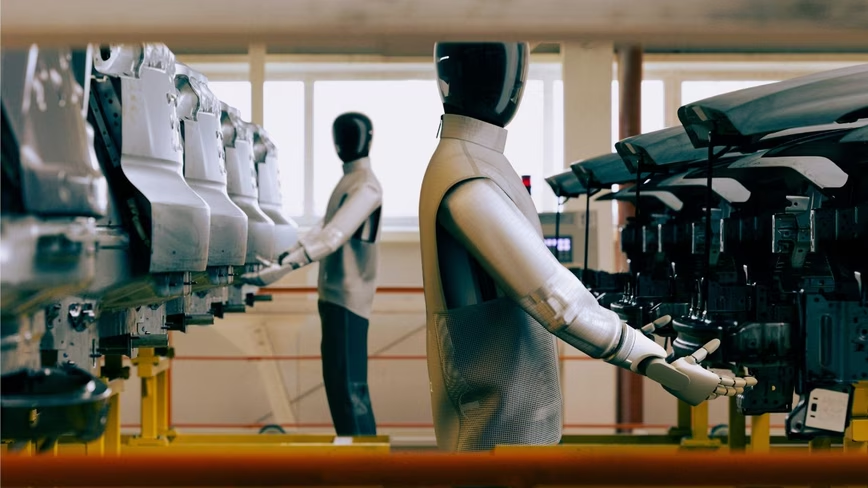

Meta has been cutting hundreds of jobs across Reality Labs, recruiting, and sales as it doubles AI spending. The $125 billion to $145 billion in 2026 capex is roughly double what the company spent last year, and nearly all of it goes to data centres, GPUs, custom silicon, and the infrastructure supporting Llama models and Meta’s Superintelligence Labs. The Broadcom chip deal, extended to 2029, commits Meta to a custom silicon programme that will cost additional billions. Wall Street was not persuaded. Meta’s stock fell the most in six months following the earnings call, with Bank of America analysts describing the company as “still a ‘show-me’ story on AI returns.”

The investment thesis is that AI will improve Meta’s recommendation models (keeping users engaged longer), improve its advertising models (selling better-targeted ads), and eventually generate new revenue streams from AI products. The problem is that the child safety lawsuits allege that Meta’s existing recommendation models are already too effective at keeping users engaged, and that their effectiveness in retaining young users is precisely what causes the harm. The algorithmic systems that Meta is spending up to $145 billion to improve are, in the plaintiffs’ legal theory, the same systems that cause the addiction. Zuckerberg’s AI vision and Meta’s legal liability are not separate problems. They are the same problem, viewed from different angles.

The silence

Snap will report earnings on 6 May. When CEO Evan Spiegel was asked about teen social media bans on the company’s previous earnings call, he dismissed them as having “little effect on the company’s bottom line” and moved on. Zuckerberg did not need to deploy a similar deflection this week. No one asked the question. The analyst community that covers Meta appears to have accepted, at least for now, that AI spending is the story and child safety is a footnote. This may be correct in the short term. The addiction lawsuits have not yet produced a verdict large enough to affect Meta’s financial statements. The international bans have not yet reduced revenue. The EU probe has not yet resulted in a fine.

But Li’s use of the word “material” in her prepared remarks suggests that Meta’s own legal team believes the risk is real. Prepared remarks are not extemporaneous. They are reviewed by lawyers. “May ultimately result in a material loss” is the language a company uses when its attorneys have told the board that the probability of significant liability is high enough to require disclosure. The question that no investor asked Zuckerberg, and that Zuckerberg chose not to address, is whether an AI programme costing up to $145 billion will generate returns faster than the child safety lawsuits generate costs. The AI programme is the future Meta is building. The lawsuits are about the present Meta already built. The present has a way of arriving before the future does.