One of the most controversial political theorists of the 20th century was Francis Fukuyama, who postulated that history had ended. What did he mean by that? His argument, summed up somewhat inelegantly, was that humanity had perfected the rules that would guide society from that point forwards. Only liberal democracy, with a free market economic system, can secure human prosperity, happiness, and evolution.

Obviously, that theory didn’t hold water for long. Fukuyama couldn’t have imagined the wave of populism that would sweep Europe in the 2010s. Another problem, and you can’t really fault him for this, is that he didn’t foresee the transformative power of big data, algorithms, and artificial intelligence.

If Francis Fukuyama was concerned about the end of history, author and researcher James Bridle is terrified about the end of the future. Specifically, he fears that, as the world inches towards peak-industrialization, with computers and software playing a more prominent role in decision making, we lose our ability to take control of our own lives.

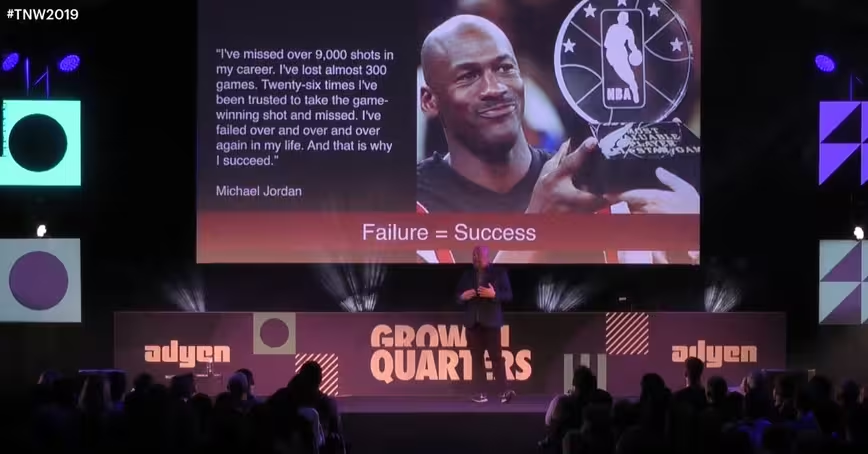

“The world is becoming less knowable by attempts to know it by computational means,” Bridle said on stage at TNW 2019.

“The idea that we can understand the world by the gathering of data is starting to fail. The worst part is that we’re failing to notice. We see that in things that we tried to predict in the first place.”

And what’s interesting is that, according to Bridle, this trend is evident in both big-picture things, like predicting the weather, and everyday realities, like how YouTube suggests content.

Bridle gave the example of Lewis Fry Richardson, a Quaker academic and stretcher-bearer during World War I, who essentially wrote the rules for meteorological prediction whilst he carried bodies during the frenetic violence of the Western Front. These rules, when combined with the introduction of electronic computational power in the 1950s (and I use the term “electronic” because prior to that point, computers were people), allowed scientists to predict the weather with a reasonable degree of accuracy.

Weather is important. The foreknowledge given by meteorologists lets us know if we need to take an umbrella out, or whether it’s the time to plant crops. In essence, it’s fundamental to our survival as a species.

What happened? Climate change. Now, the window that allows us to predict the weather has narrowed and narrowed, to the point where we can only see a few days into the future. In the trenches, Richardson created an algorithm that continues to have a manifest influence in today’s world. Essentially, he allowed us to predict the future. The implications of its failure are, frankly, terrifying. Ignorance really isn’t bliss.

And then there’s YouTube. Bridle achieved a wider notoriety in 2017 when he wrote an essay called “Something is wrong on the Internet.” This discussed how YouTube is being flooded with algorithmically-created, mass-produced, low-quality video content that’s precisely aimed at children – and let me be clear, we’re talking about primary school kids – and is often inappropriate and terrifying. His essay provoked a conversation about how YouTube protects its younger users that continues to echo.

Part of the problem is that the machines aren’t just making the content; they’re recommending it too. YouTube’s algorithm, which has a bias towards the sensational and the wild, recommends videos that are often misleading or harmful. Bridle gave the example of how, with just twelve clicks, YouTube’s algorithm went from recommending innocuous children’s videos to endorsing horrifying Mickey Mouse porn.

And then there’s the harm done to our species, as YouTube promotes climate denialism propaganda against videos of actual scientists. YouTube is often described as a tool for radicalization, introducing fresh faces to anti-science and politically extreme content, simultaneously elevating crackpots and demagogues to celebrity. We’re seeing the consequences of this play out in our political and social sphere, and nobody’s quite sure how to fix it.

And then there’s the fact that, in some workplaces, algorithms have replaced human intuition and thought entirely. Vast swathes of human labor have been replaced by machines that think and decide.

Bridle cited the example of Amazon warehouses, which are heavily data-driven. Items are stored on shelves not by any human system, but rather by an algorithmically determined one. The company is also rumored to be working on wearable trackers that, if used, would provide workers with instructions about how to conduct their day-to-day business. A bit like a floor manager, it’d tell them where to go and what to do, each step guided. Each movement precisely selected.

Automation is often described as a threat to low-level, unskilled jobs. But here, we can see that black-box logic could one day surpass and replace managerial employees, creating a world where the boss doesn’t sit in a corner office and drive a Lexus, but is rather a few lines of code in a data center.

One of my favorite quotes comes from a video game, Bioshock. In a final, bloody confrontation with the player, the game’s primary antagonist, Andrew Ryan, says: “A man chooses, a slave obeys.” And as we cede our own natural, god-given sovereignty to machines, we risk sleepwalking into a state of unbreakable bondage. We risk becoming slaves.
