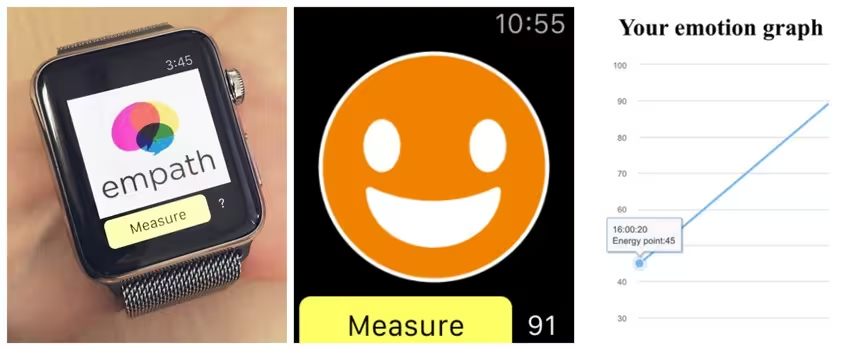

Have you ever wondered whether your Apple Watch really cares about your feelings? Well, it doesn’t, but this app will help it work out how you’re feeling from the way you talk.

EmoWatch, from Tokyo-based Smartmedical, is an app for the Apple Watch that identifies and tracks users’ emotions through their voice. Vocal emotion recognition isn’t new, but the company claims this is the first time it has been done through the Apple Watch.

The technology it uses is called Empath, and it works by analyzing properties such as the pitch, volume and speed of a person’s voice. From that information, the app judges the person’s energy level and determines which of four emotions they are feeling: anger, calmness, joy or sorrow.
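To make the idea concrete, here is a toy Python sketch of classifying a voice sample from its acoustic properties alone. It is not Empath’s algorithm, which the company has not detailed here: the function names, the cutoff values and the mapping from loudness and pitch to the four emotions are all hypothetical, and speaking speed is left out for brevity.

```python
import math


def sine_wave(freq_hz, amplitude, sample_rate=8000, seconds=1.0):
    """Generate a pure test tone as a list of floats (a stand-in for real speech)."""
    count = int(sample_rate * seconds)
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(count)]


def rms_volume(samples):
    """Loudness as the root-mean-square amplitude of the samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def estimate_pitch_hz(samples, sample_rate=8000):
    """Rough pitch estimate: a periodic signal crosses zero twice per cycle."""
    crossings = sum(1 for a, b in zip(samples, samples[1:])
                    if (a < 0) != (b < 0))
    duration = len(samples) / sample_rate
    return crossings / (2 * duration)


def toy_emotion(samples, sample_rate=8000,
                volume_cutoff=0.3, pitch_cutoff_hz=200.0):
    """Map loudness and pitch to one of four labels.

    The cutoffs and the mapping are invented for illustration only;
    they are NOT taken from Empath or EmoWatch.
    """
    loud = rms_volume(samples) > volume_cutoff
    high = estimate_pitch_hz(samples, sample_rate) > pitch_cutoff_hz
    if loud:
        return "joy" if high else "anger"
    return "calmness" if high else "sorrow"


if __name__ == "__main__":
    # A loud, higher-pitched tone versus a quiet, lower-pitched one.
    print(toy_emotion(sine_wave(220, 0.8)))
    print(toy_emotion(sine_wave(120, 0.1)))
```

Nothing in the sketch looks at words, only at the shape of the sound wave. A real system would work on recorded speech rather than test tones and would almost certainly rely on a trained model instead of two fixed cutoffs.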

The app tracks and charts its users’ moods and mental states over time, so you can spot patterns in how you feel.

EmoWatch will work with any language because it detects only how the user speaks, not the meaning of what they say.

While it’s simplistic right now, the app could be useful for anyone who wants an easy way to track their moods.

The company has also made its Empath API openly available to developers interested in testing out vocal emotion recognition.

Via TechCrunch
