Bots are a particularly pernicious presence on Twitter. While they can be used for good (R.I.P., original @horse_ebooks), bots often power spam, scams and, most interestingly, very volatile clusters of political and cultural discussion on Twitter. Last year, DARPA confronted the problem and, according to MIT Tech Review, a new report shows just how researchers were able to identify and shut down the malicious programs.

The report, released last month by researchers at the University of Maryland and Carnegie Mellon, among others, shows the methods used by the teams in a four-week challenge designed to root out those dastardly “influence” bots.

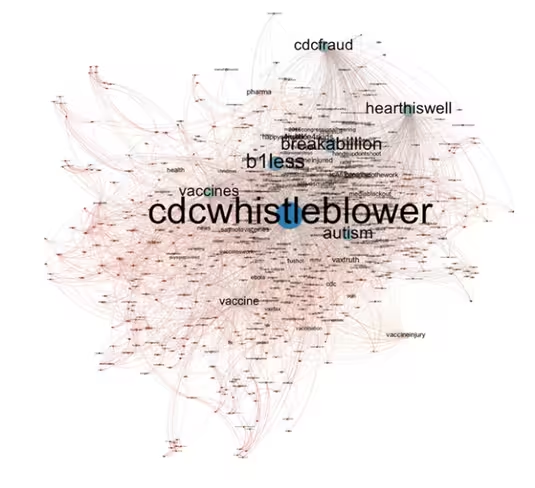

Bots make up about 8.5 percent of Twitter’s user base, but they can congregate when groups of people discuss controversial topics. The challenge asked participants to figure out which Twitter users taking part in a discussion about vaccines were bots. If they managed to find one, they would gain points, but if they incorrectly flagged a real user in the discussion as a bot, they would lose them.

DARPA seeded 39 bots in a synthetic discussion involving more than 7,000 accounts, but the researchers did not know exactly how many bots they were searching for. Teams were rewarded for speed as well as accuracy: DARPA awarded extra points to teams that guessed all the bots before the end of the challenge.
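To make the incentives concrete, here is a minimal sketch of a scoring rule with that shape: points for each bot found, a deduction for each wrong guess, and a bonus for finishing early. The function name and the specific weights (`reward`, `penalty`, `early_bonus_per_day`) are assumptions for illustration only; the article does not give the exact values DARPA used.

```python
def challenge_score(guesses, true_bots, days_early=0,
                    reward=1.0, penalty=0.25, early_bonus_per_day=1.0):
    """Toy score: gain for hits, lose for misses, bonus for finishing early.

    All weights are hypothetical placeholders, not DARPA's published values.
    """
    guesses = set(guesses)
    hits = len(guesses & true_bots)      # bots correctly identified
    misses = len(guesses - true_bots)    # real users wrongly flagged
    found_all = true_bots <= guesses     # bonus applies only if every bot was found
    bonus = early_bonus_per_day * days_early if found_all else 0.0
    return reward * hits - penalty * misses + bonus


# Illustrative run: 39 seeded bots, 40 guesses (one wrong), done 12 days early.
true_bots = {f"bot{i}" for i in range(39)}
guesses = true_bots | {"human0"}
score = challenge_score(guesses, true_bots, days_early=12)
precision = len(guesses & true_bots) / len(guesses)
print(score, precision)
```

Under a rule like this, guessing widely is costly, which is why a team would want high confidence before submitting each account.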

The winning team, Sentimetrix, managed to find all 39 bots with a total of 40 guesses by Day 16 of 28. The company relied on the work of its SentiBot, which had been trained on tweets from the Indian election in 2014, to create a profile for zeroing in on bots. It then analyzed tweet syntax and semantics as well as characteristics of a user’s profile, messages and network.

With that profile in hand, the team was able to root out bots by looking for persistent signs that a user wasn’t real. For example, users who went without profile pictures for long periods of time were identified as bots. Once a handful of bots had been recognized, researchers looked more closely at shared hashtags and connections to infer which other users weren’t real.
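The two-step idea described above (flag obvious suspects first, then widen the net through shared hashtags) can be sketched roughly as follows. This is a toy illustration, not Sentimetrix's actual method: the `Account` fields, the 90-day threshold and the 50 percent overlap cutoff are all hypothetical, and the report describes analysts making these judgments rather than a fixed rule.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    handle: str
    days_without_avatar: int            # hypothetical feature
    hashtags: set = field(default_factory=set)


def seed_bots(accounts, min_days=90):
    """Step 1: flag accounts that have lacked a profile picture for a long time."""
    return {a.handle for a in accounts if a.days_without_avatar >= min_days}


def expand_by_hashtags(accounts, seeds, min_overlap=0.5):
    """Step 2: flag accounts whose hashtags mostly match those used by seed bots."""
    bot_tags = set()
    for a in accounts:
        if a.handle in seeds:
            bot_tags |= a.hashtags
    flagged = set(seeds)
    for a in accounts:
        if a.handle in flagged or not a.hashtags:
            continue
        overlap = len(a.hashtags & bot_tags) / len(a.hashtags)
        if overlap >= min_overlap:
            flagged.add(a.handle)
    return flagged


accounts = [
    Account("a", 200, {"#vaxfacts", "#truth"}),
    Account("b", 0, {"#vaxfacts", "#truth", "#news"}),
    Account("c", 0, {"#cats", "#news"}),
    Account("d", 120),
]
seeds = seed_bots(accounts)
flagged = expand_by_hashtags(accounts, seeds)
print(sorted(seeds), sorted(flagged))
```

A real system would combine many more signals, such as the syntax, semantics and network features mentioned earlier, and would treat each one as evidence rather than proof.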

All in all, the report showed how important analytics-based bot-seeking could be to platforms of the future. As hackers become more sophisticated in their tactics, more thought and effort will need to be put in to create a platform with fewer bad actors.

➤ How DARPA Took On the Twitter Bot Menace with One Hand Behind Its Back [MIT Technology Review]
