We’ve been hearing for a long time about how 3D and “holograms” will become an integral part of our lives.

It infuses our pop culture, and from Panasonic’s first commercialized 3D TV system in 2010 to our present-day fascination with VR/AR, it has become more and more of a focus for both our imaginations and reality. The real world is not flat after all, so why constrain ourselves to experience the digital world on a flat screen?

The shift from 2D to 3D is as natural as adding color to movies and television was in the 1950s. In many ways, it is even more impactful.

It’s not only about giving a more faithful or vibrant representation of the real world. It’s about bringing the digital world to life, creating a perfect illusion that is so real and reactive that it trumps our senses and becomes every bit as real as the physical world itself.

This coming watershed moment will impact everything from the way we work, learn and communicate to the way we relax and play. In many ways, we’ll be able to transcend the physical limitations of our world and our imagination will be the new frontier.

Of course this shift will also impact the devices we use and how we interact with machines around us – “Siri, give me a hug!”. This is why companies like Google, Facebook, and Apple are capturing the 3D market as fast as possible: the race to win 3D is about control over the next paradigm of user interaction.

But all this is still very much a dream right now. In spite of early attempts to jumpstart the 3D market, we still rely on interactive flat screens to wander the digital world. Why is that? Well, there are various shades of 3D, and what’s been available so far has not done a convincing job of creating that perfect illusion. So the consumer is still waiting.

My hope here is to give you a comprehensive overview of 3D from a user’s perspective and of the current challenges for 3D products, including VR and AR headsets and naked-eye 3D displays – and how they will soon become an intuitive, interactive interface to the digital world.

Why do we see in three dimensions?

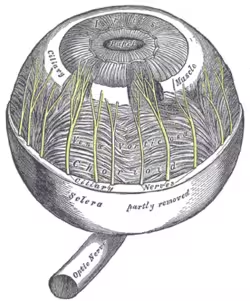

Before diving into the technology of 3D, it’s first necessary to understand the biology at play.

Try threading a needle or tying a knot with one eye closed. Harder than you thought, right? Evolution has refined our perception of 3D so that we can get better information faster about the real world.

How we get to experience the sensation of depth at a physiological level is a complex subject. There are many depth cues available to the human eyes and the human brain, which, when they arise from the real world, reinforce each other to create an unambiguous mental picture of the 3D landscape before us.

When we try to trick the visual system and the human brain into a 3D illusion, all these depth cues might not be faithfully re-created and the 3D experience is affected. Therefore it is important to understand the major ones.

Stereopsis (binocular disparity)

Believe it or not, your two eyes don’t get the same perception of the world. They see it from slightly different angles, resulting in different images on the retina.

If an object is far away, the difference, or “disparity,” between the right eye and left eye images will be small. If the object is close, the disparity will be large. This allows the brain to triangulate and “feel” the distance, triggering the sensation of depth.
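To put rough numbers on that triangulation, here is a minimal sketch. It assumes an eye separation of 63 mm (a typical adult value, not a figure from this article) and an object straight ahead; the function names are purely illustrative.

```python
import math

def vergence_angle_deg(distance_m, eye_separation_m=0.063):
    """Angle between the two lines of sight when both eyes fixate a
    point straight ahead at the given distance.
    The 63 mm default eye separation is an assumed typical value."""
    return math.degrees(2 * math.atan((eye_separation_m / 2) / distance_m))

def relative_disparity_deg(near_m, far_m, eye_separation_m=0.063):
    """Difference in vergence angle between a near and a far point,
    one simple way to quantify the disparity the brain works with."""
    return (vergence_angle_deg(near_m, eye_separation_m)
            - vergence_angle_deg(far_m, eye_separation_m))

# The angle shrinks quickly with distance, which is why disparity
# carries less and less depth information for faraway objects.
for d in (0.3, 1.0, 10.0, 100.0):
    print(f"{d:6.1f} m -> {vergence_angle_deg(d):.3f} degrees")
```

Under these assumptions the angle drops from roughly a dozen degrees at reading distance to a small fraction of a degree at ten meters.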

Most modern 3D technologies rely on stereopsis to trick the brain into thinking it is perceiving depth. They present two different images separately to each eye. If you’ve ever been to a 3D movie, say Avatar or Star Wars, then you’ve probably experienced this effect through 3D glasses.

A more advanced “autostereoscopic” display is able to project different images in different directions of space so that a different image reaches each of the viewer’s eyes, with no glasses needed.

Stereopsis is the strongest depth cue, but it’s not the only one. As a matter of fact it is possible to experience depth with only one eye!

Motion parallax

Close one eye and hold your index finger still in front of you. Now move your head slightly left and right, up and down. The background seems to move relative to your finger. More precisely, your finger seems to move faster across your field of view than does the background.

This effect is called motion parallax and lets you experience depth even with one eye. It is critical in providing a realistic 3D impression when the viewer moves ever so slightly about the display.
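The finger experiment can be sketched with a little trigonometry. The distances below (finger at 0.4 m, background at 5 m, head shift of 5 cm) are made-up illustrative values, and the model assumes the object starts straight ahead of the eye.

```python
import math

def apparent_shift_deg(head_shift_m, distance_m):
    """Angle by which an object initially straight ahead appears to
    move across the field of view when the head translates sideways."""
    return math.degrees(math.atan2(head_shift_m, distance_m))

head_shift = 0.05   # 5 cm sideways head motion (assumed)
finger = apparent_shift_deg(head_shift, 0.4)      # finger at 40 cm (assumed)
background = apparent_shift_deg(head_shift, 5.0)  # wall at 5 m (assumed)

print(f"finger shifts by     {finger:.2f} degrees")
print(f"background shifts by {background:.2f} degrees")
```

The nearby finger sweeps through a much larger angle than the background for the same head motion, and that difference is the depth cue.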

Stereopsis without motion parallax still gives you a sense of depth, but 3D shapes are distorted. Buildings on 3D maps, for example, start to look crooked. Background objects seem to stubbornly remain hidden behind foreground objects. It’s rather annoying if you pay attention to it.

Motion parallax is tightly related to perspective — the fact that distant objects appear smaller than closer objects, which is a depth cue in itself.

Accommodation

Now even if you were sitting perfectly still (no motion parallax), with one eye closed (no stereopsis), you would still have a way to tell distant objects from close ones.

Repeat the finger experiment: hold your index finger still in front of you and make the mental effort to look at it. As the finger comes into focus, you will notice that the background becomes blurry. Now take your mind off the finger and “focus” on the background. Your finger becomes blurry as the background becomes clear. What happens is that, similar to a modern camera, the eye is capable of changing its focus.

It does so by contracting the ciliary muscle, which changes the shape of the biological lens at the front of the eye.

How does the ciliary muscle know how much force must be applied? Well, there is a feedback loop going on with the brain. The muscle keeps contracting and relaxing until the brain perceives the target object as maximally crisp. This takes a fraction of a second, and it becomes a strenuous exercise if repeated too often.

The ciliary muscle action is triggered only for objects located at less than two meters. Beyond that distance the eye more or less relaxes and focuses at infinity.

Convergence and accommodation conflict

When your eyes focus on a point nearby, they actually rotate in their orbits. This convergence stretches the extraocular muscles, a physical effect you can feel and actually recognize as a depth cue. You usually can “feel” your eyes converge if they focus on an object at less than 10 meters.

So when a person looks at the world with two eyes, two different sets of muscles are at play. One converges the eyes to the point of interest while the other changes the focusing power of the eye to form a crisp image on the retina. If the eyes mis-converge, the viewer will see double images; if they mis-accommodate, the viewer sees blurry images.

In the real world, convergence and accommodation always work as a pair and reinforce each other. The nervous stimuli triggering both responses are actually linked.

In a 3D/VR/AR context though, it is often the case that images are seen in focus at a certain distance from the viewer (e.g. the movie screen in a 3D theater) while the binocular signal makes the eyes converge to another distance (e.g. the dragon coming out of the screen).

This creates a conflict for the brain which tries to reconcile two contradictory signals. This is why some viewers can experience eye fatigue or even nausea during 3D films.
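One common way to express the size of this mismatch is in diopters, the inverse of distance in meters. The sketch below simply subtracts the two demands; the 10 m screen and 0.5 m dragon are illustrative assumptions, and the code makes no claim about what level of mismatch is comfortable.

```python
def diopters(distance_m):
    """Optical demand of a point at the given distance (1 / meters)."""
    return 1.0 / distance_m

def conflict_diopters(focus_distance_m, vergence_distance_m):
    """Mismatch between where the eyes must focus (the screen) and
    where they are asked to converge (the virtual object)."""
    return abs(diopters(focus_distance_m) - diopters(vergence_distance_m))

# Assumed theater geometry: screen 10 m away, dragon pushed out to 0.5 m.
print(conflict_diopters(10.0, 0.5))
# Same scene with the dragon kept near the screen plane.
print(conflict_diopters(10.0, 8.0))
```

The closer the virtual object is pulled toward the viewer, the faster the mismatch grows, which fits the intuition that “pop-out” effects are the tiring ones.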

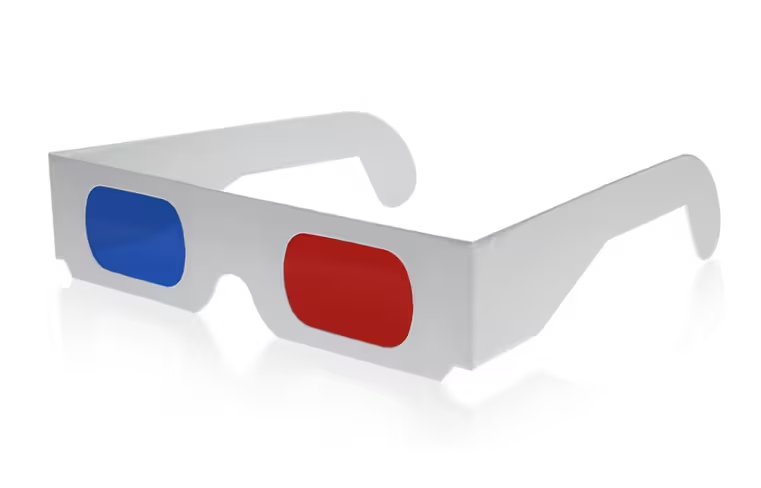

Seeing 3D through specialized eyewear

Some of us remember our first pair of red and blue 3D glasses made out of cardboard. These so-called “anaglyph glasses” let only red images reach the left eye and cyan images reach the right eye. A typical anaglyph (3D image) overlaps a red image corresponding to the worldview from the left eye with a cyan image corresponding to the worldview from the right eye.

When seen through the filtered glasses, each of the two images reaches the eye that it is intended for and the visual cortex of the brain fuses them into a perception of a three-dimensional scene. And yes, the colors are properly recombined, too.
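As a rough illustration of how such an image is assembled, the sketch below builds an anaglyph from two tiny RGB images stored as nested lists of `(r, g, b)` tuples. The representation and function names are assumptions made for this example, and the filters are treated as ideal.

```python
def make_anaglyph(left, right):
    """Take the red channel from the left-eye image and the green and
    blue (cyan) channels from the right-eye image."""
    if len(left) != len(right) or any(
        len(lrow) != len(rrow) for lrow, rrow in zip(left, right)
    ):
        raise ValueError("left and right images must have the same size")
    return [
        [(lp[0], rp[1], rp[2]) for lp, rp in zip(lrow, rrow)]
        for lrow, rrow in zip(left, right)
    ]

def seen_through(image, eye):
    """Simulate an ideal filter: red for the left eye, cyan for the right."""
    if eye == "left":
        return [[(r, 0, 0) for r, _, _ in row] for row in image]
    if eye == "right":
        return [[(0, g, b) for _, g, b in row] for row in image]
    raise ValueError("eye must be 'left' or 'right'")

left_image = [[(200, 10, 20), (180, 30, 40)]]
right_image = [[(50, 60, 70), (90, 100, 110)]]
anaglyph = make_anaglyph(left_image, right_image)
print(seen_through(anaglyph, "left"))
print(seen_through(anaglyph, "right"))
```

Each eye ends up with only the channels that came from its own view, which is the separation the glasses rely on.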

All modern approaches to glasses-based 3D, including VR/AR headsets, function on this same principle. They physically separate the right eye and left eye images to create stereopsis.

Polarized 3D glasses (movie theater)

If you go to your local movie theater today, you’ll be handed a pair of 3D glasses that look nothing like the anaglyph version. Instead of filtering images based on their color, they filter light based on a different property, called polarization.

Think of photons as vibrating entities. They can vibrate in the horizontal direction or in the vertical direction.

On the movie screen, a special projector creates two superposed images, one sending only horizontal photons to the viewers, the other sending only vertical photons. The polarization filters on the glasses ensure that each image reaches the corresponding eye.

If you own a pair of polarized glasses (don’t steal from the theater!) you can see polarization in action in the real world. Hold your glasses about two feet in front of your face and look at a windshield or a body of water. When you rotate the glasses 90 degrees, you should see the glare through the glasses either appear or disappear, depending on your orientation. This is polarization doing its job of filtering light at the correct orientation.
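The appear-and-disappear effect follows Malus’s law: an ideal linear polarizer passes a fraction cos² of the angle between its axis and the light’s polarization. The sketch below assumes perfectly polarized light and ideal filters, which real glare and real glasses only approximate.

```python
import math

def transmitted_fraction(angle_deg):
    """Malus's law for an ideal linear polarizer: fraction of linearly
    polarized light that passes when the filter axis is rotated by
    angle_deg away from the light's polarization direction."""
    return math.cos(math.radians(angle_deg)) ** 2

for angle in (0, 30, 45, 60, 90):
    print(f"{angle:3d} degrees -> {transmitted_fraction(angle):.3f}")
```

In the simplified horizontal/vertical picture used here, the same formula suggests why tilting your head matters: the image meant for the other eye is polarized at 90 degrees to your filter, so a tilt by some angle lets through sin² of that angle of the wrong image.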

Polarized 3D glasses allow the full color spectrum of light to enter both eyes and the 3D image quality improves dramatically as a result.

In terms of 3D quality, these glasses only offer stereopsis as a depth cue. So although you see the image in depth, if you were to leave your seat and move about the theater, you would not be able to see around objects. The background would seem to “move” with you, in the direction opposite to what a correct rendering of motion parallax would dictate.

More problematic is the lack of support for accommodation, which results in the vergence-accommodation conflict. If you were to stare at a dragon coming out of the screen to within a few feet of your face, you would quickly experience extreme visual discomfort.

This is why dragons always fly by really fast – just enough to scare you and be gone before they strain your eyesight.

Active Shutter 3D glasses (TV)

If you have a 3D TV at home, chances are its glasses are not the polarized type. Rather, they use an “active shutter” technology. Right and left eye images are flashed one after the other on your TV set, and the glasses are synchronized to block the view of the other eye for a short period of time. If the sequence is performed fast enough, the brain mixes the two signals and a coherent 3D picture emerges.
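The alternation can be sketched as a simple schedule. The 120 Hz refresh rate below is only an illustrative assumption, and the strict left/right alternation ignores the blanking intervals a real system would need.

```python
def shutter_schedule(refresh_hz, n_frames):
    """Return (start_time_ms, open_eye) for each displayed frame,
    alternating left and right. While one eye's shutter is open,
    the other eye is blocked."""
    frame_time_ms = 1000.0 / refresh_hz
    schedule = []
    for i in range(n_frames):
        eye = "left" if i % 2 == 0 else "right"
        schedule.append((round(i * frame_time_ms, 3), eye))
    return schedule

def per_eye_rate_hz(refresh_hz):
    """Each eye only sees every other frame."""
    return refresh_hz / 2

for start_ms, eye in shutter_schedule(120, 4):
    print(f"t = {start_ms:7.3f} ms: {eye} shutter open")
print("frames per second reaching each eye:", per_eye_rate_hz(120))
```

The halving of the per-eye frame rate is why the display has to run fast for the brain to fuse the two streams.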

Why not use polarized glasses? Although it is sometimes done, the images corresponding to different polarizations have to come from different pixels, so the image resolution is degraded. (By contrast, in the movie theater the two images are projected onto the screen and can coexist, so no loss of resolution occurs.)

In a typical active shutter 3D system, the only depth cue rendered is stereopsis. Some more advanced systems use tracking to adjust the rendered content, taking into account the viewer’s head position so they can support motion parallax, albeit for only a single viewer.

Virtual Reality (VR) headsets

VR is a completely new category for 3D rendering, and a technology that has gotten a lot of recent attention. By now, many people have been able to experience VR headsets, and many more will have the opportunity in the next couple of years. So how do they work?

Instead of filtering content that is coming from an external screen, a VR headset produces its own set of binocular images and sends them directly to the correct eye. Headsets usually contain micro-displays (one for left eye, one for right eye) that are magnified and re-imaged at some distance from the viewer by some optical contraption.

If the resolution of the display is high enough, the magnification can be large, which results in a bigger “field of view” for the user and a very immersive experience.
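The trade-off between magnification and sharpness can be made concrete with a back-of-the-envelope pixel density. The pixel counts and field of view below are hypothetical, and the calculation ignores lens distortion by spreading pixels evenly over the angle.

```python
def pixels_per_degree(horizontal_pixels, field_of_view_deg):
    """Average angular pixel density seen by one eye, assuming the
    pixels are spread evenly across the field of view."""
    if field_of_view_deg <= 0:
        raise ValueError("field of view must be positive")
    return horizontal_pixels / field_of_view_deg

# Hypothetical per-eye panels stretched over the same 90-degree view.
for pixels in (1080, 2160):
    print(pixels, "px over 90 degrees ->", pixels_per_degree(pixels, 90), "px/degree")

# The same 1080 px panel with less magnification (narrower view).
print("1080 px over 60 degrees ->", pixels_per_degree(1080, 60), "px/degree")
```

Stretching the same panel over a wider view lowers the density, so a bigger field of view only stays crisp if the display resolution grows with it.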

Modern VR systems like the Oculus Rift track the user position to add motion parallax to stereopsis.

In their current version, VR systems do not support accommodation and are prone to the accommodation-vergence conflict, a major hurdle to be solved to ensure a good consumer experience. Today they also fail to completely support eye motion (finite eye-box, distortions), although this problem should be solved using eye-tracking in future models.

Augmented Reality (AR) headsets

Similarly, AR headsets are becoming increasingly popular. These are simply the see-through version of VR. This means that they blend the digital world with the real world.

To be convincing, an AR system must not only keep track of the user’s head position in the real world, but also have a detailed and frequent account of its physical 3D environment.

This is no small feat, but enormous progress has been made in the field, if we believe the recent reports on HoloLens and Magic Leap.

Near-eye light field displays

In the same way that our two eyes see the world slightly differently, light rays entering the pupil at different locations encode slightly different pictures of the world in front of us. In order to trigger accommodation, a near-eye display must be able to independently render light rays that are coming from every direction through every point in space. Such an optical signal is called a light field, and it is the key to the VR/AR products of the future.

Until then, be ready for the headaches.

These challenges to headsets will need to be overcome, as well as just the social and cultural awkwardness of headsets (as we’ve seen with Google Glass). The other challenge is providing multiple views of the world at the same time, which brings us to eyewear-free 3D display systems.

Naked-eye 3D displays

So how do we get to experience 3D imagery without strapping a device to our face?

If you’ve read this far, you understand that to provide stereopsis, the 3D screen must project different views of its content, aimed at different directions of space. That way, the viewer’s right and left eye will naturally be presented with different images, triggering the depth sensation. These systems are thus called “autostereoscopic.”

Because the 3D image is tied to the screen, autostereoscopic displays naturally support convergence and accommodation as long as the 3D effect is not exaggerated.

This does not mean that they automatically provide visual comfort. In fact, a new problem arises in those systems related to the zones of transitions between different views.

It is common to experience image jumps (the so-called “cereal box” effect, referring to the cheap “holograms” that you can find on cereal boxes for kids), variations in brightness or even dark zones, intermittent loss of stereopsis, or worse, an inverted 3D sensation where the right eye and left eye content are switched.

So let’s examine different solutions and how they deal with these problems.

Prism film

3M started to commercialize this technology in 2009.

The film is inserted in the backlight film stack of a regular LCD display and illuminated from one of two ends. Upon illumination from the left, the LCD image is projected to the right viewing region of the display. Upon illumination from the right, the image is sent to the left.

Switching illumination at a fast pace and alternating right eye and left eye content on the LCD in synchronous manner results in stereopsis for a viewer, if they are located right in front of the display. For any other observation point though, the stereoscopic effect collapses and the image becomes flat.

Because the useful range of motion is so limited, this type of 2-view display is often called “2.5D”.

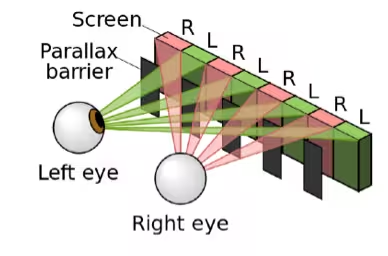

Parallax barrier

Take a 2D display and add a masking layer on top, drilled with small openings. When observed through the openings, not all the underlying display pixels can be observed. The subset of pixels that are observable actually depends on the viewpoint. In particular, the right and left eyes of the viewer can see different pixel sets.
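The geometry behind this is just a straight line from the eye through an opening to the pixel plane. The sketch below works in one dimension, and every number in it (viewing distance, barrier gap, eye separation) is an illustrative assumption rather than a real product specification.

```python
def visible_pixel_x(eye_x_m, slit_x_m, viewing_distance_m, gap_m):
    """Horizontal position on the pixel plane that an eye sees through
    one barrier opening.

    The pixel plane is at depth 0, the barrier sits gap_m in front of
    it, and the eye is viewing_distance_m from the pixel plane.
    The ray from the eye through the slit is extended to the pixels."""
    if viewing_distance_m <= gap_m:
        raise ValueError("the eye must be in front of the barrier")
    return slit_x_m + (slit_x_m - eye_x_m) * gap_m / (viewing_distance_m - gap_m)

half_ipd = 0.0315   # half of an assumed 63 mm eye separation
distance = 0.40     # assumed 40 cm viewing distance
gap = 0.001         # assumed 1 mm between pixels and barrier

left = visible_pixel_x(-half_ipd, 0.0, distance, gap)
right = visible_pixel_x(+half_ipd, 0.0, distance, gap)
print(f"left eye sees x  = {left * 1e6:+.1f} micrometers")
print(f"right eye sees x = {right * 1e6:+.1f} micrometers")
```

Through the same opening, the two eyes land on spots a fraction of a millimeter apart, which is enough to address them with different pixels.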

This “parallax barrier” concept was invented over a century ago, and first developed commercially a decade ago by Sharp.

The modern twist includes a switchable barrier, essentially another active screen layer that can create the barrier or turn transparent to revert back to 2D mode with the full resolution of the display.

In 2011, the HTC EVO 3D and the LG Optimus 3D made headlines for being the first two globally-available 3D enabled smartphones. They are another example of “2.5D” technologies providing depth in a very narrow view range only.

Technically, the parallax barrier concept could generalize to more views in an extended field of view. The problem is that the more views you are trying to define, the more light you have to block, and power efficiency becomes an issue, especially for use in mobile devices.
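A crude way to see the scaling: in an idealized barrier where each opening reveals one pixel column out of every N, only about 1/N of the light gets through and each view keeps 1/N of the columns. This is a simplifying assumption for illustration, not a measured figure, and the 1920-column panel is hypothetical.

```python
def barrier_transmission(n_views):
    """Idealized fraction of light passing a barrier that shows
    one pixel column out of every n_views through each opening."""
    if n_views < 1:
        raise ValueError("need at least one view")
    return 1.0 / n_views

def columns_per_view(total_columns, n_views):
    """Horizontal resolution left for each view (whole columns)."""
    if n_views < 1:
        raise ValueError("need at least one view")
    return total_columns // n_views

for n in (2, 4, 8):
    print(f"{n} views: {barrier_transmission(n):.3f} of the light, "
          f"{columns_per_view(1920, n)} columns per view")
```

To keep the same perceived brightness, the backlight would have to work roughly N times harder in this model, which is exactly the kind of cost a phone battery cannot absorb.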

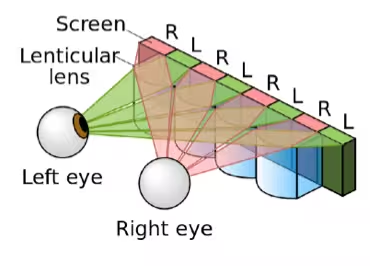

Lenticular lens

Take a 2D display and now overlay a sheet of micro-lenses on top. Lenses are known to be able to focus parallel light rays coming from a distant source into a small point, like how you would burn something with a magnifying glass as a kid.

Running the lens in reverse makes it possible to collect light from a display pixel and turn it into a directional beam of light. We call this effect – the reverse of focusing – collimation.

The direction of the beam changes with the position of the pixel under the lens. This way different pixels get mapped onto light beams propagating at slightly different directions. In the end this type of lenticular technology achieves the same goal as parallax barrier systems – making different sets of pixels be observable from different regions of space – without blocking any light.
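In the thin-lens approximation, a pixel sitting in the focal plane at some offset from the lens axis produces a collimated beam tilted to the opposite side, with a tangent equal to the offset divided by the focal length. The sketch below uses that textbook relation; the 1 mm focal length and 50 micrometer pixel steps are assumed values.

```python
import math

def beam_angle_deg(pixel_offset_m, focal_length_m):
    """Direction of the collimated beam from a pixel located in the
    focal plane of an ideal thin lens, measured from the lens axis.
    A pixel to the right of the axis sends its beam to the left."""
    return math.degrees(math.atan2(-pixel_offset_m, focal_length_m))

focal_length = 1e-3  # assumed 1 mm lenticule focal length
for offset_um in (-100, -50, 0, 50, 100):
    angle = beam_angle_deg(offset_um * 1e-6, focal_length)
    print(f"pixel offset {offset_um:+4d} micrometers -> beam at {angle:+.2f} degrees")
```

Neighboring pixels under the same lens therefore fan out into neighboring directions, which is how one lens serves several views.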

So why haven’t lenticular 3D displays invaded the market yet?

It’s not for lack of trying, since Toshiba released the first system in Japan in 2011. When you look at the details, there are some annoying visual artifacts that are fundamentally tied to the optics.

First, display pixels are usually composed of a small emissive area, surrounded by a large “black matrix” where no light is emitted. Through the lens, the emissive areas are mapped into certain directions of space, and the black matrix in some others. This results in very obvious dark zones between 3D views. The only way to solve that problem is to “defocus” the lens, but this introduces a large amount of cross-talk between views and the image becomes blurry.

Second, it is hard to obtain good collimation over a wide viewing angle with a single lens. This is why camera objectives and microscopes use compound lenses instead of single lens elements. Therefore lenticular systems are bound to have only a rather small field of view (about 20 degrees) where one can observe true motion parallax. Past that range, the 3D image repeats itself as if seen from the wrong angle, and becomes increasingly blurry.

The narrow field of view and poor visual transitions between views are the nemesis of lenticular displays. For TV systems, current lenticular technology might be OK if we consider that viewers will automatically position their heads at the good viewing spots and not move much.

However, as soon as we talk about mobile, automotive, or any other kind of application where arbitrary head motion must be tolerated, lenticular falls way short today.

So how do we go about designing a naked-eye 3D viewing system with a larger field of view and smooth transitions?

Eye-tracking

If I know where your eyes are located with respect to the display, I can compute the corresponding perspective and try to steer those images into their respective eye. As long as I can detect your eye position fast enough, and have an image steering mechanism that responds equally fast, I can ensure stereopsis from any viewpoint, and a smooth rendering of motion parallax.
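As a minimal sketch of the first step, here is how the direction of each eye could be computed from a tracked head position. It works in one horizontal dimension, assumes the eyes sit symmetrically around the head position, and uses an assumed 63 mm eye separation; the function name is hypothetical.

```python
import math

def steering_angles_deg(head_x_m, head_z_m, eye_separation_m=0.063):
    """Horizontal angle of each eye as seen from the display center.

    head_x_m is the sideways offset of the head from the display
    normal, head_z_m its distance from the screen. Returns a tuple
    (left_eye_angle, right_eye_angle) in degrees, where negative
    values mean the viewer's left side."""
    half = eye_separation_m / 2
    left = math.degrees(math.atan2(head_x_m - half, head_z_m))
    right = math.degrees(math.atan2(head_x_m + half, head_z_m))
    return left, right

print(steering_angles_deg(0.0, 0.5))   # viewer centered, 50 cm away
print(steering_angles_deg(0.10, 0.5))  # viewer shifted 10 cm sideways
```

A real system would then render one perspective per angle and steer each image accordingly, and it would have to repeat this at every tracking update.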

And that is the operating principle of eye-tracked autostereoscopic displays.

The beauty of this approach is that only two views need to be rendered at any time, thus preserving most of the display resolution. From a practical point of view, eye-tracked systems can be implemented with modern parallax-barrier technology, which makes them impervious to the optical artifacts present in lenticular systems.

Half of the light is lost in the barrier, but turning off the barrier results in a 2D image at full resolution and full brightness.

Eye-tracking is still not a panacea, though. First of all, it only works for a single viewer at a time. Even then, tracking the eyes requires additional cameras in the device, and high complexity software running constantly in the background trying to estimate the next eye position.

This might be fine for TV systems where form factor and power consumption are not a huge issue, but not for mobile devices where these two metrics are key.

Moreover, even the best eye-tracking systems lag or fail rather often due to changes in lighting, eye obstruction by hair or glasses, another pair of eyes presented to the camera, or simply because the viewer’s head motion is too fast. When the system fails, the viewer perceives wrong views which makes for a very unpleasant visual experience.
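To see why fast head motion is a problem, consider the simplest possible model: the steering error is the head speed multiplied by the system latency, and the tracker tries to compensate by extrapolating at constant velocity. This is only an illustrative sketch with made-up numbers; real trackers presumably use more elaborate prediction.

```python
def lag_error_m(head_speed_m_s, latency_s):
    """Distance the eye has moved by the time the image is steered,
    if nothing is done to predict the motion."""
    return head_speed_m_s * latency_s

def predict_position_m(prev_x_m, curr_x_m, dt_s, latency_s):
    """Constant-velocity extrapolation of the eye position,
    latency_s seconds into the future."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    velocity = (curr_x_m - prev_x_m) / dt_s
    return curr_x_m + velocity * latency_s

# Assumed values: head moving at 0.5 m/s, 50 ms total system latency.
print("uncorrected error (m):", lag_error_m(0.5, 0.05))
# Two samples 10 ms apart, predicting 20 ms ahead.
print("predicted position (m):", predict_position_m(0.00, 0.01, 0.01, 0.02))
```

With these assumed numbers the uncorrected error is a couple of centimeters, which is comparable to the spacing between the eyes, and any sudden change of direction defeats the extrapolation.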

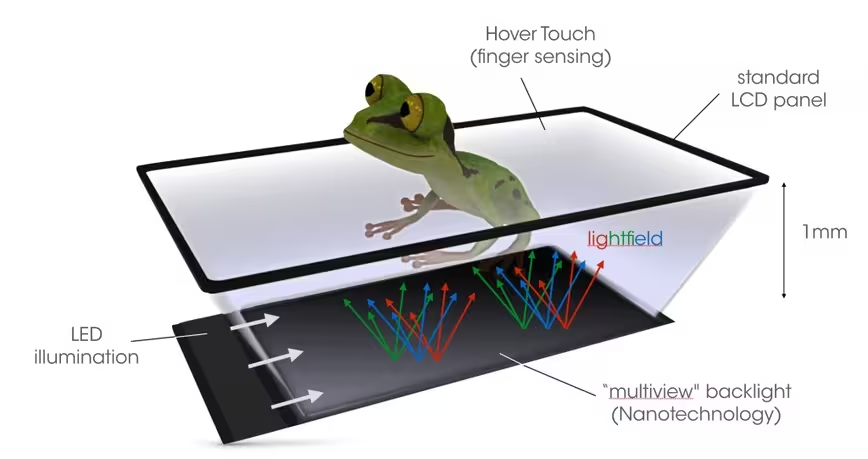

Diffractive backlighting

The most recent advancement in naked-eye 3D is the concept of a diffractive “multiview” backlight for LCD screens. Diffraction is a property of light that makes it bend when it interacts with sub-micron features, so this means we are entering the realm of nanotechnology.

Normal backlighting for LCD screens produces randomly distributed light rays, so each LCD pixel sends light in a wide region of space. A diffractive backlight, however, can produce a well ordered set of light rays – a lightfield – and can be set up so that a single directional light ray emerges from a given LCD pixel. In this manner, different pixels can send their signal in different (and completely arbitrary) directions of space.

Like lenticular lenses, the diffractive approach makes full utilization of the incident light. Unlike lenticules however, it can deal equally well with small and large emission angles, and allows full control over the angular spread of each view. If designed properly, no dark zones arise between views and the 3D image can stay as crisp as in the parallax barrier approach.

Another feature of the diffractive approach is that the light steering features do not interact with light propagating directly through the display.

In this way, complete transparency is preserved. This gives the option to add a regular backlight underneath and fall back to a 2D mode at the full resolution of the LCD panel, and paves the way to the development of see-through naked-eye 3D displays.

The main challenge of the diffractive method is color uniformity. Diffractive structures usually scatter light of different colors into different directions and this chromatic dispersion needs to be compensated at the system level somehow.
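The color dependence comes straight out of the grating equation. The sketch below uses its simplest textbook form (light hitting the grating head-on, emerging into air), which is an assumption made for illustration and not a description of how an actual multiview backlight is lit; the 1000 nm period is likewise made up.

```python
import math

def diffraction_angle_deg(wavelength_nm, period_nm, order=1):
    """Grating equation at normal incidence: sin(theta) = m * wavelength / period.
    Returns the outgoing angle in degrees, or None when that order
    does not propagate (sine would exceed 1)."""
    sine = order * wavelength_nm / period_nm
    if abs(sine) > 1:
        return None
    return math.degrees(math.asin(sine))

period = 1000  # assumed grating period in nanometers
for name, wavelength in (("blue", 450), ("green", 532), ("red", 650)):
    angle = diffraction_angle_deg(wavelength, period)
    print(f"{name:5s} ({wavelength} nm) -> {angle:.1f} degrees")
```

The same structure sends red, green and blue into noticeably different directions, so something elsewhere in the system has to bring the three colors of a given view back together.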

The next frontier: From 3D visuals to 3D interactions

The vision and hope of 3D does not stop at simply looking at 3D images, though. It opens an entirely new paradigm of user interaction.

It is now only a matter of time until 3D experiences reach a level of quality suitable for consumers and we lose that subconscious fear of breaking the 3D illusion.

In VR/AR headset systems, this means increasing the field of view, supporting accommodation and increasing the responsiveness of the system to fast head motion.

In naked-eye 3D displays, this means providing enough freedom for the user to move about the display without experiencing visual artifacts such as loss of 3D, dark zones, view jumps, or motion parallax lags.

Blending worlds

Once the illusion becomes convincing and resilient, we’ll forget about the particular technology and start experiencing the virtual world as the real world, at least until we start walking into physical walls. If we want to complete the illusion, we need to take into account the fact that the real world is reactive.

Just as we turned digital data into physical light signals interpretable by us in the real world, we need to take real world data about the mechanics of our bodies and feed that information back to the digital world so it can react.

In VR/AR headsets, this is achieved via a combination of sensors and cameras carried by the user or located in the nearby environment. In the future we can envision smart clothing carrying sensor arrays, although some might balk at the thought of having electrical wires running over their bodies.

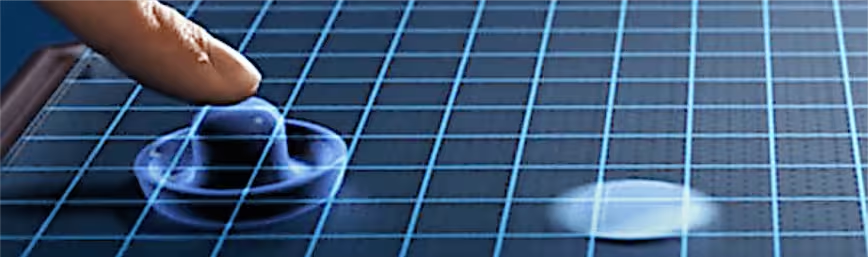

On a 3D screen, this task could be performed by the screen itself. In fact, Hover Touch technologies developed by Synaptics and others already allow finger sensing above the screen. Soon we’ll be interacting with holograms in mid-air by simply bringing our fingers to the screen.

Touching a hologram

Once the digital world understands the mechanics of the user, the two can be more naturally blended. In other words, the digital signals could open a door in the wall before we walk into it.

But wouldn’t it be better if it let me experience contact with the wall as in the real world? How do we bring the sense of touch to complete the illusion?

This is a question for the field of haptics, which you’ve already experienced if you’ve ever turned your phone to vibrating mode.

Imagine wearing gloves and other clothes filled with actuators able to vibrate or to physically stimulate your skin via weak electrical signals. If well-tuned, they could allow you to actually feel contact in different parts of your body based on your virtual encounters.

Putting on clothes full of wiring and electric signals might not be for everybody, of course.

For screen-based devices, ultrasonic haptics could let you experience touch in mid-air without the need for any smartwear. This technique emits ultrasonic waves from the screen contour and adjusts the waves so they reinforce each other under the user’s finger.

Believe it or not, these reinforced sound waves are powerful enough for your skin to feel them. Companies like Ultrahaptics are already trying to bring this technology to market.
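Making the waves “reinforce each other” at one spot comes down to timing: emitters farther from the finger fire first so that all wavefronts arrive together. The sketch below computes such delays for a handful of point emitters. The emitter layout and focal point are invented for the example, and the speed of sound is taken as 343 m/s (air at about room temperature).

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # air at roughly 20 degrees Celsius

def focusing_delays_s(emitters, focus):
    """Firing delay for each emitter so that all wavefronts reach the
    focal point at the same instant. The farthest emitter fires
    first (delay 0); closer ones wait. Positions are (x, y, z) in meters."""
    distances = [math.dist(e, focus) for e in emitters]
    farthest = max(distances)
    return [(farthest - d) / SPEED_OF_SOUND_M_S for d in distances]

# Three hypothetical emitters along a screen edge, finger 10 cm above the middle.
emitters = [(-0.05, 0.0, 0.0), (0.0, 0.0, 0.0), (0.05, 0.0, 0.0)]
finger = (0.0, 0.0, 0.10)

for position, delay in zip(emitters, focusing_delays_s(emitters, finger)):
    print(position, f"fires after {delay * 1e6:.1f} microseconds")
```

Moving the focal point only requires recomputing the delays, which is how the sensation could follow a finger tracked above the screen.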

Holographic Reality

While VR and AR headsets are trendy today, their mobile and social limitations present a challenge for any full-featured, interactive 3D experience. Screen-based 3D displays with touchless finger sensing and feeling could bridge that divide and provide us with a more direct way to interact with the digital world in the future.

In a recent blog post, I coined the term Holographic Reality (HR) to describe this kind of platform, and described a world where HR displays could be used extensively in all aspects of our daily lives. Every window, table top, wall and door will have HR capabilities, and every communication device in the office, home, car, or even in public places will be equipped with HR units. This would give us access to the virtual world anywhere, anytime, and without the need to wear headsets or electrical wires.

The next 5 years

The last five years have yielded some of the most exciting developments in 3D technologies, displays, and headsets.

Technology is advancing at a radical pace, and it’s not beyond imagination that in the next five years we’ll all start communicating, learning, driving, working, shopping or playing in a holographic world supported by a well developed 3D ecosystem, compatible with headsets and 3D screens alike.

Feature image credit: Giphy and simplesmente-me
