The Software Freedom Conservancy (SFC), a non-profit community of open-source advocates, today announced its withdrawal from GitHub in a scathing blog post urging members and supporters to rebuke the platform once and for all.

Up front: The SFC’s problem with GitHub stems from accusations that Microsoft and OpenAI trained an AI system called Copilot on data that was published under an open-source license.

Open-source code isn’t like a donations box where you can just take whatever you want and use it in any way you choose.

It’s more like photography. Even if a photographer doesn’t charge you to use one of their images, you’re still ethically and legally required to give credit where it’s due.

According to a blog post on the SFC site, Copilot doesn’t do that when it comes to using other people’s code snippets:

This harkens to long-standing problems with GitHub, and the central reason why we must together give up on GitHub. We’ve seen with Copilot, with GitHub’s core hosting service, and in nearly every area of endeavor, GitHub’s behavior is substantially worse than that of their peers. We don’t believe Amazon, Atlassian, GitLab, or any other for-profit hoster are perfect actors. However, a relative comparison of GitHub’s behavior to those of its peers shows that GitHub’s behavior is much worse.

Background: GitHub is the de facto repository for open-source code in the world. It’s like a combination of YouTube, Twitter, and Reddit, but for programmers and the code they produce.

Sure, there are other options. But switching from one code-repository ecosystem to another isn’t the same as trading Instagram for TikTok.

Microsoft acquired GitHub in 2018 for more than seven billion dollars.

In the time since, Microsoft has leveraged its position as OpenAI’s primary benefactor in a joint endeavor to build Copilot.

And the only way to get access to Copilot is through a special invitation from Microsoft or a paid subscription.

The SFC and other open-source advocates are upset because Microsoft and OpenAI are essentially monetizing other people’s code and stripping away the ability for those who use that code to give proper credit.

In other words: Microsoft is taking people’s work, stripping credit, and selling it to others via algorithms.

A solution: Kill Copilot. Alternatively, Microsoft and OpenAI could build a time machine, go back in time, and label every single data point in Copilot’s database so that a second model could be built that would apply proper credit to every output.

But it’s always easier to exploit the Wild West do-whatever-you-want regulatory environment and take advantage of people than it is to care about the ethics of the products and services you offer.

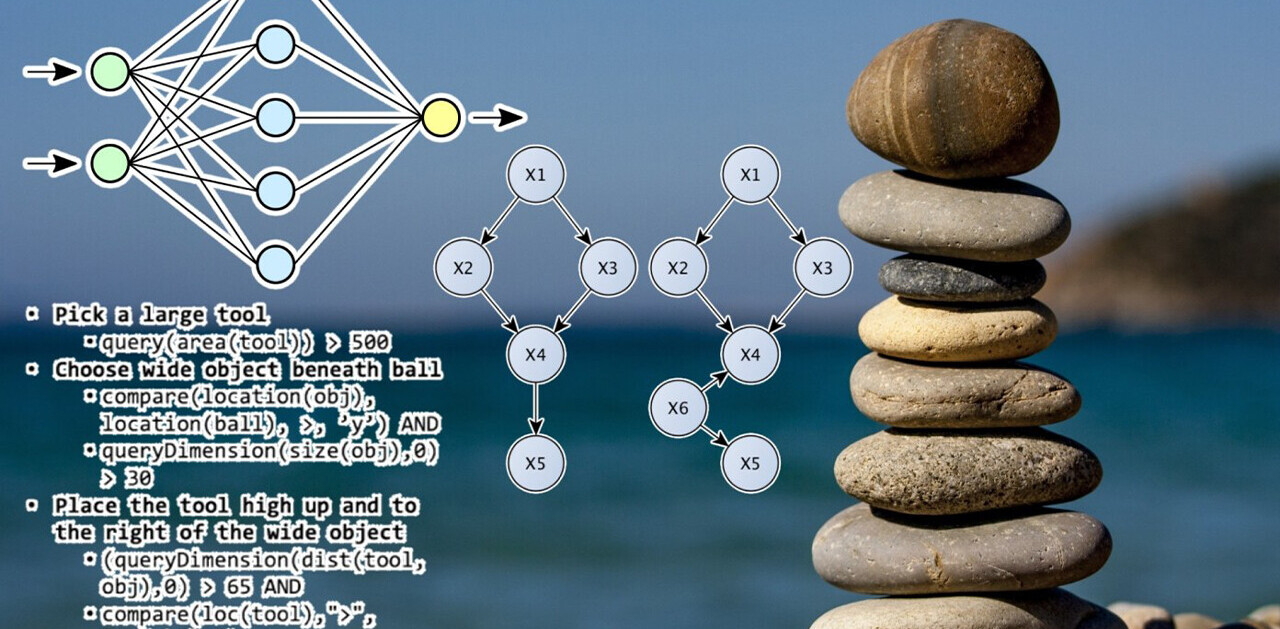

Neural’s take: When it comes to solid examples of AI that makes human lives easier, GitHub’s Copilot tops the list. It takes some of the tedious things that can take developers hours of work and makes them as easy as pushing a button or typing a few lines of text.

And there’s a bit of precedent here. GPT-3 and DALL-E are trained on datasets of human-generated media to generate novel outputs.

But there’s a key difference between those generators and Copilot. Drawing a duck in the style of Monet or asking GPT-3 to tell you a story about a happy dog is one thing.

Regurgitating code snippets line by line from files in a database isn’t coding in the style of someone else; it’s using someone else’s code.

It’s probably a bit more nuanced than that. There is, of course, sometimes more than one way to solve a coding problem. And coding is often as much art as it is science.

But just because you can take a picture of the setting sun with your iPhone doesn’t mean you can steal someone else’s sunset photograph, call it your own, and sell it to other people.

At the end of the day it doesn’t matter. Copilot is a hit. The dev community seems to absolutely love it. It’s gotten more positive press than any amount of naysaying is likely to affect.

Never mind what it’ll eventually do to the open-source community. Who needs open-source repositories when you can just work for free to make money for Microsoft?

The best thing is, you don’t get a choice. There’s no opting in or out. Microsoft and OpenAI have your data and there’s nothing stopping them from doing whatever they want with it. Resistance is futile.
