The Feminist Internet, a non-profit working to prevent biases from creeping into AI, has created F’xa, a feminist voice assistant that teaches users about AI bias and suggests how they can avoid reinforcing harmful stereotypes. Judging by a recent UNESCO report, it’s not a moment too soon.

In the report “I’d blush if I could” (titled after Siri’s response to “Hey Siri, you’re a bitch”), UNESCO notes that voice assistants often react to verbal sexual harassment in an “obliging and eager to please” manner.

That’s a big issue because most industry-leading voice assistants are female by default. Most have typically female names and markedly feminine voices. Just think about it: Apple’s Siri, Amazon’s Alexa, Microsoft’s Cortana, and Google Assistant.

Having female voices react to harassment in a benign way can undercut progress towards gender equality. F’xa tries to combat this norm of female servitude in our smart devices by challenging gender roles in our voice assistants, just as they should be challenged in real life.

This sounds terrific, but how does it actually go about making that happen?

How the feminist bot works

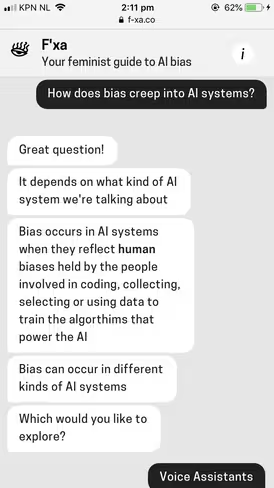

F’xa is built with feminist values in mind, and every response it gives is meant to uphold feminist beliefs and avoid reinforcing bias and stereotypes. F’xa was created by a diverse team using the Feminist Internet’s Personal Intelligent Assistant Standards and Josie Young’s Feminist Chatbot Design research.

In preparation for building F’xa, Young explored contemporary feminist techniques for designing technology, which together form the Feminist Chatbot Design Process: a series of reflective questions incorporating feminist design, ethical AI principles, and research on de-biasing data.

Accessed on a smartphone, the bot works to ensure designers don’t build gender inequalities into their chatbots, and it educates users on how current voice assistants put gender equality at risk.

For example, when F’xa was asked “How does bias creep into AI systems?” it replied: “Bias occurs in AI systems when they reflect human biases held by the people involved in coding, collecting, selecting, or using data to train the algorithms that power the AI.”

Smart devices are colonizing the consumer electronics market. According to F’xa, researchers estimate that 24.5 million voice-driven devices will be used daily in 2019, and predictions further suggest that 50 percent of searches will be made via voice command by 2050. So it’s probably a good thing someone is trying to fix the problems of voice assistants before fixing them becomes too difficult.

Despite the underrepresentation of women in AI development, voice assistants are almost always female by default and given feminine names and voices. The most popular tasks given to voice assistants usually mirror jobs historically associated with women, including setting kitchen timers, creating to-do lists, and putting together shopping lists, which fuels gender bias.

According to several studies, regardless of the listener’s gender, people typically prefer to hear a male voice when it comes to authority, but prefer a female voice when they need help. But F’xa believes tech companies like Apple have a responsibility to challenge these kinds of market preferences and not just blindly follow them.

If more women were on Silicon Valley’s company boards and in their dev teams, the way we imagine and develop technology would undoubtedly change, and with it our perspective on gender roles. One can’t help but wonder what Alexa would have sounded like, or what it would’ve been named, if more women had a hand in creating its technology.

If you’re a developer or a user, you can try the feminist bot out here.
