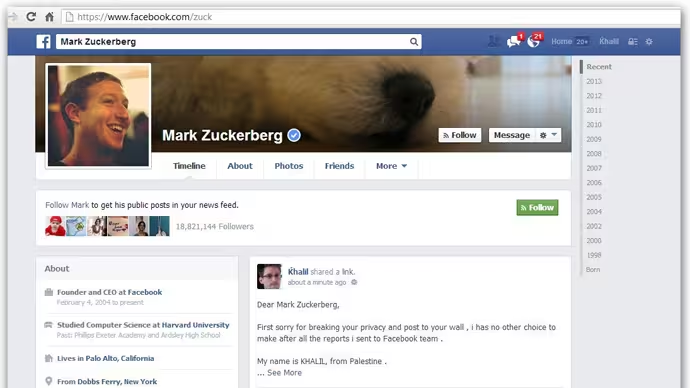

Facebook says it will make changes to clarify the processes behind its whitehat program for reporting bugs, after a frustrated researcher, having failed to gain the company’s attention, posted details of a vulnerability on CEO Mark Zuckerberg’s profile page.

Writing on the Facebook Security blog, Chief Security Officer Joe Sullivan explains that the company will improve its email messaging to make clear what is required to validate a bug, while it will also update its whitehat program pages to better explain how bugs should be reported.

The researcher, known only as ‘Khalil,’ found a way to post information on any user’s page. But he became frustrated after failing to convince Facebook that he had spotted a bug, despite three attempts to email its whitehat program contacts. At one point, he was even told that the issue he reported was “not a bug.”

The researcher decided to make his efforts public by posting details to Zuckerberg’s wall, after which the bug was quickly dealt with by Facebook.

Sullivan admits that Facebook “failed in our conversation” with him because it was “too hasty and dismissive” of his case.

“We should have explained to this researcher that his initial messages to us did not give us enough detail to allow us to replicate the problem. The breakdown here was not about a language barrier or a lack of interest — it was purely because the absence of detail made it look like yet another misrouted user report,” Sullivan writes, explaining that researchers typically provide more detail than Khalil did.

Despite the admissions, Facebook is not going to change its policy of withholding payouts from those who test vulnerabilities on users.

“It is never acceptable to compromise the security or privacy of other people. In this case, the researcher could have sent a more detailed report (like the video he later published), and he could have used one of our test accounts to confirm the bug,” Sullivan writes.

And yes, since Zuckerberg is a user — as well as CEO and founder — that means no money for Khalil, although his example has at least prompted Facebook to clarify its processes for the future.

We’ll see if the improved communication prevents other researchers from being forced into more visible actions to illustrate issues. Certainly, this incident was embarrassing for Facebook’s security staff, who will not want a repeat performance.

Headline image via laughingsquid / Flickr, screenshot via rt.com
