Facebook’s quarterly earnings report typically has one particularly eyebrow-raising statistic: the number of mobile users. That’s why the company’s new Oregon datacenter has a ‘Mobile Device Lab,’ which uses real devices to monitor Facebook performance in real time.

Scaling Facebook

In helping connect the world, Facebook encounters a unique problem. If it wants everyone to have the same experience, the app or mobile website has to act the same no matter which device you’re using.

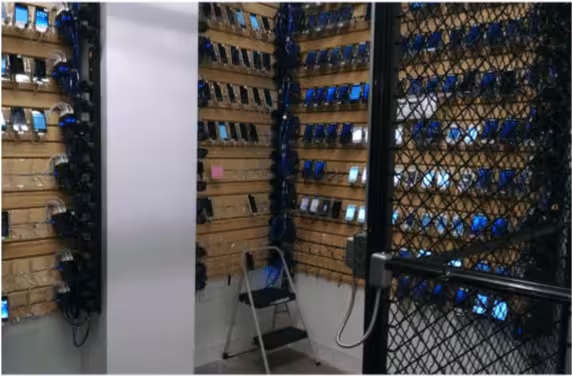

The Mobile Device Lab has racks of various devices — from flagships to bargain handsets — that hold up to 60 devices.

It started as a ‘sled’ that held devices in a metal rack, but the metal blocked Wi-Fi. A ‘Gondola’ was built (a skeezy plastic rack you’d see in a low-end department store display), but the cables running to and from it were clumsy.

The ‘Slatwall’ was an extension of the Gondola: basically an entire room that was a Gondola, which allowed Facebook to have 240 devices active at a given time. The company wanted 2,000 devices up and running, though, and keeping them at its Menlo Park headquarters wasn’t going to work.

So, it started rolling them out to its datacenters.

Racks and signals

It all sounds simple enough: plug some phones in, keep them on and run Facebook. But there are also datacenter issues to deal with. Phones, like buildings, are almost always different.

And testing phones is a fairly detailed process. There has to be a certain amount of isolation to accommodate a robust Wi-Fi signal, and a rack to power things along.

Those racks, which have either eight Mac Minis (for iOS devices) or four OCP Leopard servers (for Android), are custom-built and designed to function in an electromagnetic isolation chamber. Each Mac is connected to four iPhones, while each OCP server runs eight Android devices.

Each rack can accommodate up to 32 phones, and has its own Wi-Fi access point for the devices. Phones are presented on a slight slant so a camera can record what’s happening on-screen.
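The rack numbers above are easy to check with a little arithmetic. The Python sketch below is only an illustration: the `RackConfig` class and `racks_needed` helper are hypothetical names, not anything Facebook has published. The host counts, the phones-per-host figures, the 2,000-device goal and the planned 64-device rack all come from this article.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RackConfig:
    """Hypothetical description of one rack layout (names are illustrative)."""
    platform: str
    hosts: int            # Mac Minis or OCP Leopard servers in the rack
    phones_per_host: int  # phones attached to each host

    @property
    def capacity(self) -> int:
        # Total phones a rack can hold with this layout.
        return self.hosts * self.phones_per_host


# Figures reported in the article.
IOS_RACK = RackConfig("iOS", hosts=8, phones_per_host=4)
ANDROID_RACK = RackConfig("Android", hosts=4, phones_per_host=8)


def racks_needed(target_devices: int, per_rack: int) -> int:
    """Smallest number of full-size racks that can hold target_devices."""
    return math.ceil(target_devices / per_rack)


if __name__ == "__main__":
    for rack in (IOS_RACK, ANDROID_RACK):
        print(f"{rack.platform}: {rack.capacity} phones per rack")
    print("Racks for 2,000 devices at 32 each:", racks_needed(2000, 32))
    print("Racks for 2,000 devices at 64 each:", racks_needed(2000, 64))
```

Both layouts land on the same 32-phone ceiling, which suggests why doubling rack capacity to 64 would roughly halve the number of racks needed for the same fleet.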

Rack Wi-Fi is also carefully limited to the devices on its shelves. The physical wireless access point sits four feet from the devices (which Facebook says provides “sufficient attenuation of the signal”), and each rack is insulated from the others so as not to cause any signal interference. Facebook says it’s planning to open-source its plans for the racks.

Software

You may not realize it, but Facebook changes just about every day. Small tweaks and fixes are constantly incoming, and the apps are famously updated bimonthly.

For its servers, Facebook uses a package manager and a configuration manager named “Chef.” It helps the team make sure each server is running the proper configuration files, as well as the correct services. It also handles notifications for when configurations change.

Chef allows for uniformity across servers, and it is also critical in helping Facebook determine where any issues may lie. If it’s a server-side issue that’s causing a problem, Chef will let the team know. If Chef says everything is in tip-top shape but there are still performance issues on devices, Facebook knows it has a more complex problem.
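That process of elimination can be written down as a tiny decision function. This is a minimal sketch of the reasoning only: `triage`, `Diagnosis` and the two boolean inputs are hypothetical and are not part of Chef’s API or Facebook’s tooling. It assumes someone has already determined whether the server configuration is in its expected state and whether the devices are performing normally.

```python
from enum import Enum


class Diagnosis(Enum):
    """Hypothetical outcomes of the triage described in the article."""
    HEALTHY = "nothing to investigate"
    SERVER_SIDE = "server configuration is the likely culprit"
    NEEDS_INVESTIGATION = "servers look fine, so the problem is more complex"


def triage(server_config_ok: bool, device_perf_ok: bool) -> Diagnosis:
    """Narrow down where a problem lies from two coarse signals.

    server_config_ok: assumed to come from the configuration manager's report.
    device_perf_ok:   assumed to come from on-device performance tests.
    """
    if not server_config_ok:
        # A server-side problem is flagged first, as the article describes.
        return Diagnosis.SERVER_SIDE
    if device_perf_ok:
        return Diagnosis.HEALTHY
    # Configuration is clean but devices still misbehave.
    return Diagnosis.NEEDS_INVESTIGATION


if __name__ == "__main__":
    print(triage(server_config_ok=True, device_perf_ok=False).value)
```

The value of the configuration check is that it rules out a whole class of causes cheaply, leaving engineers to spend their time on the harder cases.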

The future

Facebook engineers used to simply deploy changes and test them on whatever device they had within arm’s reach. That was cool in the early days, but it’s 2016: Facebook is a juggernaut. The company doesn’t want you to say ‘Facebook is being weird today’ ever again.

It has plans for scaling its racks to accommodate up to 64 devices, but has a few hurdles to overcome first (Android servers need in-memory repo checkouts, and a new solution is needed; Chef’s support for iOS could be better, too). Racks may also have to be redesigned to fit larger phones.

Mobile Lab is also being thought of as a way for any team within Facebook to do on-device testing, so Facebook wants to make it as simple as possible. Currently, only the production engineering team uses it, and its tests, written in CT-Scan, don’t fit many other use cases in the company.
