Facebook is more than just a social network; it is also officially an online science laboratory. The company has revealed in a research paper that it carried out a week-long experiment that affected nearly 700,000 users to test the effects of transferring emotion online.

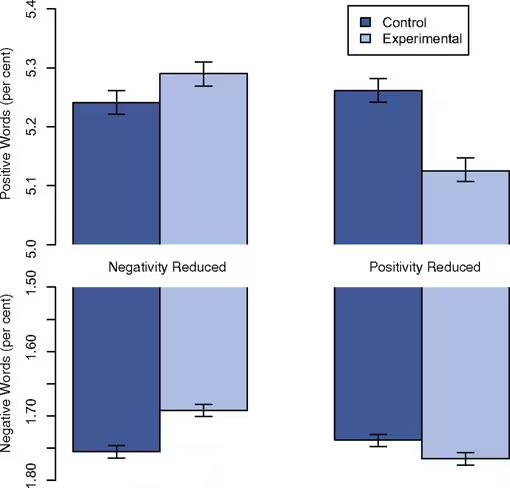

The News Feeds belonging to 689,003 users of the English language version were altered to see “whether exposure to emotions led people to change their own posting behaviors,” Facebook says. There was one track for those receiving more positive posts, and another for those who were exposed to more emotionally negative content from their friends. Posts themselves were not affected and could still be viewed from friends’ profiles. Instead, the trial edited what the guinea pig users saw in their News Feed, which is itself governed by a selective algorithm, as brands frustrated by the system can attest.

Facebook found that the emotion in posts is contagious. Those who saw positive content were, on average, more positive and less negative with their Facebook activity in the days that followed. The reverse was true for those who were tested with more negative postings in their News Feed.

It’s fairly well established that this is true in real life, i.e. seeing a friend upset can upset you personally, but Facebook found that “textual content alone appears to be a sufficient channel” to also have this effect.

The company believes that this study is one of the first of its kind to show these kinds of results, and certainly one of the largest-scale to date, but users may well be upset to hear about it. The company points out that it didn’t breach its terms (users give permission for experiments, among other things, when they sign up), but testing theories on people without any warning could be seen as an abuse of the social network’s popularity and position.

Even the editor of the report found the ethics behind it to be problematic, as The Atlantic points out. What do you think?

Update: Adam Kramer, a data scientist at Facebook who co-authored the report, told the BBC: “We felt that it was important to investigate the common worry that seeing friends post positive content leads to people feeling negative or left out.

“At the same time, we were concerned that exposure to friends’ negativity might lead people to avoid visiting Facebook.”

The report has caused quite a stir among Facebook’s users, and Kramer admitted Facebook failed to “clearly state [its] motivations in the paper”.

“I can understand why some people have concerns about it, and my co-authors and I are very sorry for the way the paper described the research and any anxiety it caused,” he added.

➤ Facebook Research Paper | Via New Scientist

Headline image via Adisorn Saovadee / Shutterstock
