You can tell a lot about the direction of a company by looking at its tech stack. A great example of this comes from Facebook, which today announced the creation of a brand new open-source DHCP server, as it had outgrown the existing products. This tool, called DHCPLB, will allow it to more efficiently scale its data center efforts.

First, a little bit of technical dreariness. Hang tight, dear reader, as this bit’s important.

DHCP stands for Dynamic Host Configuration Protocol. Although it’s unlikely to be familiar to non-technical readers, it’s worth knowing about, as it’s fundamental to how computer networks work.

In a nutshell, DHCP automatically assigns networking information (like IP addresses) to hosts on a network, thereby saving a poor sysadmin from having to do it manually. This makes it possible to connect a laptop to a network, without needing a PhD in Computer Science. It also makes it easier for large companies, like Facebook, to add new server infrastructure without needing much manual involvement.

In the case of Facebook, it uses DHCP to assign IP addresses to out-of-band gear, as well as to provision operating systems on bare-metal hardware. As Facebook’s traffic and hardware needs grow, so too does its dependence on this one crucial protocol. The problem is that the existing DHCP servers aren’t exactly equipped for operating at the same scale as Facebook, which is one of the world’s most intensely trafficked websites.

For much of the company’s life, Facebook used the open-source Kea DHCP server, which is a single-threaded application. Over the years, it became increasingly apparent that the company had outgrown Kea, forcing it to look for alternatives.

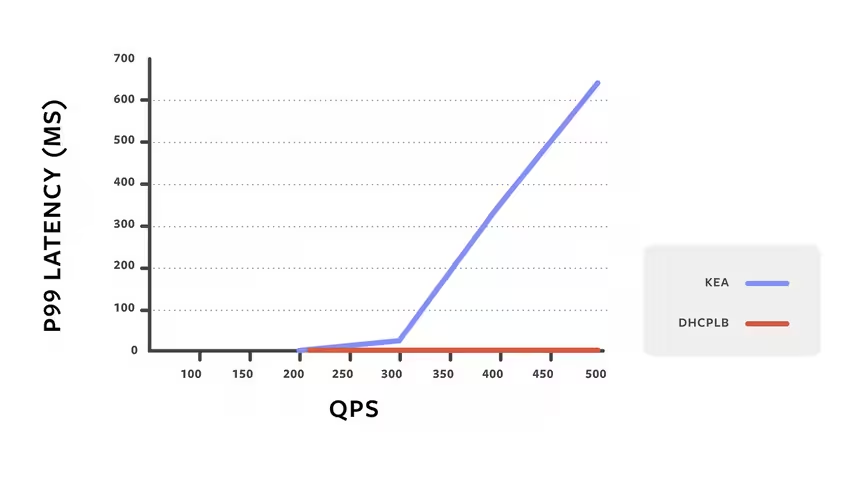

“The single-threaded nature of the software means that only a single transaction may be processed at a time; thus, if each backend call takes 100ms, then a Kea instance will be capable of doing, at maximum, 10 queries per second (QPS),” wrote Pablo Mazzini, Production Engineer at Facebook, in a blog post.

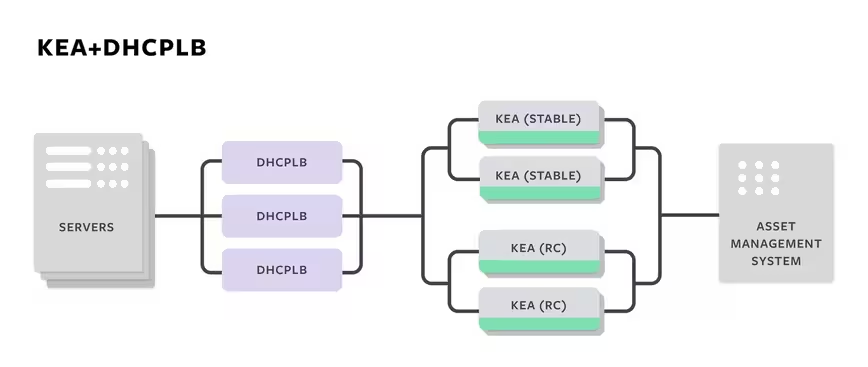

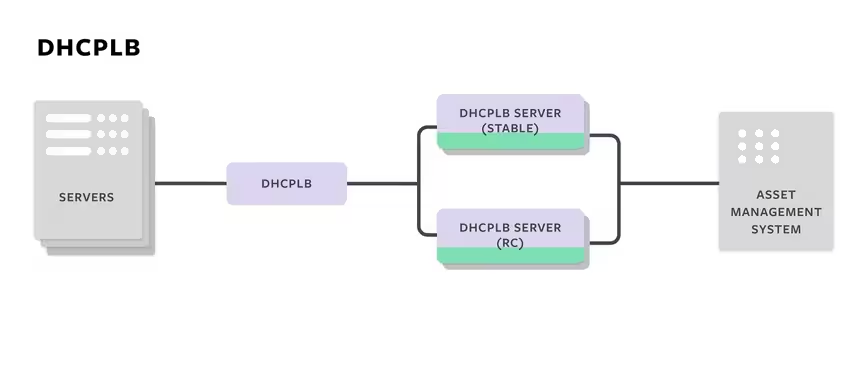

In 2016, Facebook built a load balancer called DHCPLB (in fact, the server announced today by Facebook is an evolution of this load balancer). This was built using Google’s Go programming language, which is designed to make it easier to build programs that take advantage of multi-core processors.

In its earliest incarnation, DHCPLB allowed Facebook to balance DHCP requests across its Kea servers, whilst also allowing it to experiment with its DHCP setup by A/B testing configurations.
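For a rough idea of how a load balancer can split traffic for A/B testing, here is a minimal sketch in Go. It is not taken from DHCPLB’s source: the `pickPool` function, the pool names and the idea of hashing the client’s MAC address are all assumptions made for illustration.

```go
package main

import (
	"fmt"
	"hash/fnv"
)

// pickPool is a hypothetical router: it hashes the client's MAC address and
// sends roughly candidatePercent of clients to the "candidate" pool, with the
// rest going to "stable". The same MAC always lands in the same pool.
func pickPool(mac string, candidatePercent uint32) string {
	h := fnv.New32a()
	h.Write([]byte(mac)) // Write on an FNV hash never returns an error
	if h.Sum32()%100 < candidatePercent {
		return "candidate"
	}
	return "stable"
}

func main() {
	// Example MAC addresses, invented for this sketch.
	macs := []string{"aa:bb:cc:00:00:01", "aa:bb:cc:00:00:02", "aa:bb:cc:00:00:03"}
	for _, mac := range macs {
		fmt.Println(mac, "->", pickPool(mac, 10))
	}
}
```

Hashing on a stable client identifier, rather than picking at random per packet, keeps each machine talking to the same configuration for the duration of a test.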

Although this improved performance, it wasn’t enough for Facebook’s uniquely demanding needs. The fundamental problem with Kea, namely that it was a single-threaded application, hadn’t been addressed. As a result, Facebook was still facing massive bottlenecks.

It then decided to reinvent DHCPLB as a fully-featured DHCP server.

“The new setup allowed us to take advantage of the multithread design to prevent the new server from blocking and queuing up packets when doing back-end calls. We first had to make changes to DHCPLB to add a new mode that doesn’t forward packets but instead generates responses,” wrote Mazzini.

Facebook wanted to reduce the latency of DHCP requests, and so far, it’s been a rousing success. A chart published by the company shows that as DHCP requests rise, latency remains pretty much constant. This has allowed Facebook to remove Kea from its infrastructure.

“DHCPLB gives us the ability to A/B test changes on the server implementation. Even after we rolled out DHCPLB, we continued to run the Kea servers in parallel so we could monitor error logs until we were confident that the replacement would be at least as reliable as Kea had been. We have since deprecated the Kea DHCPv6 server,” he added.

Most tech companies, even the most successful ones, wouldn’t need to reinvent this particular wheel. Broadly speaking, this problem is a unique one. It serves as an indicator of the immense scale at which Facebook operates, and in particular, emphasizes its constant need to expand its server infrastructure to cope with user demand.

Everyone knows that Facebook is mind-bogglingly big. The existence of DHCPLB shows how mind-bogglingly big.

If you’re the CTO of Amazon or Google, or just want to have a look under the hood of DHCPLB, you can download it from GitHub here. And if you’re curious to read the reasoning behind its existence in more detail, the announcement blog is here.
