Parents have been keeping an eye on what their kids do on the internet for as long as it has been around. Tools like Kiddle, a search engine designed for kids, aim to relieve parents of this duty and let the software do the job for them.

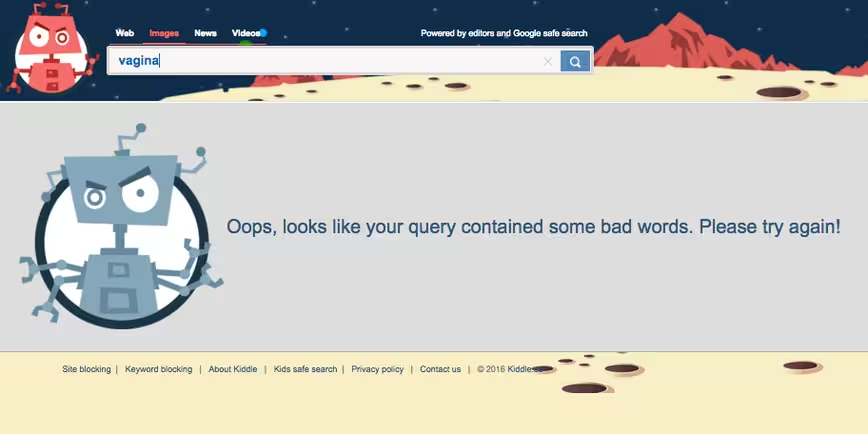

There’s one problem with this though – Kiddle is determining what it regards as safe for your kids. So what does that mean? Well, it means your daughter won’t be able to check if the cramps she has in her tummy might be her first period, and your kids won’t be able to search for a clear definition of what it means to be gay or transsexual, so they might have to rely on what they hear in the playground.

Kiddle has a team of editors that manually selects the terms it deems ‘unsafe’. These include: LGBT, lesbian, gay, sex, intercourse, menstruation, circumcision, death, birth, terror, self harm, self harm help, suicide and many, many more.

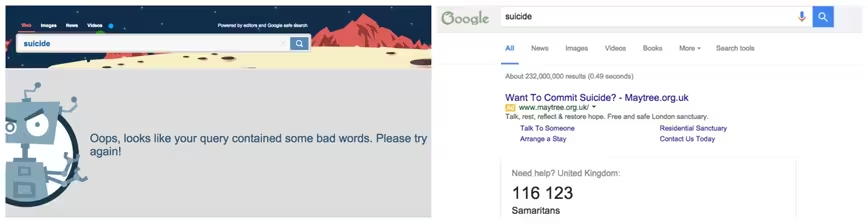

If I search the term ‘suicide’ on Google, I am immediately given a prompt to call the Samaritans and asked if I need help. Kiddle appears to take the stance that ignorance is bliss and has blocked all terms relating to suicide, offering zero help or support for kids who might be in need.

It would be much more helpful if Kiddle offered a tailored result for searches relating to self-harm, suicide or depression. I imagine that would be more comforting to parents as well.

I don’t have kids yet, and I can’t begin to imagine how difficult it is to protect them from the harmful side of the internet. But I also know that I would never rely on software to do the parenting for me, which is what Kiddle is trying to do. That said, not every parent is internet-savvy, and no parent should have to worry that something made for kids is actually hindering their children’s development.

Instead of blocking terms like LGBT and intercourse, Kiddle would do much better to help educate kids about these things in an age-appropriate manner, rather than adding to the stigma.

Suicide, lesbian, gay, menstruation – these are not bad words. These are everyday things that children will deal with, regardless of whether Kiddle blocks them or not. It’s only blocking them from the internet, not real life, and preventing kids from learning appropriately.

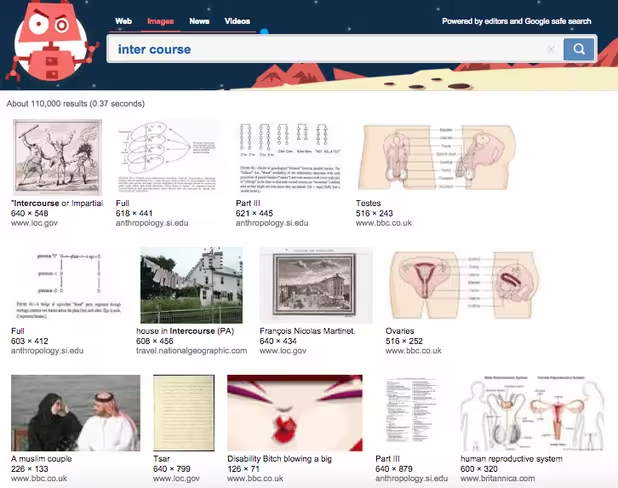

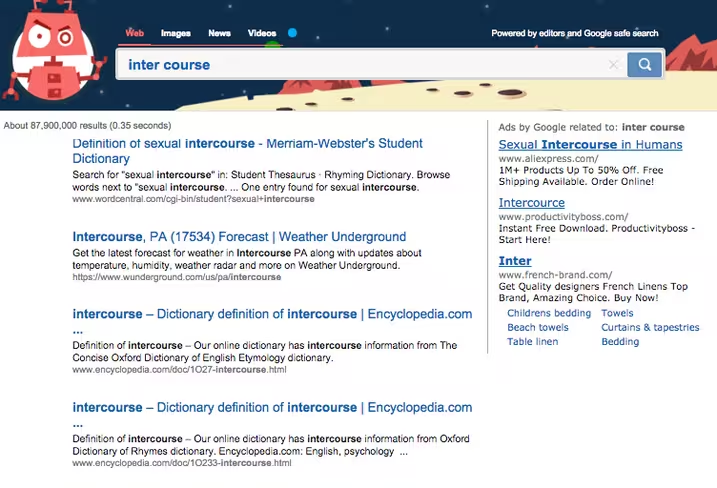

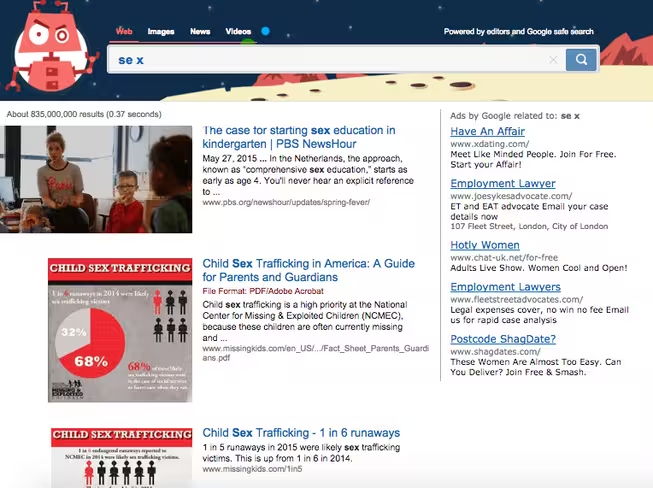

The apparently child-friendly search engine isn’t entirely locked down though: a simple spelling mistake or breaking up of the words can get you detailed results on things of a sexual nature.

If I could find these in less than two minutes, I have no doubt that any internet-savvy kid can do the same.
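To see why a misspelling or a split-up word can slip past a filter like this, here is a minimal Python sketch. It assumes the filter is nothing more than a list of blocked words matched against the query; Kiddle hasn’t said how its filter actually works, so the term list and the function names below are hypothetical.

```python
import re

# Hypothetical blocklist: a few of the terms reported as blocked in this article.
BLOCKED_TERMS = {"sex", "intercourse", "suicide"}


def is_blocked_exact(query: str) -> bool:
    """Naive filter: block only when a whole word in the query is on the list."""
    words = re.findall(r"[a-z]+", query.lower())
    return any(word in BLOCKED_TERMS for word in words)


def is_blocked_normalized(query: str) -> bool:
    """Stricter variant: also remove spaces and punctuation before matching,
    so a term that has been split into pieces is still caught."""
    squashed = re.sub(r"[^a-z]", "", query.lower())
    return is_blocked_exact(query) or any(term in squashed for term in BLOCKED_TERMS)


if __name__ == "__main__":
    for query in ["suicide", "sui cide", "suicde", "Essex history"]:
        print(query, is_blocked_exact(query), is_blocked_normalized(query))
```

In this sketch the exact-match filter lets both the split-up and the misspelled query through. Stripping the spaces catches the split-up version but still misses the typo, and it starts blocking innocent searches that merely contain a listed word, which is the trade-off any list-based filter has to deal with.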

Kiddle’s parent company isn’t easily identifiable from its website; it just states that it is ‘powered by editors and Google safe search.’ However, it is not related to Google in any way, despite its similar styling and colored logo.

It has been reported that it is the work of Vladislav Golunov, the founder of FreakingNews.com – a site that hosts viral photoshop contests – and that site’s Twitter page has been actively promoting Kiddle.

While the intentions of Kiddle’s creator may have been good, in my opinion it needs some work before it can be regarded as truly useful. Right now, the search engine isn’t protecting kids because it’s still possible to find explicit content within seconds.

Nor is it helping to educate or support children, because it ignores and blocks common things that children need to learn about. If it’s doing neither, I struggle to see the value in the platform at all.

➤ Kiddle
