Simulating the universe is difficult. There are, after all, potentially infinite variables to consider.

Scientists typically use supercomputers to crunch data at the cosmological level, but a team of researchers from Carnegie Mellon recently figured out how to run advanced simulations on graphics processing units (GPUs), using the same machine learning technology that teaches AI to paint or create music like a human.

Yes, the same hardware and neural networking technology that powers “This Person Does Not Exist” can now simulate our universe at high resolution. This is huge. It could very well change the way we perceive the universe and understand the laws of physics.

Background: According to the research team, it would take about 23 days to run a cosmological simulation on a single processing core using traditional methods.

For this reason, researchers tend to use supercomputers for these types of simulations. This is because physics is still an open problem. We don’t have a single set of rules governing the universe that we can apply across its entirety: scientists haven’t figured out how to reconcile the laws of classical physics with what they’ve observed in the quantum realm.

And that means we have to make crap up as we go along. Scientists have to experiment with different values when it comes to, for example, predicting how much dark matter there is in the universe. In this way it’s a massive feat of trial-and-error. They run the simulations, check them against what we can observe locally with space telescopes and other data-collection sources, and then repeat.

The problem

Running a supercomputer is expensive. Just renting one can cost thousands of dollars an hour. And the carbon footprint of a supercomputer is sasquatch-sized compared to the relatively diminutive power consumption of a single GPU.

Supercomputers aren’t especially good solutions for processing problems that intentionally require trial-and-error.

The solution

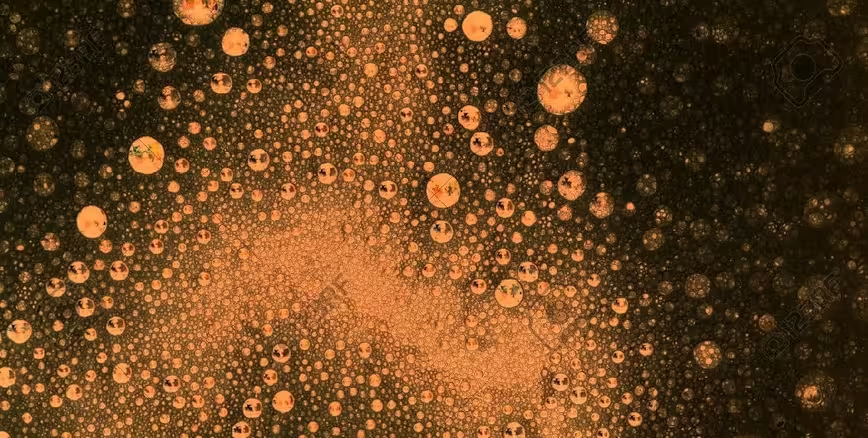

The researchers distilled the problem down to this: we can currently make high-resolution images of small patches of simulated universes and we can make low-resolution images of large simulated areas, but it takes too much time, energy, and power to make high-resolution images of large simulated areas.

This is a work-stopper when it comes to attempting to simulate the entire cosmos. The solution, of course, is AI.

Instead of teaching an AI to simulate a universe by generating it procedurally (again, something that could hypothetically involve an infinite number of variables), the CMU team taught it to hallucinate entire areas in high resolution.

And this makes things faster. How much? According to a press release written by Jocelyn Duffy of Carnegie Mellon University:

The trained code can take full-scale, low-resolution models and generate super-resolution simulations that contain up to 512 times as many particles. For a region in the universe roughly 500 million light-years across containing 134 million particles, existing methods would require 560 hours to churn out a high-resolution simulation using a single processing core. With the new approach, the researchers need only 36 minutes.

The results were even more dramatic when more particles were added to the simulation. For a universe 1,000 times as large with 134 billion particles, the researchers’ new method took 16 hours on a single graphics processing unit. Using current methods, a simulation of this size and resolution would take a dedicated supercomputer months to complete.
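For a sense of scale, here is a quick back-of-the-envelope check of the figures quoted above, written as a small Python sketch. The function names are made up for illustration, and the per-axis number assumes the 512-fold increase in particles is spread evenly across three dimensions, which the press release doesn't spell out.

```python
# Back-of-the-envelope arithmetic on the press release figures.
# All constants are copied from the quoted text; the helper names are
# hypothetical and exist only for this illustration.

HOURS_TRADITIONAL = 560            # single core, existing methods
MINUTES_AI = 36                    # new approach, same region
PARTICLES_SMALL = 134_000_000      # 134 million particles
PARTICLES_LARGE = 134_000_000_000  # 134 billion particles
PARTICLE_FACTOR = 512              # "up to 512 times as many particles"


def speedup(hours_traditional, minutes_ai):
    """How many times faster the new approach is, by wall-clock time."""
    return hours_traditional * 60 / minutes_ai


def hours_to_days(hours):
    """Convert a runtime in hours to days."""
    return hours / 24


def per_axis_factor(particle_factor):
    """Resolution gain along each axis, ASSUMING the particle increase
    is spread evenly over three dimensions."""
    return round(particle_factor ** (1 / 3))


if __name__ == "__main__":
    print(f"Speedup: about {speedup(HOURS_TRADITIONAL, MINUTES_AI):.0f}x")
    print(f"560 hours is about {hours_to_days(HOURS_TRADITIONAL):.1f} days")
    print(f"Particle ratio, large vs small: {PARTICLES_LARGE // PARTICLES_SMALL}x")
    print(f"Per-axis resolution gain: {per_axis_factor(PARTICLE_FACTOR)}x")
```

The 560 hours works out to a little over 23 days, which lines up with the 23-day figure the research team gave for a single processing core, and 36 minutes against 560 hours is a speedup of roughly 933 times.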

This doesn’t mean the AI “knows” what the universe beyond our reach looks like. It means it can convincingly upscale low-resolution simulation images to high resolution, thus making it possible for scientists to generate these large high-resolution images using much, much less time, energy, and power.

In essence, it’s like giving an AI a rough sketch of a movie and having it spit out what it thinks the completed work would look like without actually having to film the movie.

Quick take: It’s a lot more complicated than that, and, in the case of simulating our universe, we do know what the final product looks like – you can verify by walking outside on a clear night and looking up. What we don’t know is exactly how it got this way.

The fact that we can take cosmology simulations off of supercomputers and run them on glorified gaming PCs will democratize the ability for researchers to test new ideas quickly and efficiently.

This work is a rising tide that lifts all vessels. It has the potential to change our view of the cosmos and, with any luck, help us come up with a better origin story for dark matter, gravity, and the universe as a whole.

You can read the whole study here.
