Should musicians, whose job relies on the unpredictable and mysterious workings of human imagination, heed the warnings about artificial intelligence driving humans into unemployment?

Apparently, the answer to that question is a bit complicated.

Artificial intelligence is gradually transforming into a general-purpose technology that permeates virtually every aspect of human life and society. Just as electricity, another general-purpose technology, changed music and musical instruments, AI algorithms will inevitably change the way we create and perform music.

While this does not mean an end to the era of human musicians, it is fair to say that some dramatic changes lie ahead.

How does artificial intelligence apply to music?

At the heart of recent artificial intelligence breakthroughs are machine learning algorithms, programs that find patterns in large sets of data. Machine learning has made it possible to automate both cognitive and physical processes that are difficult or impossible to define through rule-based programming. This includes tasks such as image classification, speech recognition and translation.

When given musical data, machine learning algorithms can find the patterns that define each style and genre of music. But there’s more to it than classification and copyright protection. As researchers have shown, machine learning algorithms are capable of creating their own unique musical scores.
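To make the idea of "finding patterns and then creating new scores" concrete, here is a deliberately tiny sketch. It is not how Magenta, Flow Machines or folk-rnn work (those projects rely on far more capable machine learning models); it is a simple note-to-note transition table, and the two training melodies and all function names are made up for illustration.

```python
import random
from collections import defaultdict


def learn_transitions(melodies):
    """Record, for every note, the notes that followed it in the training tunes."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current].append(following)
    return transitions


def generate(transitions, start, length, seed=None):
    """Build a new melody by repeatedly picking a note seen after the last one."""
    rng = random.Random(seed)
    melody = [start]
    while len(melody) < length:
        options = transitions.get(melody[-1])
        if not options:  # dead end: nothing ever followed this note in training
            break
        melody.append(rng.choice(options))
    return melody


# Hypothetical training data: two short tunes written as note names.
training_melodies = [
    ["C", "D", "E", "C", "E", "G", "E", "D", "C"],
    ["E", "G", "A", "G", "E", "D", "C", "D", "E"],
]

transitions = learn_transitions(training_melodies)
new_melody = generate(transitions, start="C", length=8, seed=1)
print(" ".join(new_melody))
```

Every step in the generated melody is a note-to-note move that appeared somewhere in the training tunes, yet the sequence as a whole may be one that neither tune contains. Real systems capture much longer-range structure, but the principle of learning from examples and then sampling something new is the same.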

An example is Google’s Magenta, a project that is looking for ways to advance the state of machine-generated art. Using Google’s TensorFlow platform, the Magenta team has already managed to create algorithms that generate melodies as well as new instrument sounds. Magenta is also exploring the broader intersection of AI and arts, and is delving into other fields such as generating paintings and drawings.

Flow Machines, a project directed by Sony’s Computer Science Laboratories, has also made inroads in creating musical works with artificial intelligence. The research team trained its machine learning algorithm with 13,000 melodies from different music genres, and then let it work its magic and create its own piece of art. The result was Daddy’s Car, a song that mimics the style of The Beatles. While the lyrics were created by a human, the music was totally made by the algorithm.

Another interesting project is folk-rnn, a machine learning algorithm developed by a group of researchers at Kingston University and Queen Mary University of London. After ingesting a repertoire of 23,000 Irish folkloric tunes, folk-rnn was able to come up with its own unique Irish musical scores.

None of the research teams claim their algorithms will replace musicians and composers. Instead, they believe AI algorithms will work in tandem with musicians and help them become better at their craft by boosting their efforts and assisting them in ways that weren’t possible before. For instance, an AI algorithm can provide composers with a starting point by generating the basic structure of a song and letting them do the tunings and adjustments.

Real applications of AI in the music industry

While the work of researchers is geared toward shaping the future of music, a number of startups are already using AI to fulfill real-life use cases.

An example is Jukedeck, a website that uses machine learning to create music tracks. Users specify a number of parameters such as style, mood and tempo, and Jukedeck generates a unique song. Jukedeck doesn’t aim to create perfect, award-winning music. Instead, it wants to cater to the needs of people and companies looking for affordable and quick access to decent, royalty-free production music that could be used in videos and presentations.

AI Music is another startup that is active in the space. However, rather than creating music, the company uses AI to alter existing music to better fit the context in which it is being played. For instance, if the music is being played on a video, AI Music can automatically remix and adjust its tempo to match the footsteps of a person being displayed, or the thrill of a high-speed car chase. It can also alter the instruments to fit different moods such as working out in the gym or waking up in the morning.

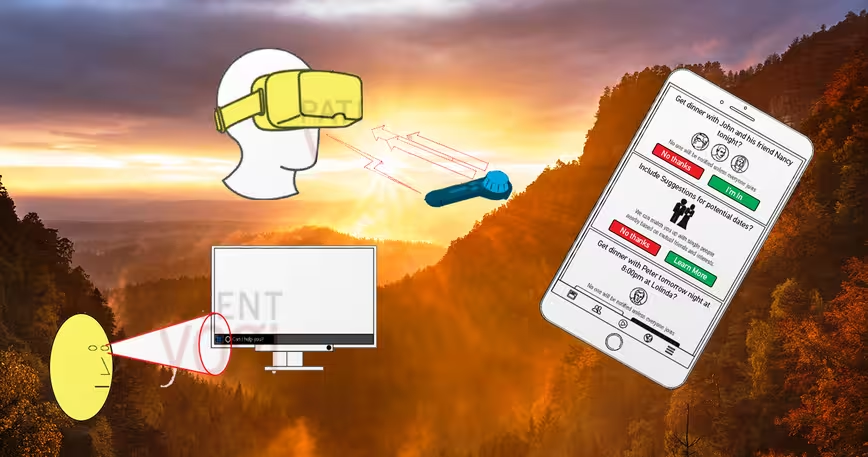

Australian startup Popgun is working on a deep learning algorithm that listens to a sequence of notes that a human musician plays and generates a sequence that could come after. Still in development, Popgun works in a back-and-forth duet style, but its developers believe it will eventually evolve into an intelligent technology that collaborates with human performers in real time.

https://www.youtube.com/watch?v=y_zUtY05TuM

None of these developments are threatening the jobs of Hans Zimmer and Ramin Djawadi. To be fair, nothing short of human-level AI will threaten the complexity of human creativity. However, for the moment, AI is making the creation of music easier. This might obviate the need for many skills that were previously hard to come by. But overall a little AI help can benefit professional composers as well as consumers and music lovers.
