Despite their enormous speed at processing reams of data and producing valuable output, artificial intelligence applications have one key weakness: their brains are located thousands of miles away.

Most AI algorithms need huge amounts of data and computing power to accomplish tasks. For this reason, they rely on cloud servers to perform their computations and are capable of little at the edge, meaning the mobile phones, computers and other devices where the applications that use them actually run.

In contrast, we humans perform most of our computation and decision-making at the edge (in our brains) and turn to other sources (the internet, libraries, other people) only when our own processing power and memory won’t suffice.

This limitation makes current AI algorithms useless or inefficient in settings where connectivity is sparse or nonexistent, or where operations must be performed in a time-critical fashion. However, scientists and tech companies are exploring concepts and technologies that will bring artificial intelligence closer to the edge.

Distributed computing on blockchain

A lot of the world’s computing power goes to waste as millions of devices sit idle for much of the time. Coordinating and combining these resources would let us make efficient use of computing power, cut costs and create distributed servers that can run algorithms on data at the edge.

Distributed computing is not a new concept, but technologies like blockchain can take it to a new level. Blockchain and smart contracts enable multiple nodes to cooperate on tasks without the need for a centralized broker.

This is especially useful for the Internet of Things (IoT), where latency, network congestion, signal collisions and geographical distance are among the challenges of processing edge data in the cloud. Blockchain can help IoT devices share compute resources in real time and execute algorithms without a round trip to the cloud.

Another benefit of using blockchain is that it incentivizes resource sharing: participating nodes can earn rewards for making their idle computing resources available to others.
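To make the resource-sharing idea concrete, here is a minimal Python sketch of a requestor splitting a job across idle provider nodes and crediting each provider for the chunks it completes. It is a toy built on stated assumptions, not how any real blockchain platform works: the `Provider` and `Ledger` classes and the `run_job` function are hypothetical, the ledger is a plain dictionary standing in for a smart contract, and the providers' work is simulated locally.

```python
from dataclasses import dataclass, field


@dataclass
class Provider:
    # A node offering spare compute; `idle` is a hypothetical availability flag.
    name: str
    idle: bool = True


@dataclass
class Ledger:
    # Stand-in for a smart contract: tracks rewards owed to each provider.
    balances: dict = field(default_factory=dict)

    def credit(self, provider: str, amount: int) -> None:
        self.balances[provider] = self.balances.get(provider, 0) + amount


def run_job(data, providers, ledger, chunk_size=4, reward_per_chunk=1):
    """Split `data` into chunks, hand each chunk to an idle provider in
    round-robin order, and pay that provider a fixed reward per chunk."""
    idle = [p for p in providers if p.idle]
    if not idle:
        raise RuntimeError("no idle providers available")
    results = []
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    for n, chunk in enumerate(chunks):
        provider = idle[n % len(idle)]
        # Simulated work, computed locally here: square each value.
        results.extend(x * x for x in chunk)
        ledger.credit(provider.name, reward_per_chunk)
    return results


providers = [Provider("alice"), Provider("bob"), Provider("carol", idle=False)]
ledger = Ledger()
squares = run_job(list(range(10)), providers, ledger)
print(ledger.balances)  # {'alice': 2, 'bob': 1}
```

Only idle nodes receive work, and rewards accrue per completed chunk, which mirrors the incentive described above in the simplest possible form.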

A handful of companies have developed blockchain-based computing platforms. iEx.ec, a blockchain company that bills itself as the leader in decentralized high-performance computing (HPC), uses the Ethereum blockchain to create a market for computational resources that can serve a variety of use cases, including distributed machine learning.

Golem is another platform that provides distributed computing on the blockchain, letting applications (requestors) rent compute cycles from providers. Among Golem’s use cases are training and executing machine learning algorithms. Golem also has a decentralized reputation system that lets nodes rank their peers based on their performance on assigned tasks.
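Golem’s actual reputation algorithm isn’t described here, so the sketch below shows just one hypothetical way peers could be ranked: each peer’s score is an exponential moving average of its task outcomes. The `ReputationBook` class, the `alpha` smoothing weight and the 0.5 starting score are all assumptions for illustration.

```python
from collections import defaultdict


class ReputationBook:
    """Hypothetical peer-ranking scheme: an exponential moving average of
    task outcomes (1.0 = task done correctly and on time, 0.0 = failed)."""

    def __init__(self, alpha=0.3, prior=0.5):
        self.alpha = alpha                        # weight given to the newest outcome
        self.scores = defaultdict(lambda: prior)  # unseen peers start at the prior

    def record(self, peer, outcome):
        if not 0.0 <= outcome <= 1.0:
            raise ValueError("outcome must be in [0, 1]")
        old = self.scores[peer]
        self.scores[peer] = (1 - self.alpha) * old + self.alpha * outcome

    def ranking(self):
        # Peers sorted from most to least trusted.
        return sorted(self.scores, key=self.scores.get, reverse=True)


book = ReputationBook()
book.record("node-a", 1.0)
book.record("node-b", 0.0)
book.record("node-c", 1.0)
book.record("node-c", 1.0)
print(book.ranking())  # ['node-c', 'node-a', 'node-b']
```

A moving average lets recent behavior outweigh old history, so a peer that starts failing tasks loses standing quickly.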

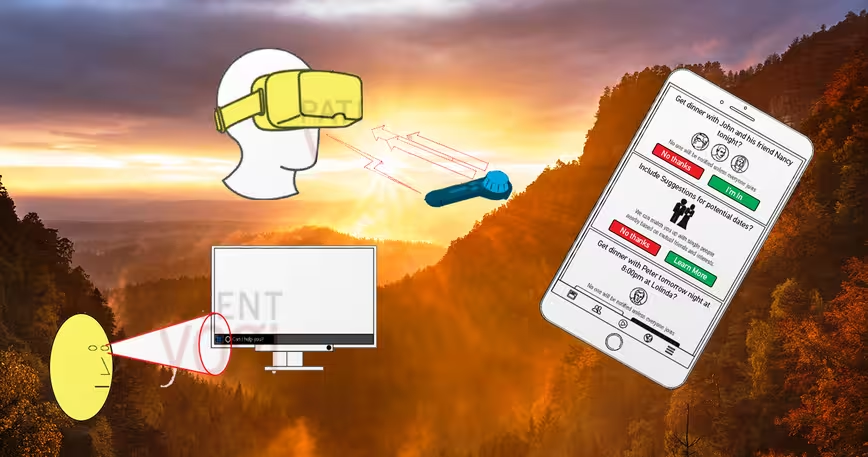

Portable AI coprocessors

From landing drones to running AR apps and navigating driverless cars, there are many settings where running deep learning in real time at the edge is essential. The delay caused by a round trip to the cloud can have disastrous or even fatal results, and a network disruption could bring operations to a complete halt.
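A quick back-of-the-envelope calculation shows why the round trip matters. The figures below are assumed values chosen for illustration, not measurements: a car traveling at 100 km/h, a 200 ms cloud round trip and 10 ms of on-device inference.

```python
def distance_during_delay(speed_kmh, delay_ms):
    """Meters traveled while waiting `delay_ms` milliseconds at `speed_kmh`."""
    speed_ms = speed_kmh * 1000 / 3600   # km/h -> m/s
    return speed_ms * delay_ms / 1000    # ms -> s, then m/s * s = m


# Assumed values for illustration only.
cloud = distance_during_delay(100, 200)  # 200 ms cloud round trip
edge = distance_during_delay(100, 10)    # 10 ms on-device inference
print(f"cloud: {cloud:.2f} m, edge: {edge:.2f} m")  # cloud: 5.56 m, edge: 0.28 m
```

Under these assumptions, the car covers more than five meters before a cloud response arrives, versus under 30 centimeters with local inference.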

AI coprocessors, chips that can execute machine learning algorithms, can help alleviate this shortage of intelligence at the edge, either integrated into circuit boards or packaged as plug-and-play deep learning devices. The market is still new, but the results look promising.

Movidius, a hardware company acquired by Intel in 2016, has been dabbling in edge neural networks for a while, including obstacle navigation for drones and smart thermal vision cameras. Movidius’ Myriad 2 vision processing unit (VPU) can be integrated into circuit boards to provide low-power computer vision and image signal processing capabilities at the edge.

More recently, the company announced its deep learning compute stick, a USB 3.0 dongle that can add machine learning capabilities to computers, Raspberry Pis and other computing devices. The stick can be used individually or in groups for more power, which makes it well suited to AI applications that are independent of the cloud, such as smart security cameras, gesture-controlled drones and industrial machine vision equipment.

Both Google and Microsoft have announced their own specialized AI processing units. However, for the moment, they don’t plan to deploy them at the edge and are using them to power their cloud services. But as the market for edge AI grows and other players enter the space, you can expect them to make their hardware available to manufacturers.

Algorithms that rely on less data

Currently, AI algorithms that perform tasks such as recognizing images require millions of labeled samples for training. A human child accomplishes the same with a fraction of the data. One of the possible paths for bringing machine learning and deep learning algorithms closer to the edge is to lower their data and computation requirements. And some companies are working to make it possible.

Last year Geometric Intelligence, an AI company that was renamed Uber AI Labs after being acquired by the ride-hailing company, introduced machine learning software that is less data-hungry than the more prevalent AI algorithms. Though the company didn’t reveal the details, performance charts show that XProp, as the algorithm is named, requires far fewer samples to perform image recognition tasks.

Gamalon, an AI startup backed by the Defense Advanced Research Projects Agency (DARPA), uses a technique called “Bayesian Program Synthesis,” which employs probabilistic programming to reduce the amount of data required to train algorithms.

In contrast to deep learning, where you have to train the system by showing it numerous examples, BPS learns with few examples and continually updates its understanding with additional data. This is much closer to the way the human brain works.
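The internals of BPS aren’t covered here, so the sketch below swaps in a much simpler Bayesian technique, Beta-Bernoulli conjugate updating, purely to illustrate the general idea of starting from a prior belief, forming an estimate from a few examples and refining it as more data arrives. The `BetaBelief` class is hypothetical and is not Gamalon’s method.

```python
from dataclasses import dataclass


@dataclass
class BetaBelief:
    """Belief about an unknown yes/no rate p, held as a Beta(a, b)
    distribution; each observation updates it in closed form."""
    a: float = 1.0  # pseudo-count of positive observations (uniform prior)
    b: float = 1.0  # pseudo-count of negative observations

    def update(self, observation: bool) -> None:
        # Conjugate update: a positive example increments a, a negative one b.
        if observation:
            self.a += 1
        else:
            self.b += 1

    @property
    def mean(self) -> float:
        # Posterior mean estimate of p.
        return self.a / (self.a + self.b)


belief = BetaBelief()
for obs in [True, True, False]:  # only three examples
    belief.update(obs)
print(round(belief.mean, 3))     # 0.6

for obs in [True] * 7:           # more data arrives later
    belief.update(obs)
print(round(belief.mean, 3))     # 0.833
```

The model gives a usable estimate after three examples and keeps refining it without retraining from scratch; real probabilistic programming systems are, of course, far more involved than this.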

BPS also requires far less computing power. Instead of arrays of expensive GPUs, Gamalon can train its models on the same processors found in an iPad, which makes it more feasible for the edge.

Edge AI will not be a replacement for the cloud, but it will complement it and create possibilities that were inconceivable before. Though nothing short of general artificial intelligence will be able to rival the human brain, edge computing will enable AI applications to function in ways that are much closer to the way humans do.