How often do servers really fail? If Backblaze’s 61,523 operational hard drives are any indication, not often.

On average, Backblaze reports its drives have a fail rate of 1.84 percent in Q1 2016. That’s 266 failures with over 5 million days in operation.

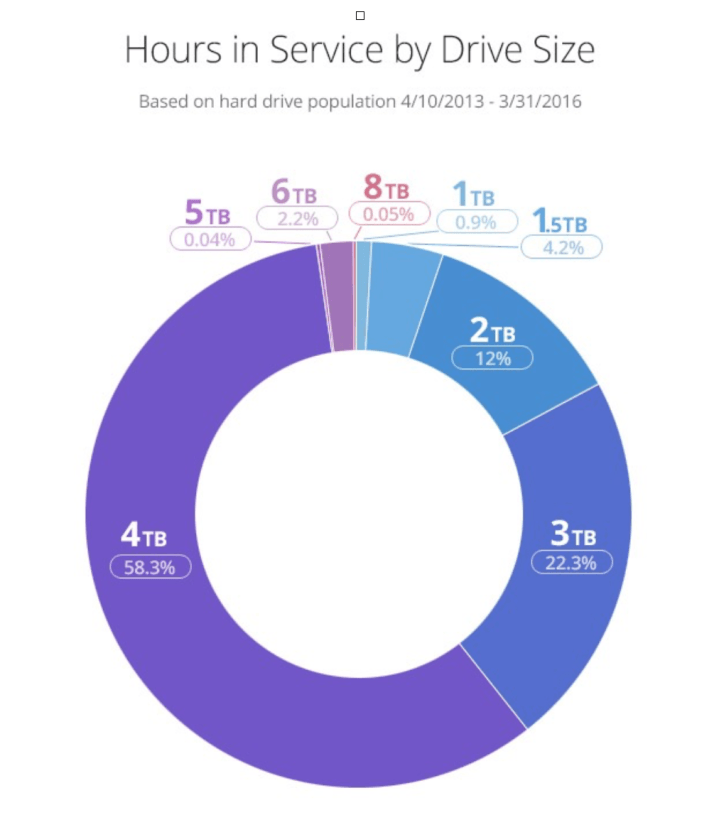

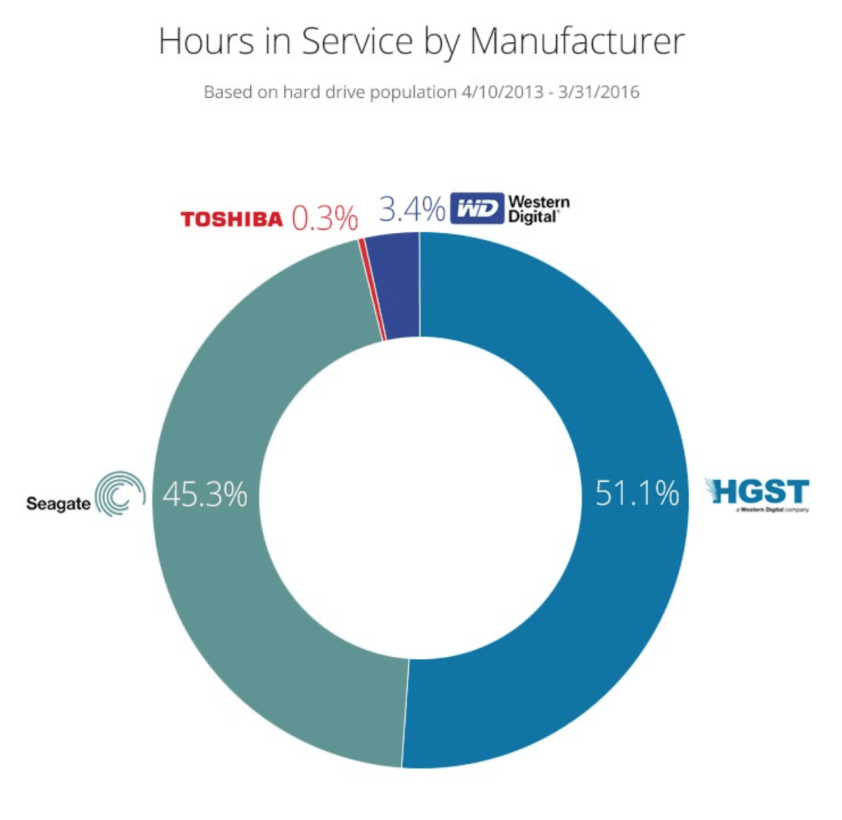

Cumulatively, Backblaze drives have over one billion hours of operation to date. Its most used drive was a 4TB Seagate, while the 2 and 3TB HGST have a slightly better lifespan.

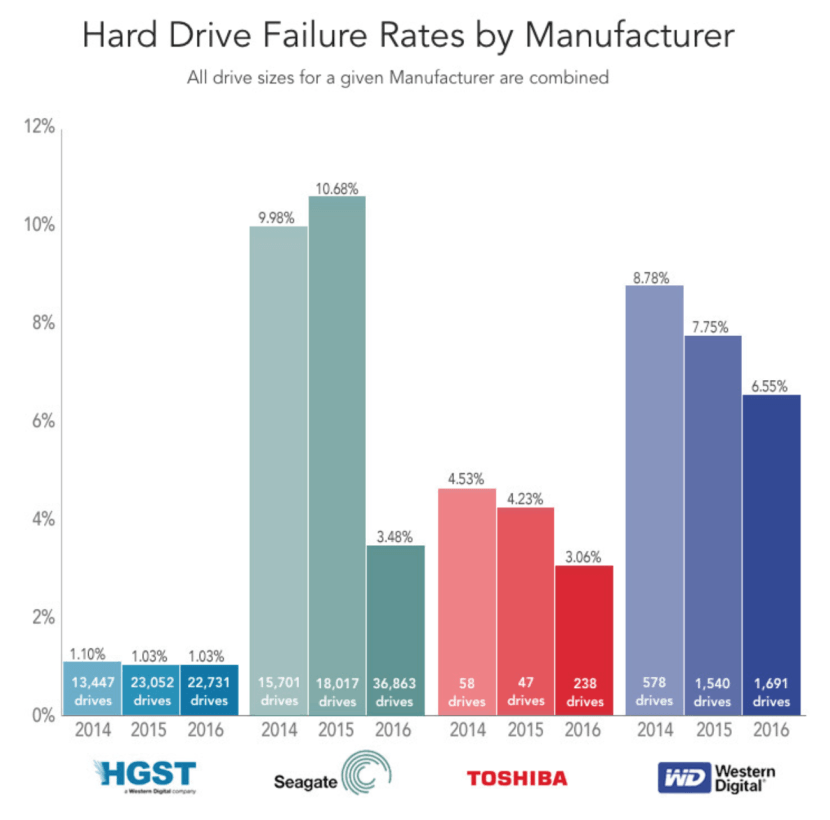

Western Digital drives failed the most, while Seagate and Toshiba have roughly the same drop-dead ratio. HGST was far and away the best drive on Backblaze’s test; it routinely hovers around one percent failure rate.

Backblaze notes it uses three components when considering failure: a drive that won’t spin or connect to the OS, one that will not sync (or remain synced) to a RAID as well as some in-house analytics.

Backblaze obviously isn’t the only horse in the race when considering servers, but it’s a good look at best practices for those interested in building their own server. If your app or service depend on it, looks like HGST may be the way to go.

Get the TNW newsletter

Get the most important tech news in your inbox each week.