While we’ve previously seen researchers train artificial intelligence algorithms to transform your crappy doodles into atrocious cat monsters, this hardly shows the true potential of the technology – though it certainly is a fun way to get people interested.

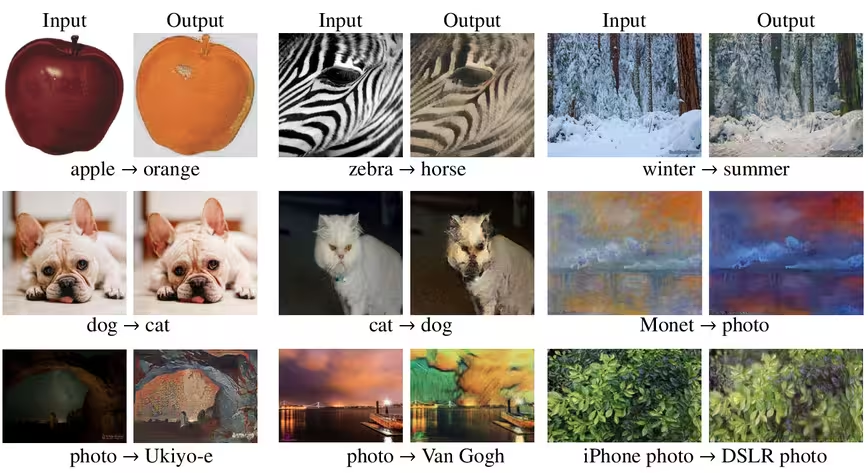

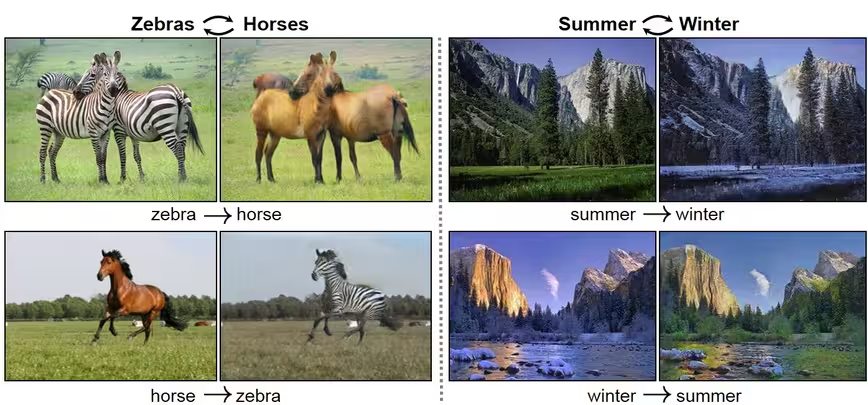

But now the researchers behind the AI model powering the doodle-to-cat-monster tool are back with another impressive image manipulation implementation that lets you turn horses into zebras, apples into oranges, winters into summers and so much more.

In a new paper, Jun-Yan Zhu and Taesung Park from the University of California, Berkeley lay out a new model that transforms images in a ‘cycle consistent’ way, meaning that an image translated into another style can be translated back again and should end up close to the original.
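To make the ‘cycle consistent’ idea a little more concrete, here is a minimal toy sketch in Python. It is not the researchers' code: the "images" are just short lists of numbers, and the names `to_zebra`, `to_horse` and `lossy_to_horse` are hypothetical stand-ins for the learned translators. The sketch only shows the general shape of the check: translate, translate back, and measure how far the round trip drifts from the starting image (here with a mean absolute difference, assumed for illustration).

```python
from typing import Callable, List, Sequence

Image = Sequence[float]  # toy stand-in: an "image" is a flat list of pixel values
Translator = Callable[[Image], List[float]]


def l1_distance(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)


def cycle_consistency_loss(
    g: Translator, f: Translator, xs: Sequence[Image], ys: Sequence[Image]
) -> float:
    """Round-trip error: x -> g -> f should return x, and y -> f -> g should return y."""
    forward = sum(l1_distance(f(g(x)), x) for x in xs) / len(xs)
    backward = sum(l1_distance(g(f(y)), y) for y in ys) / len(ys)
    return forward + backward


# Hypothetical toy translators (assumptions for illustration, not learned models).
def to_zebra(img: Image) -> List[float]:
    return [2.0 * p + 1.0 for p in img]


def to_horse(img: Image) -> List[float]:
    # Exact inverse of to_zebra, so the round trip is lossless.
    return [(p - 1.0) / 2.0 for p in img]


def lossy_to_horse(img: Image) -> List[float]:
    # Throws all information away, so the round trip cannot recover the input.
    return [0.0 for _ in img]


horses = [[0.0, 0.5, 1.0], [0.25, 0.75, 0.5]]
zebras = [[1.0, 2.0, 3.0], [1.5, 2.5, 2.0]]

perfect = cycle_consistency_loss(to_zebra, to_horse, horses, zebras)
broken = cycle_consistency_loss(to_zebra, lossy_to_horse, horses, zebras)

print("reversible pair:", perfect)
print("irreversible pair:", broken)
```

A pair of translators that undo each other scores zero, while a pair that loses information scores higher. In a real system the translators would be neural networks, and a penalty of this kind would be one of several terms used during training.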

For example, the algorithm could be used to turn a zebra into a horse and vice versa.

But this is hardly the only thing the improved algorithm can do.

What makes the new model particularly powerful and versatile is that it combines the capabilities of several artificial intelligence methods in a single system.

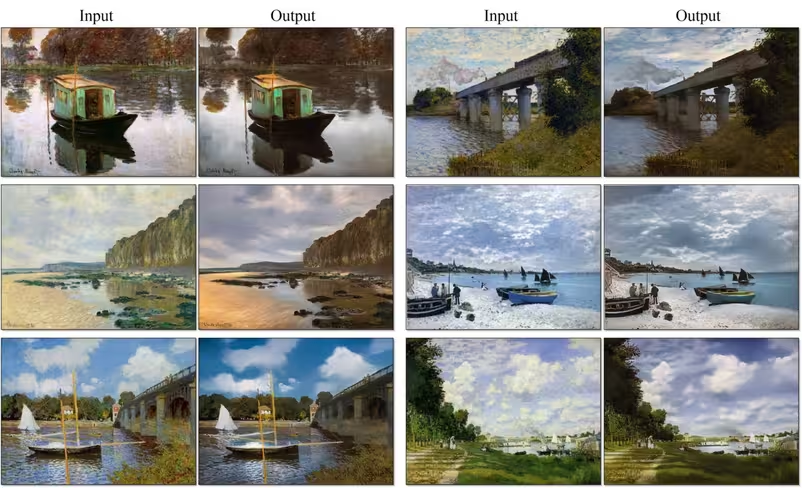

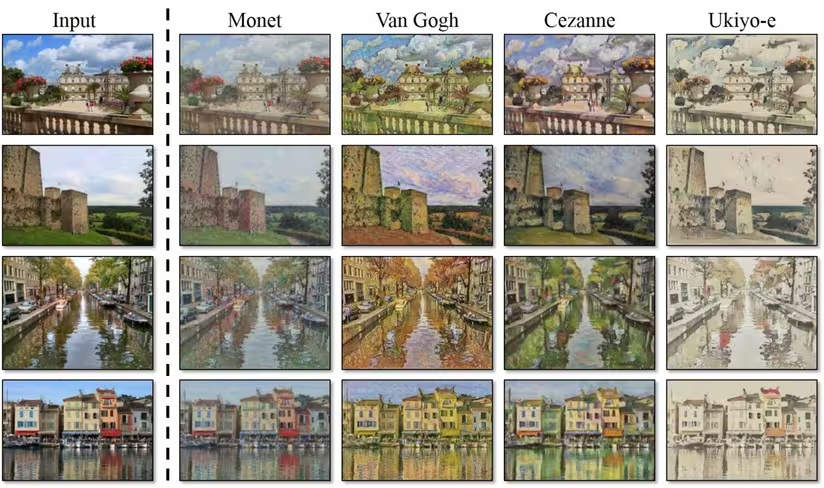

This means the algorithm can be easily tweaked to perform a multitude of different tasks and transformations. For instance, it could turn paintings into photos as easily as it could turn photos into paintings (and then even add various styles to them).

Here are a few more examples:

While the technology is capable of producing pretty amazing results, it still needs some fine-tuning.

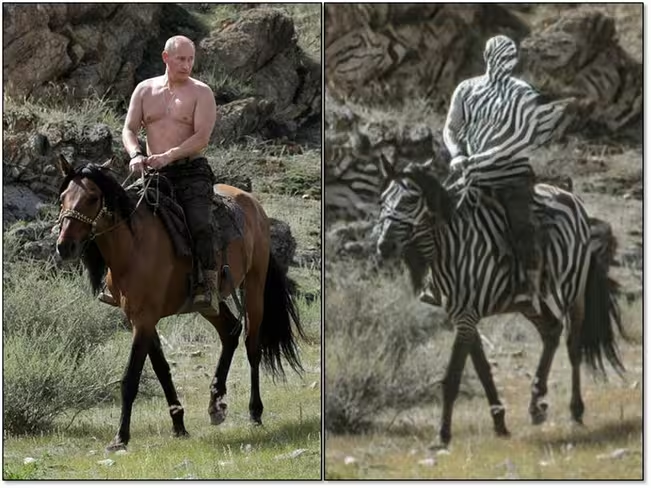

As the researchers explain, the model “does not work well when a test image looks unusual compared to training images.”

But even in these cases, the results tend to be quite entertaining.

For those curious to peruse the code and dig into the documentation, the researchers have made their work available on GitHub.
