It’s safe to assume you use at least one of Google’s many services (Search, Gmail, Google+, Maps, YouTube, etc.), unless you’re still sifting your way through Yahoo!, MapQuest, Hotmail, AOL, or another ghost of the Internet’s past.

Recently, while visiting one of Google’s many sites, you may have noticed the company is giving its terms and conditions a structural overhaul, a change it announced in late January. A few days later, Google sent a letter to Congress explaining the reasons for its new privacy policy, which is succinctly described as “One Policy, One Google Experience.” The new policy goes into effect today, the first of March, 2012.

While I don’t usually bother myself with privacy policies, I figured the all-powerful Google was a good place to start. Google even nudged me a bit with its notice: ‘We’re changing our privacy policy’…‘this stuff matters.’

I suppose, if any change in privacy policy should get my attention, it’s Google’s. Over 350 million people have their emails—perhaps an individual’s most private resource in the information age—sitting on Google’s servers. The company knows what we search for on the Internet—a service that is used over 400 million times a day, globally. If that’s not enough, Google aims to know where we go (Google Maps), who our friends are (Google+), what we read (News and Books) and what we want to buy (Shopping).

So, for the first time in my life, I read a privacy policy. What I found initially seemed trivial but, on reflection, could have a large and deep-rooted effect on news, popular culture, society and information as a whole.

The common argument, as voiced by Google’s former CEO Eric Schmidt (pictured right), “If you have something that you don’t want anyone to know, maybe you shouldn’t be doing it in the first place,” is a bit passé, especially from a massive, publicly traded company that’s so popular it’s become a verb.

But let’s get serious, Mr. Schmidt: the majority of the general public isn’t well-behaved, and your website just happens to be the most convenient way to find smut, marijuana-growing tips and lock-picking tutorials, among other illicit things. Algorithms are the driving force behind the company’s features, so it’s not like Google’s employees are sifting through your emails (it’s up to you which scenario you find more disturbing).

If it is indeed an algorithm choosing the ads that surround my email, it’s quite intelligent, or, shall we say, well-written. Recently, while I was exchanging revisions with an editor, Google bordered my email with advertisements for law school even though, curiously, neither law nor school was ever mentioned in our exchange. I scanned the thread and found nothing that pertains to law school, which led me to think the ad was a recommendation. It was almost as if Google were a skeptical parent talking to its wide-eyed kid who had just received an undergraduate degree. ‘You want to be a what?’ Google says, ‘A writer? Why don’t you try to find something with more… stability. How about law school?’

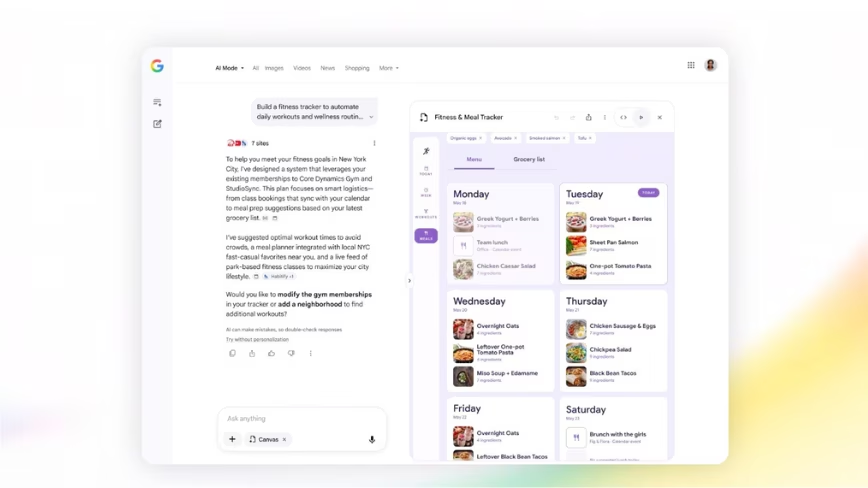

The major change that takes effect March 1st as a result of the new policy seems harmless enough: personalized searches. Google says, “We can… tailor your search results – based on the interests you’ve expressed in Google+, Gmail, and YouTube. We’ll better understand which version of Pink or Jaguar you’re searching for and get you those results faster.”

In many ways, I’m sure this will be a more streamlined and convenient browsing experience. Notice how the examples they give us are ‘pink’ and ‘jaguar’. It will be exciting to find out if Google thinks I’m looking for pink the color or the artist known as Pink. Perhaps I’ll type in ‘Seal’ and see what it gives me. Does Google think I’m the kind of guy who would be interested in the animal, the military division or Heidi Klum’s ex-husband? With trivial searches like these, surely you’ll be able to find your mid-90s mediocre pop star and luxury car much, much faster. But what about loaded searches? Like ‘September 11th’? Or ‘marijuana’? Or ‘homosexuality’? Or ‘Christianity’?

We should all see the same search results. Why? Let’s say I’m really into conspiracy theories and I like to read about them on the Internet or watch documentaries about them on YouTube. Does that mean that the first links under my ‘9.11.01’ search should suggest the attacks of September 11th were an inside job? My pothead buddy will see the therapeutic effects of smoking weed. My homophobic uncle will find discriminatory propaganda. Maybe my creepy neighbor is really into kiddie-porn. What should be at the top of his Google page next time he searches ‘Christianity’?

Now, it’s not like Google’s search algorithm presents us with some sort of eternal truth about the world based on the information found on the Web. But it seems logical that everyone should see the same results. Recall for a moment, if you can, how we used to learn what was happening in this world: through this archaic process called ‘news’ (I don’t actually remember this; I’ve only heard stories). Things would happen in the world, just as they do now. Then an individual would pick a source of news and let its editor feed them the information that the editor deemed relevant, like a human filter on current events. (I always imagine this hypothetical editor as a sweat-covered male, hunched over a typewriter with a cigarette hanging on the edge of his lips. This whole scene takes place with venetian blinds in black and white, of course.)

Maybe the news would tell you about something happening in Africa. Perhaps it would tell you about a dog that fell into a well. Do you care about Africa? What about dogs or wells? But it didn’t matter, because the news was passive. These sweaty, male smokers would pick content and it would wash over you. Then the Internet came around and a copious amount of news and information was available at your fingertips. News became dynamic—an individual would actively find the information they cared about. There was still a filter but this time it wasn’t a human, it was an equation. Would you rather have your information filtered by a human with biases and morals or an unbiased algorithm that is incapable of comprehending ethics? While there is no right answer to that question, it’s still a question worth answering.

These are things to consider as we shift from one type of filter to another. In this new era, maybe I’ll search for ‘Artificial Intelligence Ethics’ and my buddy will search for the same thing. Maybe his top results will be from a legitimate source, like a philosophy professor or a software engineer, because he’s well-read. Maybe those results will be on page five of my Google results while The Matrix fan forum will be pushed to the top because of my sci-fi movie obsession. I’ll never read what the hypothetical philosopher and engineer wrote and he won’t be privy to what I see. Why? How often do you make it to the fifth page on Google? And as we build up a virtual reputation with Google, it will tell us what we “want” to hear and show us what we “want” to see.

We are in an exciting new era where technology endlessly accelerates, where innovation is omnipresent, where the world is ever-changing and where scientific knowledge just may help us escape from the mess we’ve created. How did we get here? By having our egos stroked? Our ideologies confirmed? By surrounding ourselves with an agreeable audience? Or has our society progressed through challenges? Disputes? Skeptics? Have we advanced because we’ve had to prove that what we thought was right is, indeed, right? What good is a society where liberals only see liberal righteousness or where conservatives are only exposed to conservative propaganda?

There’s really nothing we can do. Google is going to change its privacy policy and we’re going to keep using its products, simply because it’s incredible and addictive to have so much information pouring out of a screen. Google has us by the proverbial (digital) balls. But in a very short while it’s going to be essential that we become more responsible searchers, more savvy web surfers: Interneters who actively search for things they disagree with and Googlers who get to the fifth page of their search results. Because soon the world’s information will be filtered through the habits and dependencies of the most biased, unforgiving and scary editor of all: ourselves.

➤ One Policy, One Google Experience

Everett Collection via shutterstock
