AI is having a big impact on photo editing, but the results are proving divisive.

Proponents say that AI unleashes new artistic ideas and cuts the time creators spend on monotonous work. Critics, however, argue that the techniques distort reality and propagate an artificial homogeneity.

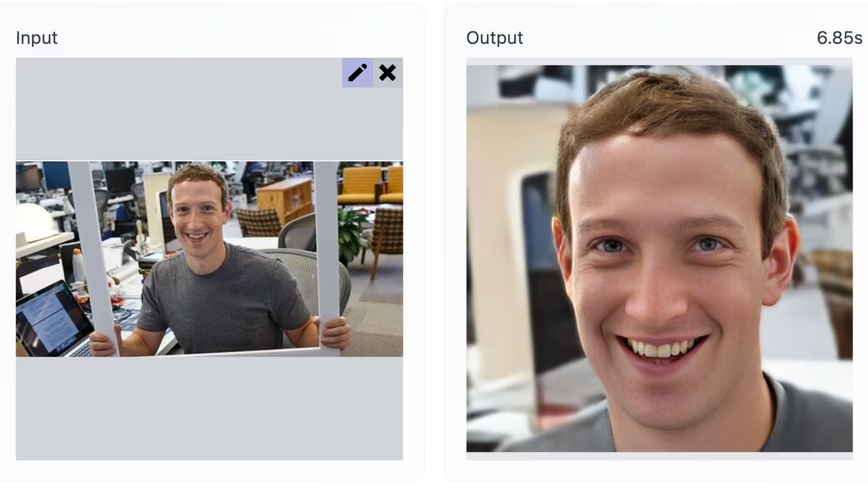

A new entry to the debate is GFP-GAN. The system gives low-quality images a high-resolution revamp — and the results can be impressive.

![Comparisons with state-of-the-art face restoration methods: HiFaceGAN [67], DFDNet [44], Wan et al. [61] and PULSE [52]](https://media.thenextweb.com/2021/12/Screenshot-2021-12-20-at-18.05.26.avif)

The system, which was created by researchers at the Tencent ARC Lab in China, uses a generative adversarial network (GAN) architecture to enhance faces in old, damaged, and unclear photos. The features are then restored and refined to generate an upscaled image.

“While previous methods struggle to restore faithful facial details or retain face identity, our proposed GFP-GAN achieves a good balance of realness and fidelity with much fewer artifacts,” the study authors wrote in their paper. “In addition, the powerful generative facial prior allows us to perform restoration and color enhancement jointly.”

The research team has also kindly created a demo for their system — which gave us a chance to put their claims to the test.

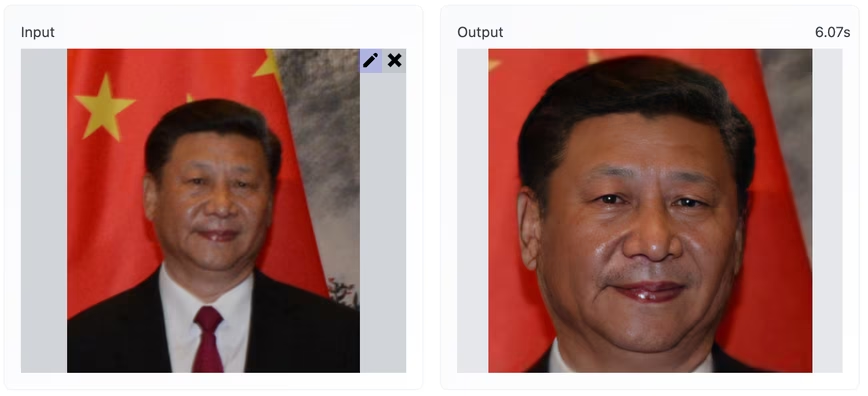

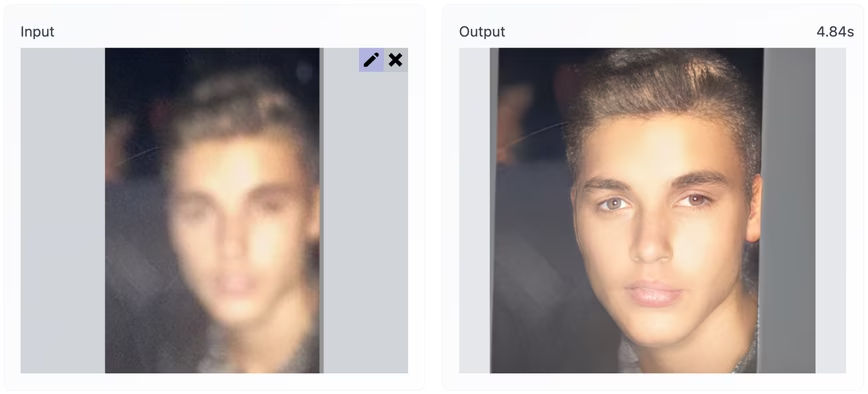

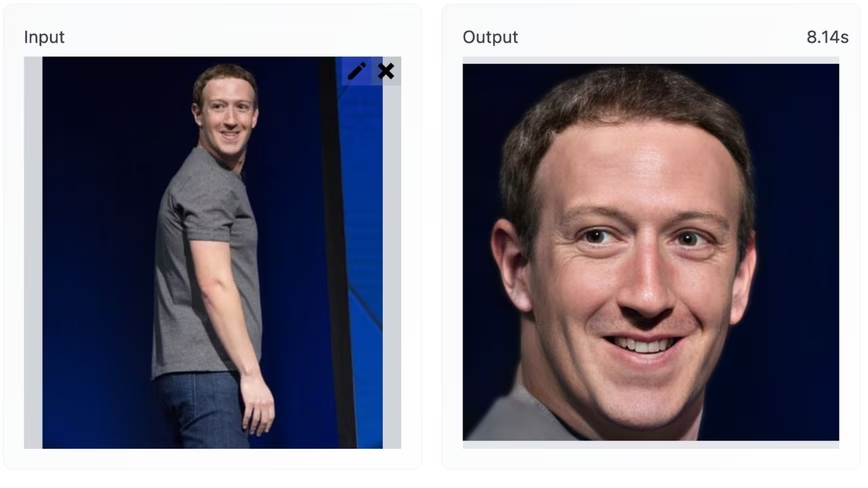

In our brief and highly unscientific trial, GFP-GAN was most adept with images that had only minor blemishes. Unlike many AI photo tools, it also showed similar accuracy across a diverse range of subjects. The problem was that this accuracy was highly inconsistent from one image to the next.

Unsurprisingly, this issue was most pronounced when the tool was used on extremely blurry images. However, even high-resolution inputs led GFP-GAN down some horrifying uncanny valleys.

Our experiment left me on the fence in the debate over AI photo editing. I can see the creative potential, but I wouldn’t trust it to reflect reality.

Perhaps that isn’t such a bad thing. As privacy professionals have warned, cameras equipped with AI image enhancers could be used to track entire populations.
