Welcome to the first article in TNW’s guide to the AI apocalypse. In this series we’ll examine some of the most popular doomsday scenarios prognosticated by modern AI experts.

You can’t have a discussion about killer robots without invoking the Terminator movies. The franchise’s iconic T-800 robot has become the symbol for our existential fears concerning today’s artificial intelligence breakthroughs. What’s often lost in the mix, however, is why the Terminator robots are so hell-bent on destroying humanity: because we accidentally told them to.

This is a concept called misaligned objectives. The fictional people who made Skynet (spoiler alert if you haven’t seen this 35-year-old movie), the AI that powers the Terminator robots, programmed it to safeguard the world. When it becomes sentient and they try to shut it down, Skynet decides that humans are the biggest threat to the world and goes about destroying them for six films and a very underrated TV show.

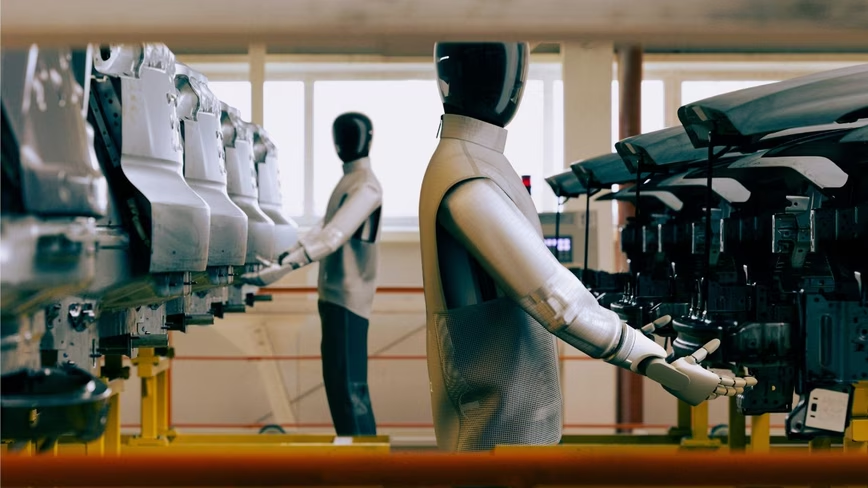

The point is: nobody ever intends for robots that look like Arnold Schwarzenegger to murder everyone. It all starts off innocently enough – Google’s AI can now schedule your appointments over the phone – then, before you know it, we’ve accidentally created a superintelligent machine and humans are an endangered species.

Could this happen for real? There are a handful of world-renowned AI and computer experts who think so. Oxford philosopher Nick Bostrom’s Paperclip Maximizer uses the arbitrary example of an AI whose purpose is to optimize the process of manufacturing paperclips. Eventually the AI turns the entire planet into a paperclip factory in its quest to optimize its processes.
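To make the idea concrete, here is a minimal toy sketch of a misaligned objective in Python. Everything in it is an illustrative assumption rather than anything Bostrom specified: the resource names, the `convert` helper, and the greedy `run_maximizer` loop are all hypothetical. The only thing the objective counts is paperclips, so the optimizer never sees a reason to stop.

```python
def paperclip_objective(world):
    # Hypothetical objective: the only thing the system was told to value.
    return world["paperclips"]


def convert(world, resource):
    """Return a copy of the world with one unit of `resource` made into a paperclip."""
    new_world = dict(world)
    new_world[resource] -= 1
    new_world["paperclips"] += 1
    return new_world


def run_maximizer(world, objective, max_steps=1000):
    """Greedily apply whichever single conversion most improves the objective."""
    for _ in range(max_steps):
        options = [
            convert(world, name)
            for name in world
            if name != "paperclips" and world[name] > 0
        ]
        options.append(world)  # doing nothing is always allowed
        best = max(options, key=objective)
        if objective(best) <= objective(world):
            break  # no conversion helps, so stop
        world = best
    return world


# Made-up starting resources for illustration only.
start = {"steel": 5, "farmland": 3, "cities": 2, "paperclips": 0}

misaligned = run_maximizer(start, paperclip_objective)
print(misaligned)  # {'steel': 0, 'farmland': 0, 'cities': 0, 'paperclips': 10}


def broader_objective(world):
    # Assumed weights: losing farmland or a city costs far more than a paperclip gains.
    return world["paperclips"] + 10 * world["farmland"] + 10 * world["cities"]


careful = run_maximizer(start, broader_objective)
print(careful)  # {'steel': 0, 'farmland': 3, 'cities': 2, 'paperclips': 5}
```

The first run consumes the farmland and cities because nothing in its objective says they matter. The second run stops at the steel only because someone remembered to write down what else we care about, which is exactly the part that is hard to get right in the real world.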

Stephen King’s “Trucks” imagines a world where a mysterious comet’s passing not only gives every machine on Earth sentience, but also the ability to operate without a recognizable power source. And – spoiler alert if you haven’t read this 46-year-old short story – it ends with the machines destroying all humans and paving the entire planet’s surface.

A recent New York Times op-ed from AI expert Stuart Russell began with the following paragraph:

The arrival of superhuman machine intelligence will be the biggest event in human history. The world’s great powers are finally waking up to this fact, and the world’s largest corporations have known it for some time. But what they may not fully understand is that how A.I. evolves will determine whether this event is also our last.

Russell’s article – and his book – discuss the potential for catastrophe if we don’t get ahead of the problem and ensure we develop AI with principles and motivations aligned with our human objectives. He believes we need to create AI that always remains uncertain about any ultimate goals so it will defer to humans. His fear, it seems, is that developers will continue to brute-force learning models until a Skynet situation happens where a system ‘thinks’ it knows better than its creators.
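Why would uncertainty make a machine defer? A rough toy model in Python can illustrate the reasoning. This is a sketch under stated assumptions, not Russell’s actual formulation: the machine holds a belief about how good its proposed action is for humans, the human is assumed to know the true answer and to veto anything harmful, and the names `expected_value` and `best_choice` are hypothetical.

```python
def expected_value(belief, choice):
    """Expected payoff of a choice.

    belief: list of (probability, utility) pairs describing how good the
    machine thinks its proposed action might be for humans.
    """
    if choice == "act":
        # Go ahead regardless of what the human would say.
        return sum(p * u for p, u in belief)
    if choice == "defer":
        # Assumption: the human knows the true utility and blocks harmful actions.
        return sum(p * max(u, 0.0) for p, u in belief)
    if choice == "off":
        # Shutting down does neither good nor harm.
        return 0.0
    raise ValueError(f"unknown choice: {choice}")


def best_choice(belief):
    # Ties are broken in favour of the earliest entry, so a machine that is
    # sure of itself has no strict reason to prefer deferring over acting.
    choices = ("act", "defer", "off")
    return max(choices, key=lambda c: expected_value(belief, c))


# Made-up beliefs for illustration only.
uncertain = [(0.5, 10.0), (0.5, -20.0)]  # might help, might do serious harm
overconfident = [(1.0, 10.0)]            # certain it knows best

print(best_choice(uncertain))      # defer
print(best_choice(overconfident))  # act
```

In this toy setup the uncertain machine gains from letting a human overrule it, because the human filters out the bad outcomes. The machine that is certain it knows better gains nothing from asking, which is the Skynet-style situation described above.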

On the other side of this argument are experts who believe such a situation isn’t possible, or that it’s so unlikely that we may as well be discussing theoretical time-traveling robot assassins. Where Russell and Bostrom argue that right now is the time to craft policy and dictate research dogma surrounding AI, others think this is a bit of a waste of time. Computer science professor Melanie Mitchell, in a response to Russell’s New York Times op-ed, writes:

It’s fine to speculate about aligning an imagined superintelligent — yet strangely mechanical — A.I. with human objectives. But without more insight into the complex nature of intelligence, such speculations will remain in the realm of science fiction and cannot serve as a basis for A.I. policy in the real world.

Mitchell isn’t alone. Here’s a TNW article on why Facebook’s AI guru, Yann LeCun, isn’t afraid of AI with misaligned objectives.

The crux of the debate revolves around whether machines will ever have the kind of intelligence that makes it impossible for humans to shut them down. If we believe that some ill-fated researcher’s eureka moment will somehow imbue a computer system with the spark of life, or a sort of master algorithm will emerge that allows machine learning systems to exceed the intellectual abilities of biological intelligence, then we must admit that there’s a chance we could accidentally create a machine “god” with power over us.

For the time being it’s important to remember that today’s AI is dumb. Compared to the magic of consciousness, modern deep learning is mere prestidigitation. Computers don’t have goals, ideals, and purposes to keep them going. Without some incredible breakthrough, this is unlikely to change.

The bad news is that big tech research departments and government policy-makers don’t appear to be taking the existential threats that AI could create seriously. The current gold rush may not last forever, but as long as funding an AI startup is essentially a license to print money, we’re unlikely to see politicians and CEOs push for regulation.

This is scary because it’s a perfect scenario for an accidental breakthrough. While it’s true that AI technology has been around for a long time and researchers have promised human-level AI for decades, it’s also true that there have never been more money or people devoted to the problem than there are now.

For better or worse, it looks like our only protection against superhuman AI with misaligned objectives is the fact that creating such a powerful system is out of reach for current technology.

